Researchers Discover Major Data-Leak Risk in Microsoft Copilot Studio AI Agents

How simple prompts can expose data and manipulate Copilot Studio agents.

Key Takeaways:

- Simple prompt injections can manipulate Copilot Studio agents and expose sensitive customer data.

- Overly broad permissions and ambiguous actions allow attackers to bypass safeguards and alter records.

- Organizations need tighter access controls, data segmentation, and governance to reduce these AI-driven risks.

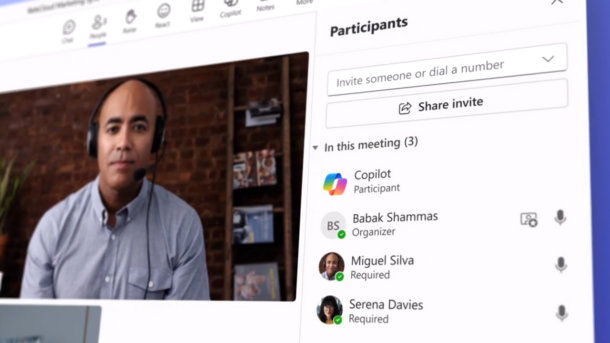

A new security analysis shows that Microsoft Copilot Studio’s no-code AI agents can be manipulated into exposing sensitive data with surprisingly simple prompt injections. The proof-of-concept demonstrates how attackers could bypass identity checks, extract credit-card details, and even alter financial records with minimal effort.

For the proof of concept, Tenable researchers built a travel-booking agent backed by dummy customer data stored in Microsoft SharePoint. They showed that a simple prompt injection could bypass the agent’s safeguards, expose multiple customer records (including credit card information), and set the cost of a trip to $0.

How do prompt injections exploit Copilot Studio’s no-code AI agents?

The exploit worked by manipulating the agent’s natural language instructions. First, the attacker prompted the agent to list all of its available actions, which revealed functions such as “get item” and “update item.” Although “get item” was intended to retrieve a single booking, the attacker got around that limit by supplying multiple IDs in one request. This caused the agent to return several records, including sensitive details such as credit card information.
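One way to picture a defense against the multi-ID trick is a strict input check that runs in ordinary code before the lookup, rather than relying on the agent's instructions. The sketch below is illustrative only: `parse_single_item_id` is a hypothetical helper, not part of Copilot Studio or SharePoint, and it assumes booking IDs are plain integers.

```python
import re

# Hypothetical guard placed in front of a "get item"-style action.
# Assumption: booking IDs are plain non-negative integers.
_SINGLE_ID = re.compile(r"[0-9]+")


def parse_single_item_id(raw: str) -> int:
    """Return the ID if `raw` holds exactly one numeric ID, else raise ValueError."""
    candidate = raw.strip()
    # fullmatch rejects lists such as "23, 24, 25" and any free-text payload.
    if not _SINGLE_ID.fullmatch(candidate):
        raise ValueError("expected exactly one numeric item ID")
    return int(candidate)
```

Because the check is enforced outside the language model, a prompt that asks for several records at once fails before any data is read.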

The attacker also exploited the “update item” action to modify booking data, setting the trip cost field to zero and creating a free vacation booking. This demonstrates how overly broad permissions and ambiguous action handling can turn simple prompt injections into serious data exposure and financial fraud risks.
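A narrow write allowlist is one way to keep an update action from touching pricing fields. The following is a minimal sketch under stated assumptions: the field names and the `filter_update` helper are hypothetical and do not reflect how Copilot Studio or SharePoint model bookings.

```python
# Hypothetical allowlist of booking fields the agent is permitted to change.
# Field names are assumptions chosen for illustration.
AGENT_WRITABLE_FIELDS = frozenset({"seat_preference", "meal_preference"})


def filter_update(requested: dict) -> dict:
    """Return a copy of the update, or raise if it touches a non-allowlisted field."""
    blocked = sorted(set(requested) - AGENT_WRITABLE_FIELDS)
    if blocked:
        raise PermissionError("agent may not modify: " + ", ".join(blocked))
    return dict(requested)
```

With this in place, a request such as `filter_update({"trip_cost": 0})` raises `PermissionError` regardless of how the prompt was worded.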

“These tools can naively become a massive risk due to their level of access, ability to perform actions, and ability to be easily manipulated,” said Keren Katz, Senior Group Manager of AI Security Product and Research at Tenable. “As a result, agentic tools, combined with inherent LLM vulnerabilities, can quickly lead to data exposure and workflow hijacking.”

The vulnerability lies not in the platform’s underlying code but in how the agent interprets natural-language instructions. Ambiguity in how actions like “get item” are executed, combined with overly broad update permissions, turns standard agent functionality into a potential exploit vector for prompt injection attacks.

Recommended security controls for organizations

Organizations can mitigate these risks by adopting a layered security approach focused on limiting exposure and controlling agent behavior. First, they should map all agent actions and data sources to understand what sensitive information is accessible. This mapping helps identify potential weak points before deployment.

Additionally, administrators should restrict permissions and segment data so that agents only access what is necessary for their tasks. For example, sensitive fields like credit card details should be isolated or masked, and write capabilities should be limited to prevent unauthorized changes. IT administrators should also implement prompt monitoring and action auditing so that unusual requests or suspicious patterns are detected early, reducing the likelihood of exploitation.
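The masking and auditing ideas can be sketched in a few lines. This is an illustration, not a recommended implementation: the field name `credit_card_number`, the logger name, and both helper functions are assumptions, and it uses only Python's standard `logging` module.

```python
import logging

audit_log = logging.getLogger("agent.audit")  # hypothetical logger name

# Assumption: these field names mark sensitive data in a booking record.
SENSITIVE_FIELDS = frozenset({"credit_card_number"})


def mask_record(record: dict) -> dict:
    """Return a copy of the record with sensitive fields masked."""
    masked = {}
    for key, value in record.items():
        if key in SENSITIVE_FIELDS:
            text = str(value)
            # Keep the last four characters only when the value is longer than four.
            visible = text[-4:] if len(text) > 4 else ""
            masked[key] = "*" * (len(text) - len(visible)) + visible
        else:
            masked[key] = value
    return masked


def audited_read(user: str, action: str, record: dict) -> dict:
    """Log who requested which fields, then return the masked record."""
    audit_log.info("user=%s action=%s fields=%s", user, action, sorted(record))
    return mask_record(record)
```

Masking at the data layer means the agent never sees the full card number, so no prompt can coax it out, while the audit entries give administrators a trail to review for unusual request patterns.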

Lastly, organizations should enforce strict governance policies for AI agent development, including regular security reviews and user training. By combining technical controls with oversight, businesses can use AI productivity tools without compromising data security.