Understanding the Windows Disk Storage Architecture

Troubleshooting Windows disk storage issues can be challenging. It often helps to understand what’s under the hood with regard to I/O requests, device drivers, and redundant storage architectures. This article explores the fundamental Windows storage components and how they interact to provide high-performance, reliable data storage.

In a previous article, “Exploring Windows Storage Technologies”, we discussed the different storage architectures, including DAS-, NAS-, and SAN-based solutions. That article provided a high-level overview of the various ways storage can be configured on Windows servers. We will now dig deeper into what happens when a user issues a read or write request to a disk, and the potential failures that can occur along the way.

The I/O Request

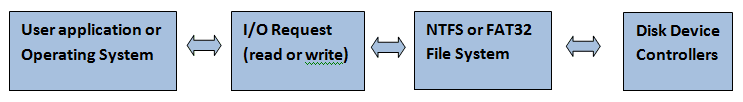

The process of reading data from or writing data to a disk begins with an I/O request from a user application or a component of the operating system. This request is typically a READ or WRITE request to retrieve or store data in a particular file. Files are stored on disks using a file system format such as FAT32 or NTFS. At the lowest level, the disk controllers read or write the actual bits on the physical media. As you can see below, an I/O request must travel through several layers of the Windows storage architecture.
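To make the idea of a READ or WRITE request concrete, here is a minimal sketch of a user application issuing one of each against a temporary file. It uses only Python's standard `os` and `tempfile` modules; the function name `write_then_read` is an illustrative assumption, and the code says nothing about how Windows services the requests internally.

```python
import os
import tempfile


def write_then_read(payload: bytes) -> bytes:
    """Issue one WRITE and one READ request against a temporary file."""
    fd, path = tempfile.mkstemp()          # create and open a scratch file
    try:
        os.write(fd, payload)              # WRITE request: store the data
        os.fsync(fd)                       # ask the OS to flush cached data to the device
        os.lseek(fd, 0, os.SEEK_SET)       # move back to the start of the file
        return os.read(fd, len(payload))   # READ request: retrieve the data
    finally:
        os.close(fd)
        os.remove(path)                    # clean up the scratch file
```

Each `os.write` and `os.read` call here corresponds to the kind of request that enters the top of the storage architecture described in this article.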

Storage Drivers

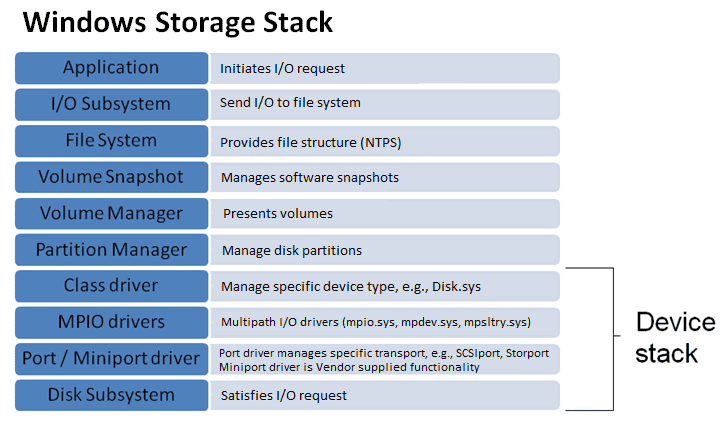

To accommodate the different layers of the storage architecture, kernel-mode software components called drivers are used to process I/O requests. There are several types of storage drivers, including class drivers, port drivers, miniport drivers, and filter drivers. There is also a group of drivers that provides fault tolerance by using multiple paths or redundant storage technology to avoid a single point of failure. The collection of drivers used to process an I/O request is referred to as the storage stack. The following diagram illustrates the Windows storage stack.

To better understand the flow, let’s trace a typical “read” request through the Windows storage stack. First, a user application initiates the I/O request with a system service call, which reaches the NTFS file system driver (ntfs.sys). The NTFS file system passes the request to any filter drivers that may be loaded. A filter driver intercepts I/O requests and performs additional checks or functions; anti-virus and volume snapshot drivers are common examples.
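The interception behavior of filter drivers can be pictured with a small toy model. The sketch below is purely conceptual: `IoRequest`, `audit_filter`, `scan_filter`, and `pass_through_filters` are hypothetical names invented for illustration, and real filter drivers are kernel-mode components, not Python functions.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class IoRequest:
    """A toy stand-in for an I/O request; not a real Windows structure."""
    operation: str                                   # "READ" or "WRITE"
    path: str                                        # file the request targets
    notes: List[str] = field(default_factory=list)   # what each filter did


# A filter inspects the request and returns True to let it continue.
Filter = Callable[[IoRequest], bool]


def audit_filter(request: IoRequest) -> bool:
    """Hypothetical filter that records every request and never blocks."""
    request.notes.append(f"audit: {request.operation} {request.path}")
    return True


def scan_filter(request: IoRequest) -> bool:
    """Hypothetical anti-virus style filter that blocks flagged files."""
    if request.path.endswith(".infected"):
        request.notes.append("scan: blocked")
        return False
    request.notes.append("scan: clean")
    return True


def pass_through_filters(request: IoRequest, filters: List[Filter]) -> bool:
    """Run each filter in order; stop at the first one that rejects."""
    for check in filters:
        if not check(request):
            return False
    return True
```

Calling `pass_through_filters(IoRequest("READ", "report.docx"), [audit_filter, scan_filter])` lets the request continue, while a path ending in `.infected` is stopped by the second filter before it reaches the lower layers.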

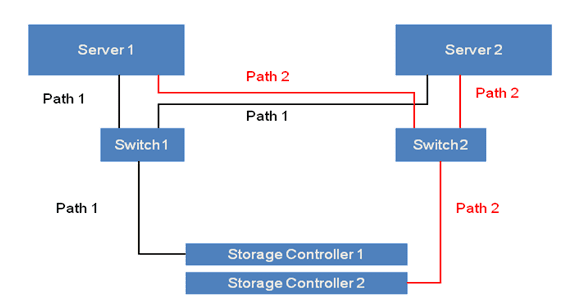

Once the filter drivers have processed the I/O request, the partition manager (partmgr.sys) locates the corresponding partition with the help of the disk class driver (disk.sys). If multiple paths are implemented, the multi-path drivers then establish the I/O path to the storage device. Microsoft provides a generic multi-path driver (mpio.sys) that other vendors build on to provide redundant paths. The following example shows a multi-path storage configuration that avoids a single point of failure.
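The idea behind redundant paths can be sketched as a simple failover choice: use the first path that is still healthy. This is a simplified illustration only. The function `choose_path` and the path names are hypothetical, and the sketch does not reproduce how mpio.sys or any vendor module actually selects a path.

```python
from typing import Dict, List, Optional


def choose_path(paths: List[str], healthy: Dict[str, bool]) -> Optional[str]:
    """Return the first path marked healthy, or None if every path is down."""
    for path in paths:
        if healthy.get(path, False):   # unknown paths are treated as down
            return path
    return None


# Hypothetical path names for a server with two host bus adapters.
PATHS = ["hba0->switchA->controller0", "hba1->switchB->controller1"]
```

If the first path is marked as failed, `choose_path` falls back to the second one, which is how a redundant configuration avoids a single point of failure. Only when both paths are down does the request have nowhere to go.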

Next, the I/O request is handed off to the port driver (typically storport.sys or scsiport.sys). The port driver processes the I/O request by interfacing with the lower-level, vendor-specific miniport drivers, which implement additional vendor-specific functionality for the storage controllers. At this point, the storage controller reads the data from the disk, and the data is passed back up the storage stack to the user application.
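The full round trip of the read request traced in this section can be summarized in a few lines of code. The sketch below simply lists the layers in the order this walkthrough visits them. The names `STORAGE_STACK` and `trace_read` are assumptions made for illustration, and the entries in angle brackets are placeholders rather than real driver file names.

```python
from typing import List, Tuple

# Layers in the order the walkthrough visits them.
# Entries in angle brackets are placeholders, not real driver names.
STORAGE_STACK: List[Tuple[str, str]] = [
    ("file system", "ntfs.sys"),
    ("filter drivers", "<varies>"),
    ("partition manager", "partmgr.sys"),
    ("disk class driver", "disk.sys"),
    ("multi-path driver", "mpio.sys"),
    ("port driver", "storport.sys"),
    ("miniport driver", "<vendor specific>"),
]


def trace_read() -> List[str]:
    """List each hop of a read: down the stack, then back up with the data."""
    down = [f"down: {layer} ({driver})" for layer, driver in STORAGE_STACK]
    up = [f"up: {layer} ({driver})" for layer, driver in reversed(STORAGE_STACK)]
    return down + ["storage controller reads the data"] + up
```

Running `print("\n".join(trace_read()))` prints the request descending from the file system to the miniport driver, the controller reading the data, and the result climbing back up to the application.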

Summary

The Windows storage architecture has developed over time to include several layers of abstraction. These layers are represented by kernel-mode drivers that pass I/O requests through the storage stack. A variety of storage drivers may be installed, including filter drivers, multi-path drivers, and vendor-specific device drivers. All of these drivers must be compatible with each other for I/O requests to succeed. Most storage issues arise when a driver is upgraded and another driver that it depends on is no longer compatible with it.

See the related articles on extending the Windows storage architecture to include Failover Clusters using SAN storage (2008 Failover Clustering and SAN) and iSCSI storage (Windows Failover Cluster and iSCSI Technology). These articles explore how storage solutions can be shared by multiple servers to offer high performance and fault tolerance.