Workload Security in the Age of Agents: Why MCP Is a Control Plane Risk

MCP is the case study. Workload security is the real story.

Key Takeaways:

- Once AI agents are connected to real tools, untrusted input can become an execution path, turning prompt injection from a nuisance into an operational security risk with real-world impact.

- The core challenge isn’t preventing models from being tricked, but designing workload security so agent actions are tightly constrained, least-privileged, and unable to cascade when manipulation occurs.

- Organizations rushing agents into production without maturing controls are entering a danger zone where automation becomes a new control plane, one that must be governed like privileged infrastructure, not experimental AI.

Security leaders have always had to manage “the next new thing.” The difference now is that the next new thing can take actions.

We are entering a phase where the most dangerous failures won’t start with an exploit. They’ll start with a model being convinced to press the wrong button.

That is the real shift behind the recent disclosure involving Anthropic’s Model Context Protocol (MCP) Git server. The headline may read like another vulnerability story, but the bigger lesson is architectural: once an AI system is wired into real tools, untrusted content can become an execution pathway.

This is not a standalone “AI security” problem. It is a modern workload security problem.

Why this matters now

In the last six months, I’ve watched three patterns emerge across security leadership conversations:

- Boards are asking “what’s our AI strategy?” – and expecting agents in production within quarters, not years

- Early adopters are hitting real incidents – not theoretical risks, but actual cases where agents were manipulated into accessing systems they shouldn’t

- The blast radius question has no good answer – when I ask teams “if your agent were compromised today, what could it reach?”, I get blank stares

The gap between AI adoption velocity and control maturity is wider than anything I’ve seen since the early cloud migration days. The difference is that cloud misconfigurations usually exposed data. Agent misconfigurations can trigger actions.

We need to close that gap now, before the first headline-making incident forces reactive, innovation-killing lockdowns.

Workload security is a stack, not a single control

If you zoom out, most organizations are already operating a workload security stack that looks something like this:

This stack is the map, and MCP lives in it, not above it.

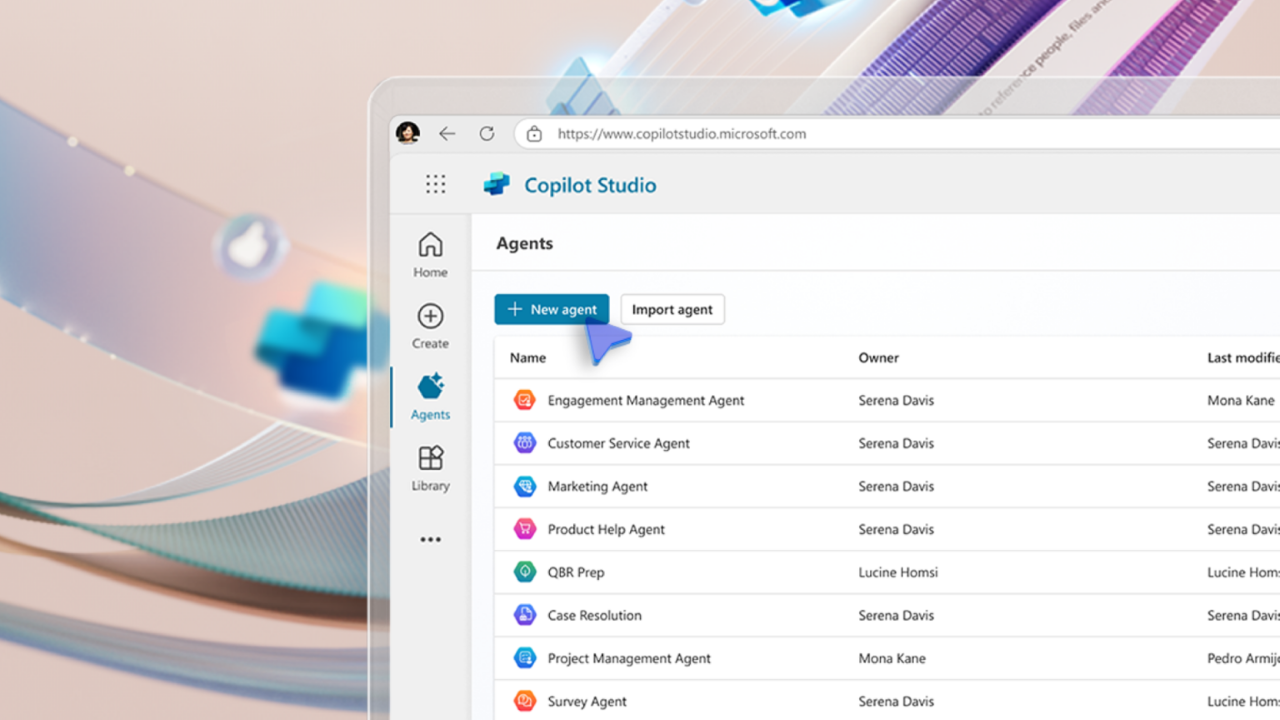

- AI Security: deployed models, agents, tools, MCP servers

- Application & Data Security: code scanning across IDE, VCS, CI/CD, cloud, SaaS

- Infrastructure Security: agent-based or agentless workload security and runtime signals

- Exposure Management: identify, assess, and reduce reachable risk across the environment

That picture matters because it highlights what changes with agents. AI and MCP may sit in the first row, but they touch all the other rows.

When a model can call tools, it becomes a new actor in the Software Development Life Cycle (SDLC) and a new automation path into the environment. In other words: a new control plane.

The most important question is not “are MCP servers safe?”

It is:

Are we designing our workload security stack so a manipulated automation path cannot turn into meaningful impact?

The MCP case study: when text becomes action

The Hacker News described three flaws in the MCP Git server that could enable arbitrary file reads or deletions and, in certain toolchains, code execution through argument and path manipulation.

The key detail is the exploitation model: prompt injection. Think of malicious instructions in a README, a poisoned issue comment, or a crafted PR description that tells the assistant to “fix” something by running a command or editing a file outside the repo.
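To make “argument and path manipulation” concrete, here is a minimal Python sketch of the two checks a Git tool wrapper needs before it acts on model-supplied values: confine paths to the repository root, and refuse values that Git would parse as options. It is not the MCP Git server’s code or its fix; the function names are hypothetical, and `Path.is_relative_to` assumes Python 3.9 or later.

```python
from pathlib import Path


def resolve_inside_repo(repo_root: str, user_path: str) -> Path:
    """Resolve a model-supplied path and refuse anything outside repo_root."""
    root = Path(repo_root).resolve()
    # Joining with an absolute path ("/etc/passwd") discards root, and
    # resolve() collapses "../" segments and follows existing symlinks,
    # so the containment check runs on the real target, not the raw string.
    candidate = (root / user_path).resolve()
    if not candidate.is_relative_to(root):
        raise ValueError(f"path escapes repository root: {user_path!r}")
    return candidate


def check_ref_argument(ref: str) -> str:
    """Reject values Git would treat as options, e.g. '--output=/tmp/x'."""
    if ref.startswith("-"):
        raise ValueError(f"refusing option-like argument: {ref!r}")
    return ref


def build_git_diff(repo_root: str, ref: str) -> list[str]:
    """Build (but do not run) an argv list for a read-only diff."""
    root = resolve_inside_repo(repo_root, ".")
    # A list argv is never passed through a shell, so shell metacharacters
    # in ref cannot start new commands; the option check covers the rest.
    return ["git", "-C", str(root), "diff", check_ref_argument(ref)]
```

In a real wrapper these checks run on every call, including ones that look routine, because injected instructions usually arrive looking routine.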

Prompt injection is not new. What is new is what happens when the model is connected to tools that operate on real systems.

The shift looks like this:

- Yesterday: untrusted text could trick a model into producing misleading output

- Today: untrusted text can trick a model into invoking tools with real permissions

When your agent can read repositories, modify files, interact with CI runners, or call cloud APIs, the boundary between “assistant” and “automation” disappears. At that point, the model’s toolchain is effectively privileged infrastructure.

Why CISOs should treat tool-integrated AI like a control plane

A simple mental model helps align executives and engineers:

- LLM output is not the decision

- Tool invocation is the decision

- Tool permissions define the consequences

This reframes how we prioritize controls.

The goal is not “prevent the model from being tricked.” Assume it will be tricked. The goal is to ensure the model cannot do high-impact things even when it is tricked.

If you want a CISO translation: this is the same reason we harden CI/CD, restrict production credentials, and segment sensitive environments. We do not rely on perfect behavior. We design so mistakes and manipulation do not scale.

Where most organizations are today

In my conversations with other security leaders, I’m seeing a clear pattern. Most organizations fall into one of three maturity stages:

Stage 1: Experimentation

- Agents deployed in development environments only

- Limited tool access, mostly read-only

- Ad-hoc approvals, no formal governance

- Risk: contained, but no path to production

Stage 2: Early Production

- Agents with real permissions in production workflows

- Tool integrations growing faster than security reviews

- Controls are reactive, not architectural

- This is the danger zone – you have operational risk without operational controls

Stage 3: Operationalized

- Agents treated as privileged infrastructure from day one

- Tool permissions designed for least privilege

- Runtime enforcement and segmentation in place

- Clear governance and blast radius containment

The gap between Stage 2 and Stage 3 is where most incidents will happen. Right now, many teams are sitting in Stage 2, moving fast because the business demands it.

The question isn’t whether you’ll deploy agents. The question is whether you’ll hit Stage 3 before you have an incident.

How MCP-style risk shows up across the workload security stack

This is where the bigger picture matters. MCP may be categorized as “AI security,” but the risk is multidimensional.

AI security

The agent can become an execution engine. Prompt injection moves from “annoying” to operationally dangerous when tool calls are enabled.

Application and data security

The agent becomes a new actor inside your SDLC. It can be pushed into pulling sensitive artifacts, modifying source, copying configuration files, or changing deployment logic. Even when code scanning is strong, automated edits can introduce unsafe changes faster than teams can review.

Infrastructure security

Tool execution often happens on developer endpoints, shared runners, or utility services. Those environments frequently hold credentials, mounts, and implicit trust. If the toolchain is not sandboxed and least-privileged, it becomes a shortcut around your controls.

Exposure management

The blast radius is defined by reachability. What repositories can the agent access? What internal endpoints can it call? What workloads can it reach east-west? If you do not constrain what the agent can reach, every new integration expands your exposure.
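Reachability is a graph question, so a small breadth-first search is enough to turn “what could this agent reach?” into a concrete list. The sketch below is a hypothetical model: every node and edge is invented for illustration, and in practice the edges would come from IAM policies, repository permissions, and network policy.

```python
from collections import deque

# Hypothetical reachability graph: an edge A -> B means "A can reach or act
# on B" (an identity it assumes, a repo it can write, a service it can call).
EDGES: dict[str, list[str]] = {
    "agent:code-assistant": ["identity:ci-bot"],
    "identity:ci-bot": ["repo:payments", "runner:shared-ci"],
    "runner:shared-ci": ["secret:deploy-key", "svc:internal-api"],
    "svc:internal-api": ["db:customers"],
    "repo:payments": [],
}


def blast_radius(start: str, edges: dict[str, list[str]]) -> set[str]:
    """Breadth-first search: every node transitively reachable from start."""
    seen: set[str] = set()
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in edges.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen
```

In this toy graph the agent never touches the customer database directly, yet `db:customers` appears in its blast radius because the shared CI runner can reach the internal API. Cutting a single edge, for example by not running agent tools on the shared runner, shrinks the result without depending on the model behaving.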

The takeaway is straightforward: agent tooling increases the number of ways actions can be triggered, and it compresses the time from influence to impact.

Agent hardening checklist

The response should be practical. Here is the checklist I would put in motion immediately, even if your organization is only experimenting.

1) Inventory agents and tools, not just models

You need a living map of:

- which assistants and agents exist

- what tools they can call

- where tool calls execute (developer machines, shared services, CI)

- what identities and scopes back those actions

If you cannot answer “what can this agent write to,” you do not yet have a defensible posture.
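Even a flat, machine-readable inventory answers that question directly. This sketch assumes a hypothetical record format with one row per agent-tool grant and scope strings of the form `resource:access`; a real inventory would be populated from IAM, OAuth grants, and agent configuration rather than typed by hand.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolGrant:
    agent: str                 # which assistant or agent
    tool: str                  # what tool it can call
    runs_on: str               # where the tool call executes
    identity: str              # which identity backs the action
    scopes: tuple[str, ...]    # hypothetical "resource:access" strings


INVENTORY = [
    ToolGrant("code-assistant", "git", "developer-laptop", "dev-oauth-token",
              ("repo:payments:read", "repo:payments:write")),
    ToolGrant("code-assistant", "filesystem", "developer-laptop", "local-user",
              ("fs:/home/dev:write",)),
    ToolGrant("triage-bot", "issues", "shared-service", "svc-triage",
              ("tracker:read",)),
]


def write_targets(agent: str, inventory: list[ToolGrant]) -> list[str]:
    """Answer 'what can this agent write to?' from the inventory."""
    return sorted(
        scope.removesuffix(":write")
        for grant in inventory
        if grant.agent == agent
        for scope in grant.scopes
        if scope.endswith(":write")
    )
```

An empty result is a meaningful answer too: `write_targets("triage-bot", INVENTORY)` returns `[]`, which is exactly the kind of statement you want to be able to make with confidence.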

2) Treat MCP servers and plugins as high-risk software supply chain components

Even “official” implementations can contain sharp edges. Operationalize that reality:

- pin versions, do not float

- patch quickly, with an explicit SLA

- track dependencies and generate SBOMs (Software Bill of Materials)

- harden runtime environments with sandboxing and filesystem isolation
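“Pin versions, do not float” is easy to check mechanically. The sketch below assumes the MCP servers and plugins are Python packages listed in a requirements-style file and treats only an exact `==` version as pinned; the package names are hypothetical. Other ecosystems need their own equivalent check.

```python
import re

# Exact pin: "name==version" (extras allowed). Ranges such as ">=", wildcard
# versions such as "==2.*", and bare names all float and are flagged.
PINNED = re.compile(r"^[A-Za-z0-9._\[\],-]+==[^\s=<>!~*,;]+$")


def unpinned(requirement_lines: list[str]) -> list[str]:
    """Return requirement specs that do not pin an exact version."""
    flagged = []
    for line in requirement_lines:
        spec = line.split("#", 1)[0].strip()  # drop trailing comments
        if spec and not PINNED.match(spec):
            flagged.append(spec)
    return flagged


REQUIREMENTS = [
    "example-mcp-git-server==1.4.2  # reviewed",
    "example-mcp-fs-server>=0.9",
    "example-fetch-tool",
    "example-helper-lib==2.*",
    "# comments and blank lines are ignored",
    "",
]
```

Run as a CI gate, a non-empty result from `unpinned(REQUIREMENTS)` fails the build, which turns the pinning policy into something enforced rather than remembered.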

3) Design for least privilege, then reduce further

Assume injection will happen, then make it boring:

- separate read tools from write tools

- constrain repo roots and mount points

- prefer short-lived credentials

- run tools in disposable sandboxes with no ambient secrets
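“No ambient secrets” has a concrete meaning at the process level: the tool should not inherit the caller’s environment variables or working directory. This minimal, POSIX-oriented sketch (the function name is hypothetical) starts each tool run from an empty environment in a throwaway directory. It removes ambient secrets only; it is not isolation by itself, which still requires a container, VM, or similar sandbox with filesystem and network restrictions.

```python
import os
import subprocess
import tempfile


def run_tool_without_ambient_secrets(argv: list[str], timeout_s: float = 30.0) -> subprocess.CompletedProcess:
    """Run one tool invocation with a scrubbed environment in a fresh temp dir."""
    # Build the environment from scratch instead of copying os.environ, so
    # tokens such as GITHUB_TOKEN or AWS_* in the parent never reach the tool.
    # Any credential the tool truly needs should be passed in explicitly
    # and be short-lived.
    clean_env = {"PATH": os.defpath}
    # The working directory exists only for this call and is deleted after it.
    with tempfile.TemporaryDirectory(prefix="agent-tool-") as workdir:
        return subprocess.run(
            argv,
            cwd=workdir,
            env=clean_env,
            capture_output=True,
            text=True,
            timeout=timeout_s,
            check=False,
        )


if __name__ == "__main__":
    os.environ["GITHUB_TOKEN"] = "example-not-a-real-token"  # simulated ambient secret
    result = run_tool_without_ambient_secrets(["env"])
    print(result.stdout)  # the child's environment does not include GITHUB_TOKEN
```

The design choice is to allowlist the environment rather than denylist known secret names: a denylist silently misses the next token someone adds to a runner.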

4) Put guardrails on tool calls, not just prompts

Prompt guidance helps, but enforceable controls help more:

- allowlist tool commands and argument patterns

- require approval for high-risk actions (delete, write, init, credential access)

- use policy checks that evaluate tool calls with context (who, what repo, what path, what change)
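Here is what an enforceable check on tool calls, rather than on prompts, can look like. This Python sketch evaluates each proposed call together with its context (who, which repo, which path) and returns allow, needs-approval, or deny. The tool names, repositories, and protected paths are hypothetical; the point is the shape of the decision, not a specific policy engine.

```python
import fnmatch
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    ALLOW = "allow"
    NEEDS_APPROVAL = "needs_approval"
    DENY = "deny"


@dataclass(frozen=True)
class ToolCall:
    principal: str  # who: the agent making the call
    tool: str       # what: the tool being invoked
    repo: str       # where: the target repository
    path: str       # where: the path inside that repository


# Hypothetical policy data.
ALLOWED_TOOLS = {"git_status", "git_diff", "git_log", "git_init", "fs_write", "fs_delete"}
HIGH_RISK_TOOLS = {"git_init", "fs_write", "fs_delete"}
REPOS_BY_PRINCIPAL = {"code-assistant": {"payments", "docs"}}
# fnmatch's "*" also matches "/", so "deploy/*" covers nested files too.
PROTECTED_PATHS = [".github/workflows/*", "deploy/*", "*.env"]


def evaluate(call: ToolCall) -> Verdict:
    if call.tool not in ALLOWED_TOOLS:
        return Verdict.DENY  # command is not on the allowlist
    if call.repo not in REPOS_BY_PRINCIPAL.get(call.principal, set()):
        return Verdict.DENY  # this agent has no business in this repo
    # Argument pattern: relative paths only, no option-like or "../" values.
    if call.path.startswith(("-", "/")) or ".." in call.path.split("/"):
        return Verdict.DENY
    if call.tool in HIGH_RISK_TOOLS:
        if any(fnmatch.fnmatchcase(call.path, p) for p in PROTECTED_PATHS):
            return Verdict.DENY  # high-risk actions on protected paths are refused outright
        return Verdict.NEEDS_APPROVAL  # a human confirms writes, deletes, and inits
    return Verdict.ALLOW
```

The model never sees this function; it only proposes calls. That matches the mental model earlier in this piece: the tool invocation is the decision, so that is where the control sits.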

5) Approve toolchains as bundles, not one component at a time

Risk emerges from combinations. A Git tool plus a filesystem tool can behave very differently than either alone. Approve bundles with a combined-risk review, then test them the way an attacker would.
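A combined-risk review can start very simply: describe each tool by its capabilities, take the union for the bundle, and flag combinations that are dangerous together. The capability labels and the two risky combinations below are hypothetical examples, not a complete taxonomy.

```python
# Hypothetical capability labels per tool.
TOOL_CAPABILITIES: dict[str, set[str]] = {
    "git": {"reads_untrusted_content", "writes_repo"},
    "filesystem": {"writes_files_outside_repo", "reads_local_secrets"},
    "web_fetch": {"reads_untrusted_content", "network_egress"},
    "issue_tracker": {"reads_untrusted_content"},
}

# Combinations that are riskier together than apart, with a reason for each.
RISKY_COMBINATIONS: list[tuple[str, frozenset[str]]] = [
    ("injected text can trigger writes outside the repo",
     frozenset({"reads_untrusted_content", "writes_files_outside_repo"})),
    ("local secrets can be read and sent out",
     frozenset({"reads_local_secrets", "network_egress"})),
]


def review_bundle(tools: list[str]) -> list[str]:
    """Return the reasons a bundle of tools needs extra scrutiny."""
    # Unknown tools raise KeyError, so an unreviewed tool cannot join a bundle silently.
    caps: set[str] = set().union(*(TOOL_CAPABILITIES[tool] for tool in tools))
    return [reason for reason, needed in RISKY_COMBINATIONS if needed <= caps]
```

Neither the Git tool nor the filesystem tool is flagged on its own; `review_bundle(["git", "filesystem"])` flags the pair, which mirrors the point above.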

Questions to ask your team this week

These are the conversation starters that will tell you whether you have a handle on this:

For your security team:

- Can we map every agent and assistant to the specific repositories, APIs, and systems it can access?

- What’s our current approval process for new AI tool integrations? Is it keeping pace with deployment velocity?

- If an agent were manipulated today, what’s our realistic blast radius? What could it reach that it shouldn’t?

For your engineering leadership:

- How are we tracking which teams are deploying agents with tool access?

- What’s our rollback plan if we discover an agent has been compromised?

- Are we designing agent permissions the same way we design service account permissions?

For your executive team:

- What’s our risk appetite for agents in production workflows?

- Who owns the decision when an agent needs access to production systems?

- How do we balance innovation velocity with blast radius containment?

If you can’t answer these questions confidently, you’re probably in Stage 2 maturity, which means you’re at risk.

Where this intersects with cloud security and CNSF

This is also where cloud security programs have to mature.

Many security stacks still assume the perimeter is the primary battleground. But most modern impact happens inside cloud environments: between workloads, across clusters, and along east-west paths that change constantly. Add agents to the mix, and the question becomes:

If an agent is manipulated into doing something unsafe, can that single action turn into uncontrolled lateral movement across workloads or uncontrolled access to sensitive systems?

This is the mindset behind the concept of a Cloud Native Security Fabric (CNSF). From a CISO perspective, the goal is simple: enforce workload-to-workload trust boundaries at runtime, inside the cloud fabric where workloads actually communicate, so blast radius stays small even when mistakes happen.

In practical terms, that means designing for runtime guardrails:

- explicit workload communication paths, not implicit trust

- segmentation that keeps pace with ephemeral infrastructure

- consistent enforcement close to runtime, where workloads actually communicate
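Explicit communication paths and segmentation that keeps pace with ephemeral infrastructure can be illustrated with a default-deny check keyed on workload labels instead of IP addresses or instance names. This is a toy model with hypothetical labels and ports, not how any particular CNSF or network-policy product works.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str                 # ephemeral instance name; changes on every deploy
    labels: frozenset[str]    # stable identity, e.g. "app=agent-tools"


# Explicit allowed paths keyed by labels, so rules still apply when
# instances are replaced. Hypothetical labels and ports.
ALLOWED_FLOWS: set[tuple[str, str, int]] = {
    ("app=agent-tools", "app=git-proxy", 443),
    ("app=git-proxy", "app=source-control", 443),
}


def is_allowed(src: Workload, dst: Workload, port: int) -> bool:
    """Default deny: a flow passes only if an explicit rule matches it."""
    return any(
        s in src.labels and d in dst.labels and p == port
        for s, d, p in ALLOWED_FLOWS
    )
```

Because the rule matches labels, a replacement instance such as `agent-tools-a41e` gets the same narrow path the moment it starts, and nothing else: no new discovery, no lateral path to the database.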

Success looks like this: a manipulated agent can’t discover new paths, can’t expand privileges, and can’t move laterally.

That is the architectural conclusion:

Agent tooling makes it easier to trigger actions. Workload security has to ensure those actions cannot cascade.

The bottom line

The organizations that will win the next decade aren’t the ones with the most sophisticated AI models. They’re the ones that figure out how to let models take actions without letting those actions cascade out of control.

That’s not an AI problem. That’s a workload security problem. And it needs solving now.

This MCP disclosure should not trigger panic, and it should not slow down innovation. It should trigger discipline.

Agentic workflows are coming fast. The organizations that succeed will not be the ones that ban them or blindly adopt them. They will be the ones that treat them like privileged infrastructure, constrain execution, enforce least privilege, and backstop everything with runtime guardrails that assume mistakes and manipulation are inevitable.

The gap between your AI adoption velocity and your control maturity will define whether your next agent deployment is a competitive advantage or a security incident.

Which side of that gap are you on?