Top 5 Reasons to Deploy Windows Server 2016

In this article I will explain why I think that you should consider deploying the now generally available Windows Server 2016 (WS2016) in your network.

Smaller and Faster

Every version of Windows Server makes strides in improving the efficiency of the operating system (OS). Windows Server 2008 (WS2008) introduced a new installation option called Server Core; Microsoft removed the Windows from Windows Server and left us with a server OS whose only local interface was a command prompt (PowerShell arrived on Server Core with Windows Server 2008 R2). This smaller installation required less RAM, had a smaller disk footprint, and presented a smaller attack surface.

Windows Server 2012 continued this movement, with old kernel code being reworked or removed.

In WS2016 we still get Server Core as an installation option, but we also get something newer and smaller, with an increased emphasis on remote management and automation. Nano Server (an installation option, not an edition) doesn’t just remove the GUI, it removes the local UI completely! Nano Server is a headless server OS with the smallest disk requirement I can remember seeing in Windows Server, and it consumes less than 200 MB of RAM when sitting idle!

If you want to run Hyper-V or Storage Spaces/Direct then you can use Nano Server, but where I see Nano Server being best used is for born-in-the-cloud applications, where you want to minimize resource usage, OS patching, and security vulnerabilities the most.

Improved Service Availability

A lot of the improvements in WS2016 were driven by improvements in Azure. Azure, Microsoft’s public cloud, has a lot of service-level agreements (SLAs) that dictate guaranteed uptime, so Microsoft is pretty sensitive to the issues that also affect us:

- Transient storage issues that crash virtual machines.

- Network glitches that last for seconds, but create minutes of downtime when virtual machines are failed over within a Hyper-V cluster.

- OS upgrades that require painful cluster-to-cluster migrations if you want a newer version of Hyper-V.

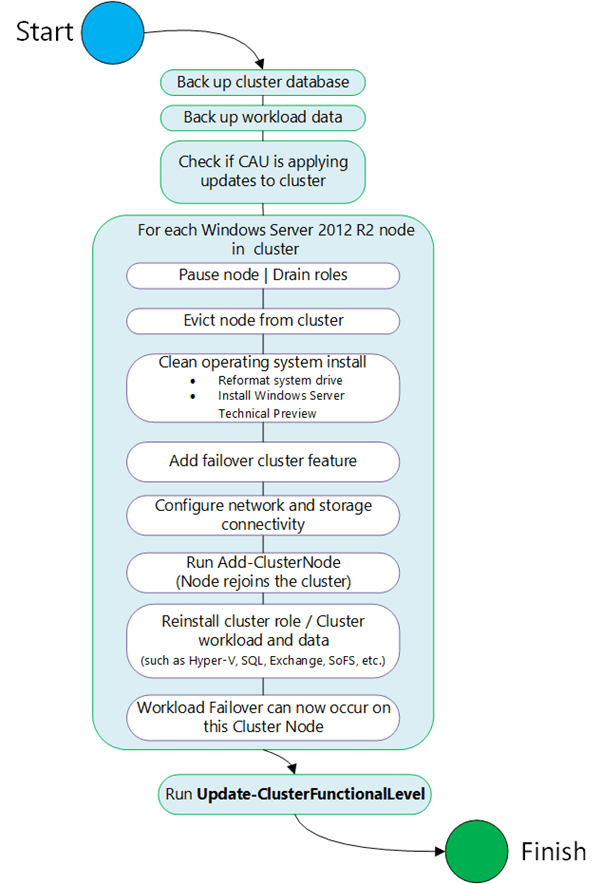

Microsoft built in several new features to improve service uptime. The first is rolling cluster upgrades, allowing us to painlessly upgrade Hyper-V clusters from WS2012 R2 to WS2016. A cluster can temporarily run both versions of Hyper-V, with virtual machines capable of live migrating or failing over across the mixed-level cluster.
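To make the rolling upgrade idea concrete, here is a minimal Python sketch of the sequence: drain one node, upgrade it, and move on, with the cluster running in mixed mode in between. It is a toy model only; `Node`, `is_mixed`, and `rolling_upgrade` are hypothetical names invented for this illustration and are not part of any Microsoft tooling.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """A hypothetical Hyper-V cluster node (illustration only)."""
    name: str
    os_version: str                      # "WS2012R2" or "WS2016"
    vms: list = field(default_factory=list)


def is_mixed(nodes):
    """True while the cluster runs more than one OS version."""
    return len({n.os_version for n in nodes}) > 1


def rolling_upgrade(nodes, target="WS2016"):
    """Upgrade one node at a time, moving its VMs to other nodes first.

    Returns a list of (node name, cluster is mixed after this step).
    """
    steps = []
    for node in nodes:
        if node.os_version == target:
            continue
        others = [n for n in nodes if n is not node]
        if not others:
            raise RuntimeError("need at least two nodes to drain one")
        # Drain: spread the VMs across the remaining nodes (models live migration).
        for i, vm in enumerate(list(node.vms)):
            others[i % len(others)].vms.append(vm)
        node.vms = []
        # The node is now empty, so it can be reinstalled with the new OS.
        node.os_version = target
        steps.append((node.name, is_mixed(nodes)))
    return steps


if __name__ == "__main__":
    cluster = [Node("hv1", "WS2012R2", ["vm1", "vm2"]),
               Node("hv2", "WS2012R2", ["vm3"])]
    for name, mixed in rolling_upgrade(cluster):
        print(name, "upgraded; mixed-mode cluster:", mixed)
```

The point of the sketch is that no virtual machine is ever without a host: the cluster stays in service throughout, and it is only mixed-mode until the last node is done.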

A True Hybrid Cloud

Microsoft argues that a hybrid cloud is more than just a network connection between a customer’s LAN and a cloud, such as Azure. Hyper-V is the common foundation of on-premises private clouds, hosted public/private clouds, and Azure. Software-defined storage and networking abstract the complications of physical infrastructure. In terms of deployment and management, the Azure Portal and Azure Resource Manager (ARM) will be made available to customers via a new product called Microsoft Azure Stack, expected in mid-2017. With it, developers and operators will be able to use the same tools, the same architectures, and the same templates (solutions and virtual machines) no matter where they choose to deploy new services.

Secure & Trusted

If security is important to you, then you are probably already planning your deployment of WS2016. Some features have made their way over from Windows 10 Enterprise. Credential Guard isolates the secrets held by LSASS in a special Hyper-V partition called Virtual Secure Mode (VSM), protecting stored credentials, including those of administrators, from malware behind a hardware-supported security boundary. Device Guard protects critical parts of the kernel against rogue software, ensuring that what is running is what is meant to be running.

Those who are running Hyper-V in a sensitive environment can deploy some very interesting functionality. A Host Guardian Service (HGS) can be deployed into an isolated environment; this enables a Hyper-V feature called shielded virtual machines. A host is checked for health (for example, rootkit malware) when it boots up, and virtual machines are only allowed to start on or live migrate to healthy and authorized hosts; this prevents virtual machines from being run in unauthorized or compromised environments. Shielding can also block KVP exchange (a host-guest integration) and console access to a virtual machine. Owners of virtual machines might be sensitive to unwanted or unauthorized peeking by administrators; virtual TPM allows the tenant to encrypt their virtual machine’s disks using BitLocker so that no one without guest admin rights can peek at the OS, programs, or data in the virtual hard disk files.
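The gating rule described above (a shielded virtual machine may only start on, or migrate to, a host that is both authorized and healthy) can be sketched in a few lines of Python. This is a conceptual model under my own assumptions, not how HGS is implemented; `Host`, `may_run_shielded_vm`, and `place_vm` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Host:
    """A hypothetical Hyper-V host as seen by a guardian service."""
    name: str
    authorized: bool   # the host is known to the guardian service
    healthy: bool      # the host passed its boot-time health check


def may_run_shielded_vm(host: Host) -> bool:
    """Both conditions must hold; either one failing blocks the VM."""
    return host.authorized and host.healthy


def place_vm(vm_name: str, destination: Host) -> str:
    """Model a start or live migration; refuse untrusted destinations."""
    if not may_run_shielded_vm(destination):
        raise PermissionError(
            f"{destination.name} is not trusted to run {vm_name}")
    return destination.name


if __name__ == "__main__":
    trusted = Host("hv1", authorized=True, healthy=True)
    print(place_vm("payroll-vm", trusted))
```

Note that the check is made against the destination, so copying the virtual hard disk files to an unknown host does not help an attacker in this model: the virtual machine simply will not start there.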

Solving Storage Challenges

Hurricane Sandy made quite an impact in 2012 on the U.S. and on Microsoft; the software maker noted that many SAN customers didn’t have DR solutions. Microsoft asked why this was, and the affected customers said that the licensing to enable replication for their vendor’s SAN was too expensive. WS2016 adds Storage Replica (SR), enabling you to replicate volumes at a block level from one storage system to another (of the same or a different type) using synchronous (short distance, no data loss) or asynchronous (longer distances, small data loss) replication. The solution is fully supported by Failover Clustering, so it allows for some interesting stretch-cluster designs. Admittedly, some storage systems (such as those by Dell) include free or very affordable replication licensing, so SR might not be attractive to those customers at first (but wait to see what Azure might offer later). There are horror stories, however, with other brands of SAN.
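The trade-off between the two replication modes is easier to see in a small model. The Python sketch below is my own illustration, not Storage Replica code: `ReplicatedVolume` and its methods are hypothetical. A synchronous write is only complete once both copies hold the block, while an asynchronous write is queued and shipped later.

```python
class ReplicatedVolume:
    """Toy model of block replication between two sites (illustration only)."""

    def __init__(self, synchronous: bool):
        self.synchronous = synchronous
        self.primary = []     # blocks committed at the primary site
        self.secondary = []   # blocks committed at the replica site
        self.pending = []     # async only: written locally, not yet shipped

    def write(self, block):
        self.primary.append(block)
        if self.synchronous:
            # The write completes only after the replica also has the block.
            self.secondary.append(block)
        else:
            # The write completes immediately; replication happens later.
            self.pending.append(block)

    def ship_pending(self):
        """Send queued blocks to the replica (async catch-up)."""
        self.secondary.extend(self.pending)
        self.pending.clear()

    def fail_primary(self):
        """Lose the primary site now; return the blocks that were lost."""
        lost = list(self.pending)
        self.pending.clear()
        self.primary.clear()
        return lost


if __name__ == "__main__":
    vol = ReplicatedVolume(synchronous=False)
    vol.write("a")
    vol.ship_pending()
    vol.write("b")
    print("lost on failure:", vol.fail_primary())
```

In the synchronous case nothing is ever pending, which is where "no data loss" comes from; the price is that every write waits on the link to the other site, hence the short distances.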

The big news in WS2016 storage is Storage Spaces Direct, the newest version of Microsoft’s software-defined storage system, which was introduced in WS2012 and improved in WS2012 R2. This is a longer conversation, but I’ll keep it short. You can deploy a hyper-converged infrastructure using WS2016 Hyper-V without giving more than $60,000 to some hardware company for each Hyper-V node, and get a better-performing and more stable solution than many have offered in recent years. For example, DataON recently announced that it hit 2.4 million IOPS on a 4-node cluster. If you want simpler, more affordable, and better-performing Hyper-V/cloud storage, then WS2016 has something to offer you.

![Storage Resiliency prevents Hyper-V virtual machine crashing during transient storage outages [Image Credit: Microsoft]](https://petri-media.s3.amazonaws.com/2016/10/VM20Storage20Resiliency20Workflow1.png)

![The hybrid cloud, according to Microsoft [Image Credit: Microsoft]](https://petri-media.s3.amazonaws.com/2016/10/image-41.png)

![The HGS authorizing hosts to run Hyper-V Shielded Virtual Machines [Image Credit: Microsoft]](https://petri-media.s3.amazonaws.com/2016/10/Workflow1.png)