Results of Aggregating Azure Premium Disks

In this article, I’ll show you how performance improves when you add data disks based on Premium Storage to an Azure virtual machine.

In a previous article, I shared the performance gains that I recorded while adding data disks based on Standard Storage. My tests supported Microsoft’s claims that you can get linear growth by adding HDD-based data disks. My findings contradicted many blog posts out there, which claimed that Azure administrators got diminishing results by adding data disks. Satisfied that I squashed those claims with Standard Storage, I decided to repeat the tests using the trickier-to-test Premium Storage.

Premium Storage Refresher

Microsoft allows you to deploy OS and data disks onto shared SSD back-end storage using Azure Premium Storage. This provides virtual machines with two benefits:

- Lower latency

- Much higher IOPS

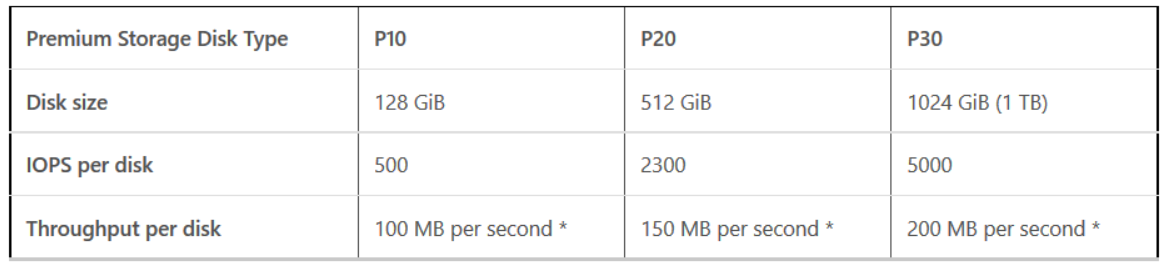

There are three specs of disk that you can deploy in Premium Storage. Note that you are not restricted to these sizes; a deployed disk is rounded up to determine the performance and pricing of that disk.
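The rounding rule can be sketched in a few lines of Python. This is only an illustration: the P10 and P20 figures below are my recollection of the specifications Microsoft published at the time (only the P30's 5,000 IOPS appears in this article), and `tier_for_size` is a hypothetical helper, not an Azure API.

```python
# Premium Storage tiers: (name, size in GB, IOPS, throughput in MB/s).
# Assumed figures from the published specs of the time; verify before relying on them.
TIERS = [
    ("P10", 128, 500, 100),
    ("P20", 512, 2300, 150),
    ("P30", 1024, 5000, 200),
]


def tier_for_size(size_gb):
    """Round a requested disk size up to the smallest tier that can hold it."""
    for name, tier_gb, iops, mbps in TIERS:
        if size_gb <= tier_gb:
            return name, iops, mbps
    raise ValueError(f"{size_gb} GB is larger than the biggest tier")


for size in (100, 400, 1023):
    print(size, "GB ->", tier_for_size(size))
```

Under these assumptions, a 1023 GB disk such as the ones used later in this article is treated as a P30.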

You can choose to use Premium Storage disks with DS- and GS-Series virtual machines. There are two things to note about going up the Azure virtual machine specification ladder:

- The higher the spec, the more data disks are supported.

- Depending on your data disk design, a virtual machine’s assigned Premium Storage might exceed the performance potential (IOPS and throughput) of the virtual machine’s specification. You can see how performance potential increases as you increase the spec of DS-Series virtual machines in the table below.

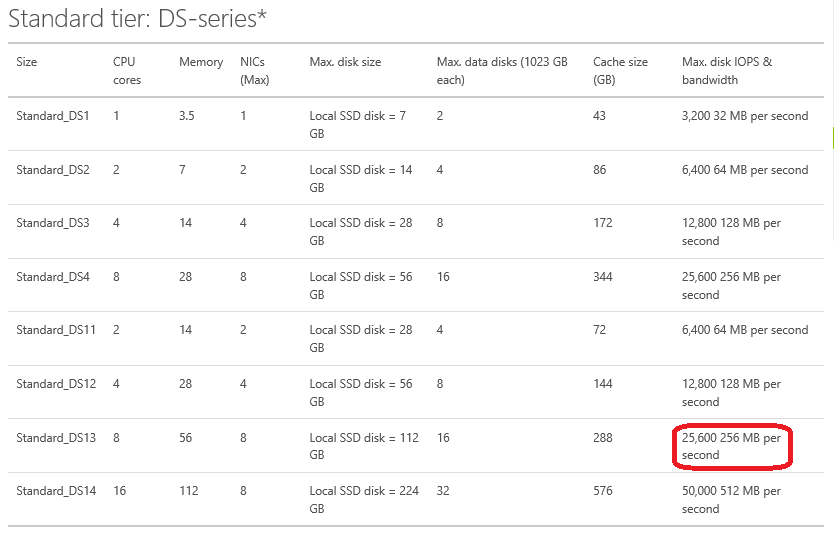

Below you can see the final configuration of the test machine with 4 x P30 Premium Storage data disks:

The Test Lab

For the most part, the tests that I ran for this article are very similar to those I ran for Standard Storage. I deployed a DS-Series virtual machine with the OS disk stored on Standard Storage. I ran four different tests based on the quantity of assigned data disks (caching disabled):

- 1 x 1023 GB P30 data disk.

- 2 x 1023 GB P30 data disks, aggregated using Storage Spaces.

- 3 x 1023 GB P30 data disks, aggregated using Storage Spaces.

- 4 x 1023 GB P30 data disks, aggregated using Storage Spaces.

Storage Spaces was used for the 2-to-4 data disk configurations to aggregate storage space and performance; a configuration of simple virtual disks with 64 KB interleaves was used. In all tests, the single data volume was formatted with NTFS and a 64 KB allocation unit size. This architecture aligns the storage through the various layers.
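To see why matching the 64 KB interleave with a 64 KB allocation unit matters, here is a simplified model of a striped (simple) layout. It is not how Storage Spaces is actually implemented; it assumes one column per data disk, and `locate`, `disks_touched`, and `volume_offset` are hypothetical names used for illustration only.

```python
INTERLEAVE = 64 * 1024  # Storage Spaces interleave used in the tests
CLUSTER = 64 * 1024     # NTFS allocation unit size used in the tests


def locate(offset, columns, interleave=INTERLEAVE):
    """Map a byte offset in the striped space to (disk index, offset on that disk)."""
    stripe_unit = offset // interleave
    disk = stripe_unit % columns
    row = stripe_unit // columns
    return disk, row * interleave + offset % interleave


def disks_touched(cluster_index, columns, volume_offset=0):
    """Return the set of disks that hold the first and last byte of one cluster."""
    start = volume_offset + cluster_index * CLUSTER
    end = start + CLUSTER - 1
    return {locate(start, columns)[0], locate(end, columns)[0]}


# Aligned: every cluster lives on exactly one disk, and clusters rotate across disks.
print([sorted(disks_touched(i, 4)) for i in range(5)])
# Misaligned by 4 KB: a single cluster now straddles two disks.
print(sorted(disks_touched(0, 4, volume_offset=4096)))
```

In this model, an aligned cluster never straddles two disks, so a single small read is served by one disk while consecutive clusters spread evenly across all of them.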

The key difference between the Premium Storage and Standard Storage tests was the specification of virtual machine that was used. I could have used a DS2 machine, but that would have limited IOPS to 6,400. Each P30 should add 5,000 IOPS, so a DS2 would have capped the results as soon as a second data disk was added. Instead, I used a DS13 machine. Note that memory and processor were barely used in these tests; the resources of a DS2 would have been more than enough.
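The sizing logic amounts to taking the lower of the virtual machine's cap and the sum of the disks' IOPS. The sketch below assumes a DS13 cap of 25,600 IOPS, which is the figure I recall from Microsoft's published specifications rather than something stated in this article; the DS2 cap and the P30 figure come from the text above. `expected_iops` is a hypothetical helper.

```python
P30_IOPS = 5000  # per-disk figure quoted in the article

# DS2 cap is from the article; the DS13 cap is an assumed value.
VM_IOPS_CAP = {"DS2": 6400, "DS13": 25600}


def expected_iops(vm, disks):
    """Best-case IOPS: the sum of the disks, limited by the VM's own cap."""
    return min(VM_IOPS_CAP[vm], disks * P30_IOPS)


for disks in range(1, 5):
    print(disks, "disk(s):",
          "DS2 =", expected_iops("DS2", disks),
          "DS13 =", expected_iops("DS13", disks))
```

With these numbers, the DS2 would flatten out at 6,400 IOPS from the second disk onward, which would have looked like diminishing returns even though the disks themselves were not the bottleneck.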

The Stress Test Tool

I used Microsoft’s free DiskSpd to conduct the stress tests, while observing the LogicalDisk\Disk Reads/sec counter in Performance Monitor. The results of each test were produced by DiskSpd.

Diskspd.exe -b4K -d60 -h -o8 -t4 -si -c5000M e:\io.dat

The test stressed the data volume (E:) with 4K read IOPS for 60 seconds. I could have used 64K or 256K reads, or used read/write tests, but most storage specs are based on 4K IOPS, so that’s what I decided to use.
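For readers who want to vary the parameters, the command can be assembled from named values. The flag descriptions in the comments reflect my reading of the DiskSpd documentation and should be checked against the version you use; `diskspd_args` and `throughput_mib_s` are hypothetical helpers, and the script only prints the command rather than running it.

```python
def diskspd_args(block="4K", seconds=60, outstanding=8, threads=4,
                 file_size="5000M", target=r"e:\io.dat"):
    """Build the DiskSpd command line used in the article as an argument list."""
    return [
        "Diskspd.exe",
        f"-b{block}",        # I/O block size
        f"-d{seconds}",      # test duration in seconds
        "-h",                # disable software caching and hardware write caching
        f"-o{outstanding}",  # outstanding I/O requests per thread
        f"-t{threads}",      # threads per target file
        "-si",               # sequential I/O, threads share one interlocked offset
        f"-c{file_size}",    # create a test file of this size
        target,              # no -w flag, so the default of 100% reads applies
    ]


def throughput_mib_s(iops, block_bytes=4096):
    """Convert an IOPS figure at a given block size into MiB per second."""
    return iops * block_bytes / (1024 * 1024)


print(" ".join(diskspd_args()))
print("I/Os in flight:", 8 * 4)
print("MiB/s at 20,000 IOPS:", throughput_mib_s(20000))
```

At 4K, even 20,000 IOPS moves less than 80 MiB per second, which suggests that a test of this shape exercises the IOPS limit rather than the bandwidth limit.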

1 Data Disk

I deployed a single simple NTFS volume onto the single Azure Premium Storage data disk. I ran DiskSpd and observed that the disk was, at times, offering more than the guaranteed 5,000 IOPS. DiskSpd recorded an average of over 5,098 IOPS.

2 Data Disks

The previous data configuration was erased at the software layer and a second data disk was added. This, in theory, should give us 10,000 IOPS (2 x 5,000). Many third-party blogs claim this won’t happen, so I was keen to see what I would find with a correctly designed and aligned storage system. I deployed Storage Spaces and ran DiskSpd. The test returned a result of over 10,200 IOPS, exceeding what Microsoft guarantees by just over 200 IOPS.

3 Data Disks

How will a machine with three data disks behave? If you expect non-linear improvement, then you’ll expect things to start falling apart at three or more data disks. Once again, I wiped the storage configuration, added a third data disk, and reconfigured Storage Spaces to include all three data disks. I ran DiskSpd again, and the machine achieved over 15,277 IOPS, which was more than the guaranteed 15,000 IOPS.

4 Data Disks

Once again, the storage system was dismantled and rebuilt with a fourth Premium Storage data disk. Now the 4 x P30 disks provided the machine with a 3.99 TB data volume on SSD-based storage with an alleged 20,000 IOPS. DiskSpd stressed the volume and came back with … 20,069 IOPS.

Summary

The chart below shows that adding Azure Premium Storage data disks can provide predictable, linear growth in IOPS; each P30 disk added in excess of the Microsoft-guaranteed 5,000 IOPS. This clearly contradicts some comments and blog posts that claim that assigning additional data disks provides diminishing performance gains. Keep in mind that:

- I used a carefully aligned Storage Spaces configuration

- 4K read IOPS were used, as is done by most storage manufacturers

Obviously, results will vary with, say, 256K reads/writes, which would be common for SQL Server workloads, but that would be true of all storage subsystems.
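The linearity claim is easy to check against the four results reported above. The short sketch below uses only the IOPS figures from this article and the 5,000 IOPS per-disk guarantee; `ratio_to_guarantee` is a hypothetical helper name.

```python
GUARANTEED_PER_DISK = 5000

# Average 4K read IOPS reported by DiskSpd for 1 to 4 data disks.
measured = {1: 5098, 2: 10200, 3: 15277, 4: 20069}


def ratio_to_guarantee(disks, iops):
    """Measured IOPS as a fraction of the guaranteed figure for that disk count."""
    return iops / (disks * GUARANTEED_PER_DISK)


for disks, iops in measured.items():
    print(f"{disks} disk(s): {iops} IOPS, "
          f"{iops / disks:.0f} per disk, "
          f"{ratio_to_guarantee(disks, iops):.1%} of the guarantee")
```

Every configuration lands at or slightly above 100% of the guaranteed figure, which is what linear scaling looks like in this data set.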

The only questions that I have left are: what sort of results might I get with a GS5 virtual machine that has been assigned 64 x 1023 GB P30 data disks, and can my MSDN Azure benefit subscription handle the costs of that test?

![The performance of a single Premium Storage data disk [Image credit: Aidan Finn]](https://petri-media.s3.amazonaws.com/2016/01/1PremiumDataDisk.png)

![The performance of two Premium Storage data disks [Image credit: Aidan Finn]](https://petri-media.s3.amazonaws.com/2016/01/2PremiumDataDisks.png)

![The performance of three Premium Storage data disks [Image credit: Aidan Finn]](https://petri-media.s3.amazonaws.com/2016/01/3PremiumDataDisks.png)

![The performance of four Premium Storage data disks [Image credit: Aidan Finn]](https://petri-media.s3.amazonaws.com/2016/01/4PremiumDataDisks.png)

![The linear growth of IOPS by adding Azure Premium Storage data disks to a virtual machine [Image credit: Aidan Finn]](https://petri-media.s3.amazonaws.com/2016/01/4KReadIOPSAzurePremiumStorageChart.png)