Performance Results of Aggregating Standard Azure Disks

In a previous post, I discussed why you might use more than one data disk to store data in Azure. This approach lets you exceed the limit of 1023 GB per volume, and it provides a multiplier effect for performance. A reader commented on one of my posts that the multiplier effect wasn’t quite so clean as Microsoft might have us believe, and there are more than a few bloggers out there with the evidence to back up that statement. I decided that I needed to test this for myself, tuning the disks and Storage Spaces to match the stress test tool that I would be using.

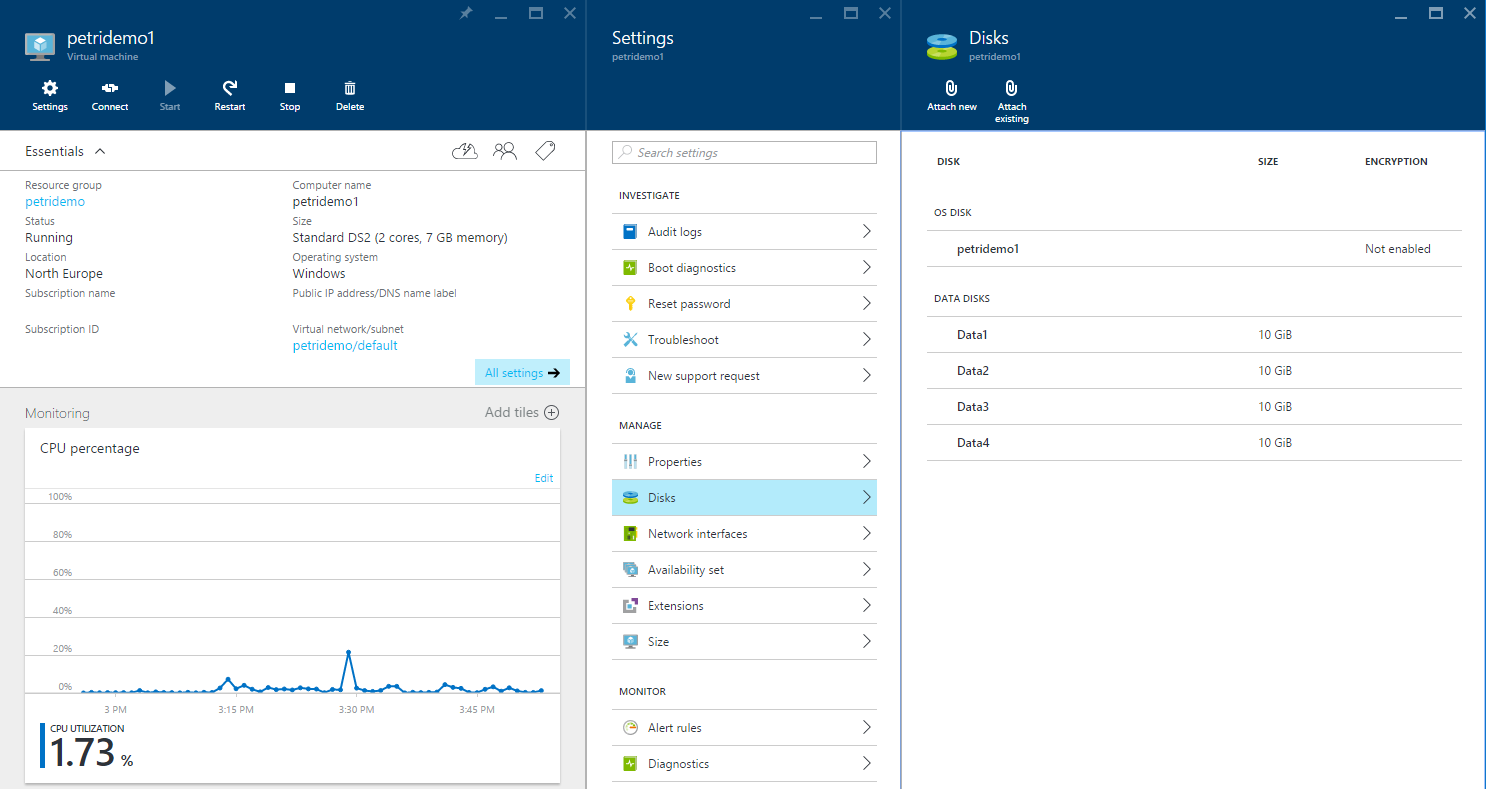

The Test Lab

I deployed a DS2 virtual machine using Azure Resource Manager in North Europe. This machine runs on an Intel Xeon processor host with two virtual processors and 7 GB RAM, of which about 1.7 GB was in use.

I assigned several Standard Storage data disks with no caching to this machine to create four different tests:

- 1 data disk

- 2 data disks, aggregated using Storage Spaces and a single virtual disk/volume.

- 3 data disks, aggregated using Storage Spaces and a single virtual disk/volume.

- 4 data disks, aggregated using Storage Spaces and a single virtual disk/volume.

Each data disk was deployed into a different Azure Storage account to ensure that no storage account could be a bottleneck on the scalability of performance.

Storage Spaces was used to aggregate multiple data disks; a configuration of simple virtual disks with 64 KB interleaves was used. In all tests, the single data volume was formatted with NTFS and a 64 KB allocation unit size.

I used Microsoft’s free DiskSpd utility to run the stress tests, while watching the Logical Disk counter Disk Reads/sec in Performance Monitor. The results reported in this post were produced by DiskSpd.

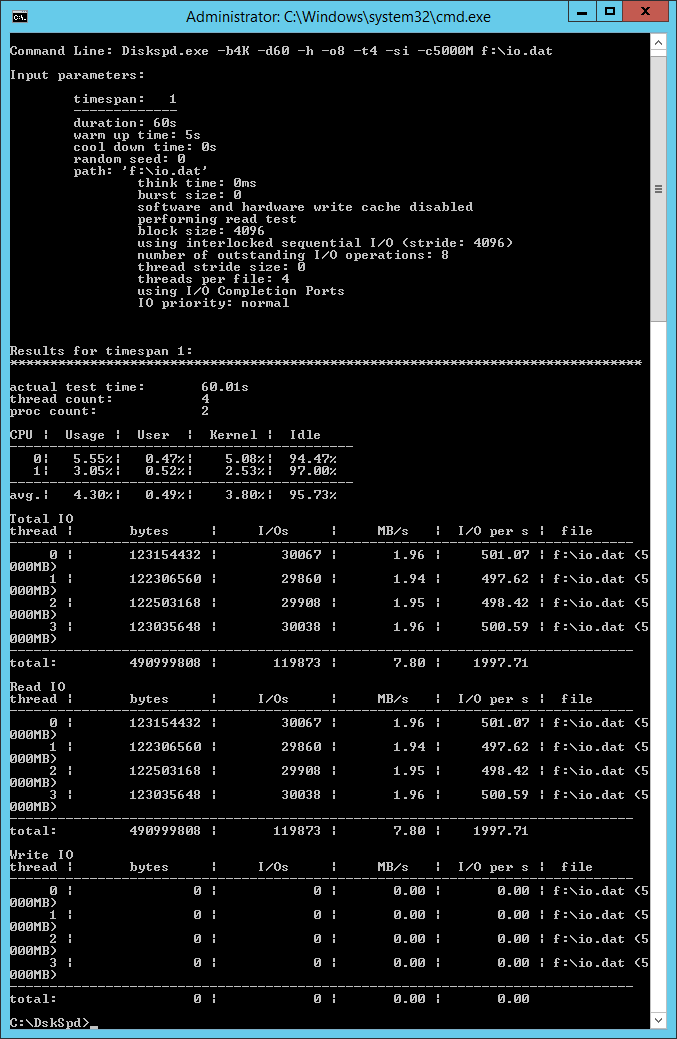

Diskspd.exe -b4K -d60 -h -o8 -t4 -si -c5000M f:\io.dat
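For readers who don’t use the tool every day, the switches in the command above can be spelled out in a short sketch. The Python helper below is hypothetical (`build_diskspd_args` is an illustrative name, not part of DiskSpd); it only assembles the same argument list, and the comments describe each switch as documented for DiskSpd. Because no `-w` switch is given, the test is 100 percent reads.

```python
def build_diskspd_args(target, block="4K", seconds=60,
                       outstanding=8, threads=4, file_size="5000M"):
    """Assemble the DiskSpd command line used in this post (illustrative helper)."""
    return [
        "Diskspd.exe",
        f"-b{block}",        # block size of each I/O (4 KB here)
        f"-d{seconds}",      # test duration in seconds
        "-h",                # disable software caching and hardware write caching
        f"-o{outstanding}",  # outstanding I/O requests per thread
        f"-t{threads}",      # worker threads per target file
        "-si",               # sequential I/O, threads share one interlocked offset
        f"-c{file_size}",    # create a test file of this size
        target,              # path of the test file
    ]


if __name__ == "__main__":
    # Print the command exactly as it would be typed at a prompt.
    print(" ".join(build_diskspd_args(r"f:\io.dat")))
```

Keeping the parameters in one place like this makes it easier to change a single setting between runs and compare the results.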

Note that 64 KB is a constant in the configuration:

- 64 KB interleave in Storage Spaces

- 64 KB allocation unit size in the volume format

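This configuration lets us optimize the test by keeping the 4 KB reads aligned: 64 KB is an exact multiple of 4 KB, so each read falls entirely within one interleave and one allocation unit.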
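The alignment argument can be checked with a little arithmetic. The sketch below uses a simplified model of a simple (striped) virtual disk, assuming data is laid out round-robin across the disks in 64 KB interleaves and that every read starts on a 4 KB boundary. The function names are illustrative and the model is an assumption, not how Storage Spaces is actually implemented.

```python
KB = 1024
INTERLEAVE = 64 * KB   # Storage Spaces interleave used in this post
READ_SIZE = 4 * KB     # block size of the DiskSpd test


def column_for_offset(offset, columns, interleave=INTERLEAVE):
    """Disk (column) that holds a byte offset in the simplified striping model."""
    return (offset // interleave) % columns


def crosses_interleave_boundary(offset, length, interleave=INTERLEAVE):
    """True if a read of `length` bytes at `offset` spans two interleaves."""
    first = offset // interleave
    last = (offset + length - 1) // interleave
    return first != last


if __name__ == "__main__":
    # Aligned 4 KB reads never straddle a 64 KB interleave.
    offsets = range(0, 1024 * KB, READ_SIZE)
    print(any(crosses_interleave_boundary(o, READ_SIZE) for o in offsets))
    # A misaligned 4 KB read that starts 2 KB before a boundary does.
    print(crosses_interleave_boundary(62 * KB, READ_SIZE))
    # With two disks, consecutive interleaves alternate between them.
    print([column_for_offset(i * INTERLEAVE, 2) for i in range(4)])
```

In this model, an aligned 4 KB read is always served by exactly one disk, while a misaligned read that spans two interleaves would touch two disks for a single request.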

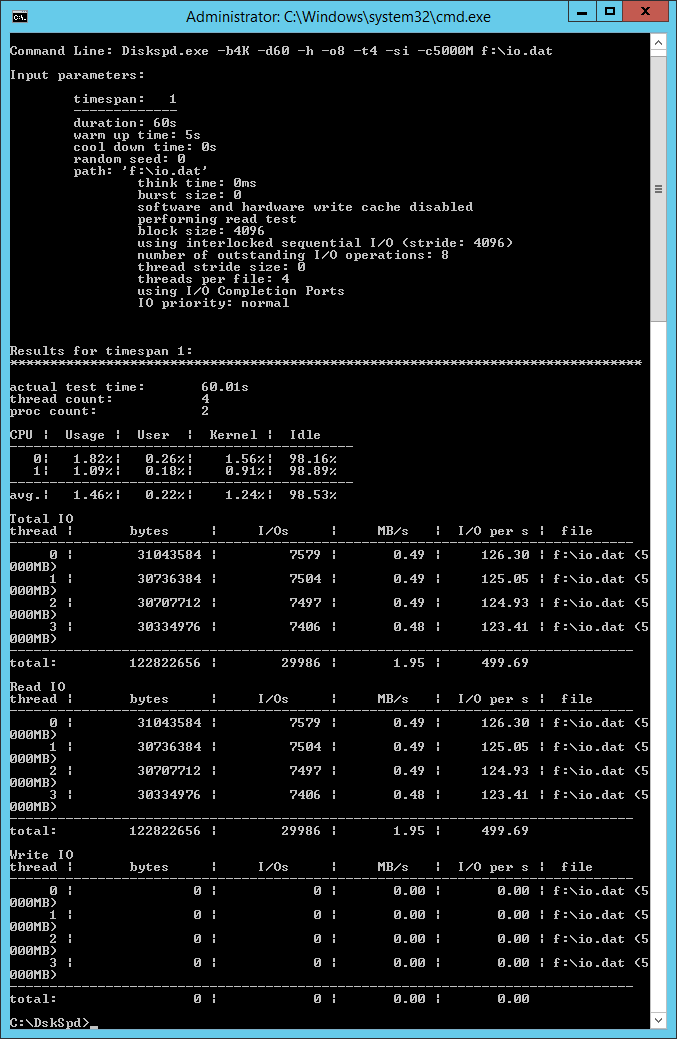

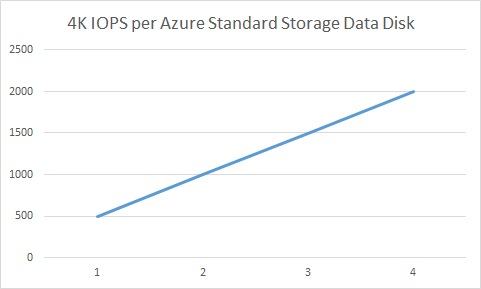

Single Data Disk

A single Standard Storage data disk was assigned to the virtual machine and formatted as F: as detailed above. DiskSpd was executed and produced 499.69 IOPS, almost the 500 IOPS that a Standard Storage data disk should provide. I can accept that, and it means that my baseline test should be good.

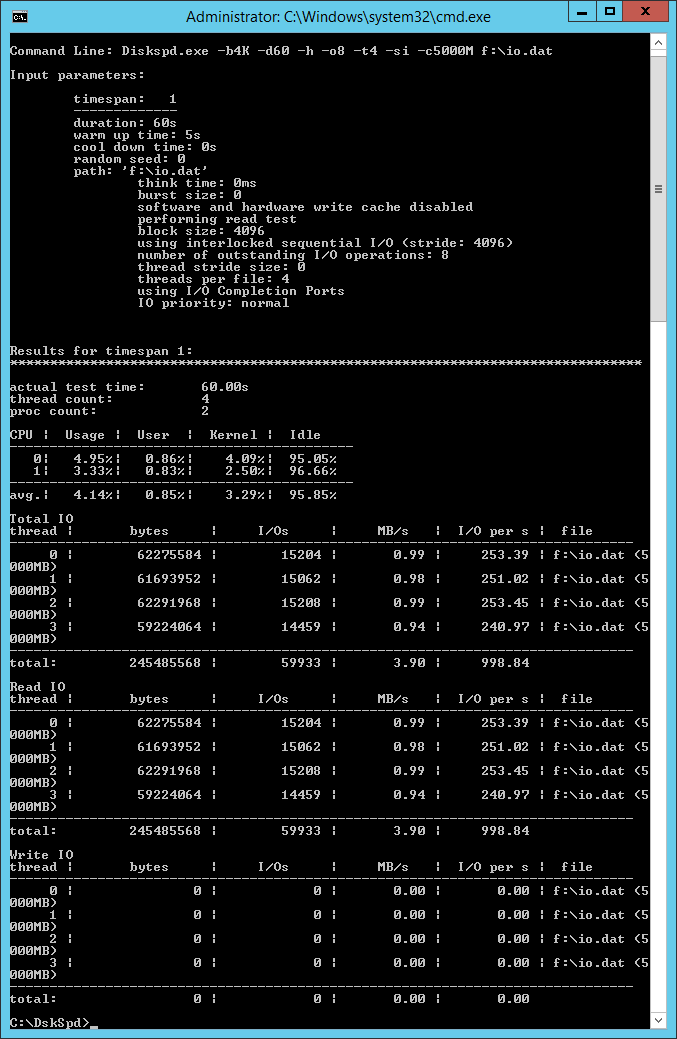

Two Data Disks

If I want 1000 IOPS, then in theory I should deploy two Standard Storage data disks, each capable of 500 IOPS, and aggregate them using Storage Spaces. This test should tell us what’s really possible.

Two data disks, each in a different Standard Storage account, were assigned to the virtual machine and aggregated into a single simple Storage Spaces virtual disk. I ran DiskSpd and got 998.84 IOPS. So, I got roughly double the IOPS by doubling the number of disks, exactly as expected.

Note that when I experimented with other DiskSpd settings, I did get much lower results. For example, I got 793.02 IOPS with 64 KB reads instead of the 4 KB reads used in the documented tests.
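A lower IOPS figure with larger reads does not mean that less data was moved. As a rough illustration, throughput can be estimated as IOPS multiplied by block size. The sketch below applies that to the two figures above; it assumes the block sizes are binary kilobytes (1 KB = 1024 bytes), and the helper name is illustrative.

```python
def throughput_mib_per_s(iops, block_kib):
    """Estimate throughput in MiB/s from IOPS and block size in KiB."""
    return iops * block_kib / 1024


if __name__ == "__main__":
    # Two-disk results quoted in this post: (label, IOPS, block size in KiB).
    runs = [("4 KB reads", 998.84, 4), ("64 KB reads", 793.02, 64)]
    for label, iops, block_kib in runs:
        mib = throughput_mib_per_s(iops, block_kib)
        print(f"{label}: {iops:.2f} IOPS is about {mib:.2f} MiB/s")
```

By this estimate, the 64 KB run moved far more data per second than the 4 KB run, even though its IOPS number was lower.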

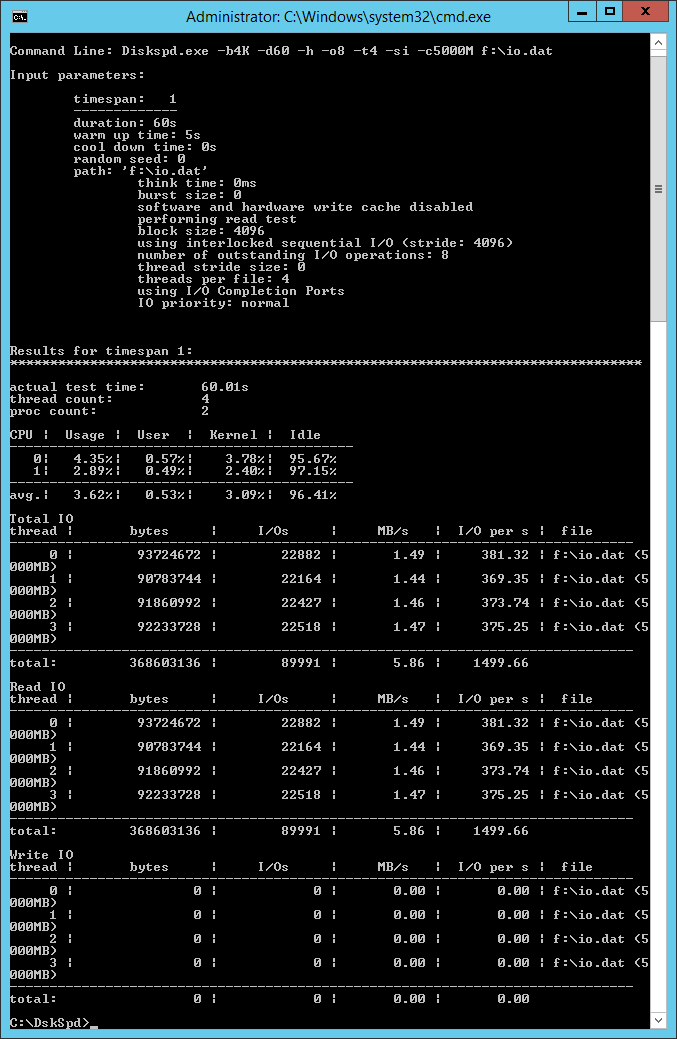

Three Data Disks

I destroyed the Storage Spaces configuration, added another data disk in a third Standard Storage account, and rebuilt the storage. I expected three times the 500 IOPS of a single Standard Storage data disk, or 1500 IOPS. DiskSpd reached 1499.66 IOPS. So far, so good.

Four Data Disks

I decided to push this DS2 machine to its max and added a fourth data disk in a new Standard Storage account. I rebuilt Storage Spaces and reran the tests. Once again, Azure performed as one would expect, providing nearly four times the performance of a single Standard Storage data disk: 1997.71 IOPS.

Comparing Results

I observed linear growth as I added each 500 IOPS-capable Standard Storage data disk; each disk added performance, as marketed and blogged by Microsoft. Note that performance wasn’t always at a flat 500, 1000, 1500, or 2000 IOPS. There were lows and highs, but the variance was small. At times, the IOPS even exceeded the Microsoft-enforced maximum, but not for long before Storage QoS kicked in!
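To put a number on "linear", the four DiskSpd results can be compared with the theoretical ceiling of 500 IOPS per disk. The short sketch below does that arithmetic using the figures reported in this post; the variable and function names are illustrative.

```python
PER_DISK_IOPS = 500  # target for one Standard Storage data disk

# Measured DiskSpd results from this post, keyed by number of data disks.
measured = {1: 499.69, 2: 998.84, 3: 1499.66, 4: 1997.71}


def scaling_efficiency(disks, iops, per_disk=PER_DISK_IOPS):
    """Fraction of the theoretical maximum (disks * per_disk) achieved."""
    return iops / (disks * per_disk)


if __name__ == "__main__":
    for disks, iops in sorted(measured.items()):
        expected = disks * PER_DISK_IOPS
        pct = scaling_efficiency(disks, iops) * 100
        print(f"{disks} disk(s): {iops:8.2f} of {expected} IOPS ({pct:.2f}%)")
```

Every configuration lands within a fraction of a percent of its theoretical maximum, which is what linear scaling looks like.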

As I wrote earlier, I did some digging around and found blog posts, some of them incredibly detailed, that reported non-linear results. What’s different?

I’ve been working with, writing about, and evangelizing Storage Spaces for over three years now. I’ve learned that there are many variables for tuning a storage system, especially a software-defined one. And to be honest, I wasn’t getting great results in my tests until I aligned the stars. The trick is to make sure that your workload is aligned to your allocation unit and interleave size. As I adjusted my tests, I took the race team approach: change one setting, test, and compare. This approach allowed me to identify the exact effect of each change. Too many people get in a hurry and decide to change two or three things before testing, so they never know the true effect.

As for those who complain that this post is based on 4K tests, well, that’s what every vendor uses to market their devices, because that’s what produces the highest IOPS numbers.

The results of this test should put you at ease when it comes to sizing Standard Storage data volumes for Azure VMs based on performance. But please remember that you must also design the software side of the storage system (Storage Spaces and the volume’s file system) to match the expected workload, be it SQL Server, a file server, or a stress test tool.