What Is Non-Uniform Memory Access (NUMA)?

In this article I will provide a brief introduction to non-uniform memory access (NUMA), and I’ll explain how Hyper-V interoperates with the NUMA architectures of host computers.

Non-Uniform Memory Access (NUMA): Overview

Non-uniform memory access is a physical architecture on the motherboard of a multiprocessor computer. The architecture determines how processors or cores are connected, directly and indirectly, to blocks of memory in the machine. Software such as Windows Server or Hyper-V must account for this physical layout to offer the best possible performance for its services.

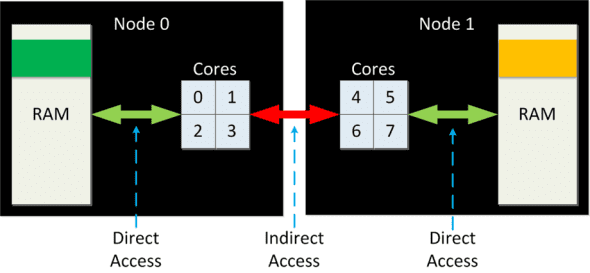

The diagram above illustrates a physical computer with two NUMA nodes. The cores and memory of the computer are split between these NUMA nodes. The cores (0-3) in Node 0 have direct access to half of the memory. The cores (4-7) in Node 1 have direct access to the other half of the memory. Note that the cores of each node have only indirect access to the RAM in the other node.

If any process running on the cores of node 0 requests memory, a NUMA-aware operating system (such as Windows Server or Linux) or application (such as SQL Server) will do its best to assign RAM from the same node. This is because direct access to RAM offers the best performance. For example, let’s say the above machine is running Hyper-V and a virtual machine is running on the logical processors (LP) in node 0. If that virtual machine requests RAM, Hyper-V will always try to assign RAM from node 0.

On the other hand, if there is no RAM available in the same node, the application, operating system, or Hyper-V will have no choice but to assign RAM from another node. If our virtual machine, running in node 0, needs more RAM, and the only place to get that RAM is node 1, then by default Hyper-V will assign it from node 1. Access to that RAM is slower, but the request is satisfied.
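The local-first behavior described above can be sketched in a few lines of Python. This is only an illustration of the idea, not Hyper-V's actual allocator: the `NumaNode` class, the `assign_memory` function, and the memory sizes are all hypothetical names and values chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class NumaNode:
    node_id: int
    free_mb: int  # memory on this node that has not been assigned yet


def assign_memory(nodes, home_node_id, request_mb):
    """Assign request_mb, preferring the home node and spilling to others.

    Returns a list of (node_id, mb) pairs. Raises MemoryError if the host
    as a whole cannot satisfy the request.
    """
    if sum(n.free_mb for n in nodes) < request_mb:
        raise MemoryError("not enough free memory on the host")

    # Try the local (home) node first, then the other nodes in id order.
    ordered = sorted(nodes, key=lambda n: (n.node_id != home_node_id, n.node_id))
    assignments = []
    remaining = request_mb
    for node in ordered:
        if remaining == 0:
            break
        take = min(node.free_mb, remaining)
        if take > 0:
            node.free_mb -= take
            assignments.append((node.node_id, take))
            remaining -= take
    return assignments


nodes = [NumaNode(0, 4096), NumaNode(1, 8192)]
print(assign_memory(nodes, home_node_id=0, request_mb=2048))  # served locally
print(assign_memory(nodes, home_node_id=0, request_mb=4096))  # spills to node 1
```

The second request cannot be met from node 0 alone, so part of it comes from node 1. That remote portion is the memory that carries the performance penalty.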

Have you ever noticed the instructions for your server telling you how to match up RAM with your processors? That’s NUMA in action.

Detecting NUMA Architecture

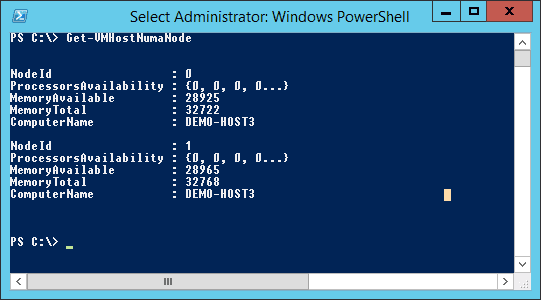

The NUMA architecture of the most common Hyper-V hosts is usually pretty simple: two sockets (processors) means two NUMA nodes. But there are other ways to check, including:

- Running Coreinfo from Microsoft Sysinternals

- Using Performance Monitor to query NUMA Node Memory \ Total MBytes

- Running the Get-VMHostNumaNode PowerShell cmdlet on a Hyper-V host

Hyper-V and NUMA

As you can see above, Hyper-V is designed to optimize how virtual machine processors and memory are assigned to get the best possible performance. Several features have been added across Hyper-V versions to optimize that performance:

- Disable NUMA node spanning

- Guest-aware NUMA

- Customizable virtual processor NUMA topology (to be covered in a later article)

Disable NUMA Node Spanning

When you enable Dynamic Memory (Windows Server 2008 R2 SP1 Hyper-V and later) there is a chance that you will have many virtual machines constantly adding and removing tiny amounts of RAM from the host’s capacity. Hyper-V will try to keep virtual machines within their NUMA node, but if that node’s block of RAM is fully used, Hyper-V will have to span nodes and assign RAM via indirect access from another NUMA node. That can impact the performance not only of the virtual machine but of the entire host.

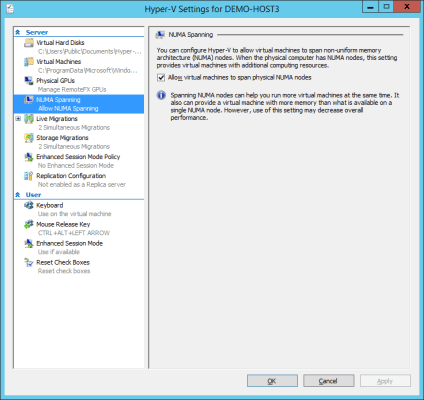

If you see a drop in performance on a host and can correlate it with NUMA node spanning, then you can disable NUMA node spanning in the host’s Hyper-V settings. To check for spanning in Performance Monitor, compare Hyper-V VM Vid Partition : Remote Physical Pages as a percentage of Hyper-V VM Vid Partition : Physical Pages Allocated for each virtual machine.
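As a rough sketch of that comparison, the Python below computes the remote-page percentage for each virtual machine from two counter values. It assumes you have already exported the counter samples from Performance Monitor. The function name, the virtual machine names, and the numbers are hypothetical, and collecting the counters themselves is not shown.

```python
def remote_page_percent(remote_physical_pages, physical_pages_allocated):
    """Return remote pages as a percentage of all pages allocated to a VM."""
    if physical_pages_allocated <= 0:
        return 0.0  # nothing allocated, so nothing can be remote
    return 100.0 * remote_physical_pages / physical_pages_allocated


# Hypothetical samples: VM name -> (Remote Physical Pages, Physical Pages Allocated)
samples = {
    "vm-sql01": (0, 1_048_576),
    "vm-web01": (131_072, 524_288),
}

for vm, (remote, allocated) in samples.items():
    print(f"{vm}: {remote_page_percent(remote, allocated):.1f}% remote")
```

A virtual machine that reports a consistently non-zero percentage is being served partly from another NUMA node. Whether that is enough to explain a performance drop depends on the workload.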

Guest-Aware NUMA

Windows Server 2012 (WS2012) allowed us to create virtual machines with more than four (up to 64) virtual CPUs for the first time. However, this created a possible problem: The virtual CPUs (vCPUs) of larger virtual machines would be almost guaranteed to span NUMA nodes. How would the guest OS or guest services that are running in the virtual machine avoid spanning NUMA nodes?

A feature called Guest-Aware NUMA or Virtual NUMA was added in WS2012. When you start a virtual machine (it must not have Dynamic Memory enabled), Hyper-V reveals to the guest the physical NUMA architecture on which the virtual machine resides. The guest OS of the virtual machine will treat this virtual NUMA layout as if it were the NUMA architecture of a physical computer. The guest OS and any NUMA-aware services will then assign RAM from within the virtual machine so that it aligns with the NUMA node of the associated processes.

Note: Virtual NUMA is disabled in a virtual machine once you enable Dynamic Memory for that virtual machine.
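To make the idea of a virtual NUMA layout concrete, here is a deliberately simplified Python sketch that projects a virtual machine onto virtual NUMA nodes sized like the host's physical nodes. It is an assumption-laden illustration, not Hyper-V's real placement logic: the function `virtual_numa_layout`, its parameters, and the even split of vCPUs and memory are all invented for the example.

```python
import math


def virtual_numa_layout(vcpus, memory_mb, host_cores_per_node, host_mb_per_node):
    """Split a VM into virtual NUMA nodes that each fit in one host node.

    Simplification: the node count is the smallest number of host-sized
    nodes that can hold both the vCPUs and the memory, and both are then
    spread as evenly as possible across those nodes.
    """
    if min(vcpus, memory_mb, host_cores_per_node, host_mb_per_node) <= 0:
        raise ValueError("all arguments must be positive")

    node_count = max(
        math.ceil(vcpus / host_cores_per_node),
        math.ceil(memory_mb / host_mb_per_node),
    )
    layout = []
    for i in range(node_count):
        layout.append({
            "virtual_node": i,
            # Earlier nodes absorb any remainder from the division.
            "vcpus": vcpus // node_count + (1 if i < vcpus % node_count else 0),
            "memory_mb": memory_mb // node_count
            + (1 if i < memory_mb % node_count else 0),
        })
    return layout


# An 8-vCPU, 16 GB VM on a host like the one pictured (4 cores per node),
# assuming 16 GB of RAM per host node.
for node in virtual_numa_layout(8, 16384, 4, 16384):
    print(node)
```

With these inputs the virtual machine is presented as two virtual nodes of four vCPUs each, which a NUMA-aware guest can then use to keep each process's memory next to its processors.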