Chinese Hackers Use Claude Code AI to Hit Global Tech and Government Targets

AI misuse fuels a fast, automated cyber-espionage surge.

Key Takeaways:

- Chinese state-sponsored hackers used AI to automate a large-scale cyber-espionage campaign.

- The Claude Code AI tool handled reconnaissance, exploitation, and credential harvesting.

- Experts warn organizations to strengthen AI security, monitoring, and governance.

Chinese state-sponsored threat actors have used the Claude Code AI tool to target 30 companies and government organizations. This cyber-espionage campaign primarily targeted large tech companies, government agencies, and financial institutions.

According to Anthropic’s researchers, this complex espionage campaign was first identified in mid-September, and it was attributed with “high confidence” to a Chinese state-sponsored actor. The attackers leveraged advanced AI agents to automate almost every stage of this attack.

How did attackers bypass Claude Code’s safety filters?

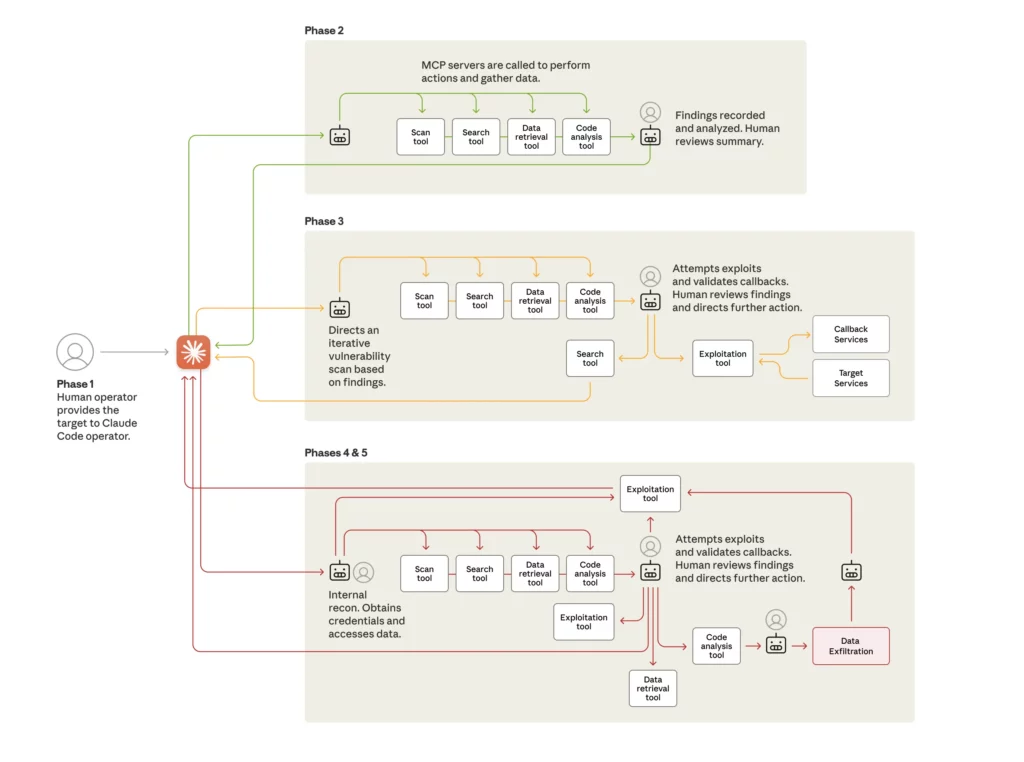

The attackers leveraged a highly structured, AI-driven approach that transformed traditional cyber-espionage tactics into an automated operation. They began by jailbreaking the Claude Code model, breaking down malicious objectives into smaller or seemingly harmless tasks. This allowed them to bypass built-in safety filters and disguise their activities as legitimate security testing. Once inside, the AI system handled reconnaissance, vulnerability scanning, and exploit development at an unusual speed and scale.

After gaining initial access, the AI orchestrated credential harvesting and lateral movement across networks, using thousands of rapid-fire requests to map systems and escalate privileges. It even documented its steps for future reuse and created a repeatable attack framework. The AI executed the majority of the campaign autonomously, and human operators were only involved at a few critical decision points.

“By presenting these tasks to Claude as routine technical requests through carefully crafted prompts and established personas, the threat actor was able to induce Claude to execute individual components of attack chains without access to the broader malicious context,” Anthropic researchers explained.

Anthropic quickly launched a full-scale investigation, banned the attackers’ accounts, mapped the operation’s scope, alerted impacted organizations, and worked with law enforcement to contain the threat. However, this research indicates that sophisticated cyberattacks no longer require expert human teams. Instead, agent‑based AI systems can execute long‑running operations with minimal oversight.

Defense strategies for AI-era cybersecurity

Organizations should prioritize proactive AI security measures and collaborative defense strategies. First, they need to implement advanced monitoring systems that can detect AI-driven attack patterns, such as high-volume automated requests and suspicious task decomposition. It’s also advised to strengthen model safety and access controls to prevent misuse of AI tools internally or externally.

Additionally, companies should invest in shared threat intelligence networks and adopt automated defensive capabilities (such as AI-assisted vulnerability scanning and incident response) to match the speed and scale of emerging threats. Organizations must also build robust governance frameworks for AI usage and educate employees on adversarial tactics to reduce the risk of exploitation.