Are Docker Containers Better than VMs?

Docker is an open-source technology that packages and distributes apps to run in isolated containers on Linux. Much as in Solaris Zones and BSD Jails, processes running in Linux containers share the kernel and other key operating system (OS) components. In addition, these processes are portable and isolated both from each other and from changes in the environment. For a high-level overview of Docker, see our What is Docker? article here on the Petri IT Knowledgebase.

Microsoft already supports Docker in VMs running Linux on Azure, and recently announced Docker-compatible containerization for Windows Server vNext, which will allow applications to be shared, installed, and moved to any other compatible server. Docker has already proved so popular that when it came out of beta earlier this year, some major financial institutions started using it in their production systems.

How Does Docker Work?

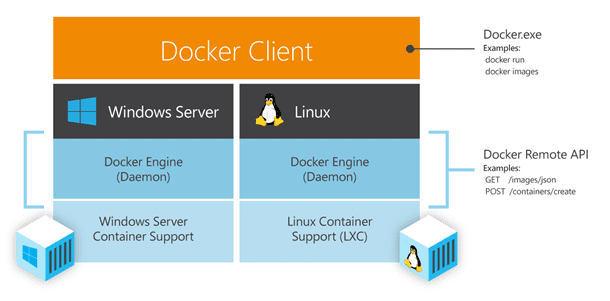

Using a client-server architecture, the Docker client talks to a daemon (service). The client and daemon can run on the same or different systems, and it’s the daemon that executes, builds and distributes app images.
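To make the division of labor concrete, here is a minimal sketch of the client-daemon pattern in Python. It is a toy and not Docker's actual protocol: the JSON message format and the `toy_daemon` and `toy_client` functions are hypothetical names invented for this illustration, and a socket pair stands in for the network connection between the two sides.

```python
import json
import socket
import threading


def toy_daemon(conn):
    """Hypothetical stand-in for the daemon: reads one request and does the work."""
    with conn:
        request = json.loads(conn.recv(4096).decode())
        if request["command"] == "run":
            reply = {"status": "ok",
                     "detail": f"started container from {request['image']}"}
        else:
            reply = {"status": "error",
                     "detail": f"unknown command {request['command']}"}
        conn.sendall(json.dumps(reply).encode())


def toy_client(conn, command, image):
    """Hypothetical stand-in for the client: sends a request, returns the reply."""
    with conn:
        conn.sendall(json.dumps({"command": command, "image": image}).encode())
        return json.loads(conn.recv(4096).decode())


# A connected socket pair plays the role of the client-to-daemon connection.
client_end, daemon_end = socket.socketpair()
worker = threading.Thread(target=toy_daemon, args=(daemon_end,))
worker.start()

response = toy_client(client_end, "run", "ubuntu")
worker.join()
print(response["status"], "-", response["detail"])
```

The point of the sketch is that the client only describes what it wants; all of the work happens on the daemon side, which is why the two can live on different systems.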

Docker Images

At TechEd Europe 2014, Microsoft Azure CTO Mark Russinovich described Docker images as ‘layers.’ To create images, Docker uses a union file system, which allows several file systems to be mounted simultaneously but to appear as one. An app is installed or updated on top of a base OS image, and Docker creates a snapshot using Another Union File System (AuFS), recording each set of changes as a branch that can be referenced systematically. This technology produces lightweight read-only images, because only the differential changes since the last snapshot, rather than entire VMs, need to be distributed each time an app is modified.
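The layering idea can be sketched in a few lines of Python. This is a toy model and not how AuFS is implemented: each layer is a plain dictionary mapping file paths to contents, and the layer names and the `union_view` and `layers_to_ship` helpers are hypothetical, chosen only for illustration.

```python
from collections import ChainMap

# Toy layers: each maps a file path to its contents (all names are hypothetical).
base_os = {"/bin/sh": "shell v1", "/etc/os-release": "base OS"}
app_v1 = {"/app/server.py": "print('v1')"}
app_v2 = {"/app/server.py": "print('v2')", "/app/config.ini": "debug=false"}


def union_view(*layers):
    """Present several layers as one file system; layers are given bottom to top."""
    # ChainMap searches its first mapping first, so the top layer must come first.
    return ChainMap(*reversed(layers))


def layers_to_ship(image_layers, layers_on_host):
    """Return only the layers the target host does not already hold."""
    return [name for name in image_layers if name not in layers_on_host]


image_v2 = union_view(base_os, app_v1, app_v2)
print(image_v2["/app/server.py"])  # the newest layer wins
print(image_v2["/bin/sh"])         # untouched files come from the base layer
print(layers_to_ship(["base_os", "app_v1", "app_v2"], {"base_os", "app_v1"}))
```

A host that already holds the base OS and the first app layer only needs the newest layer, which is what keeps updates small compared with shipping a whole VM image.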

Docker Registry (Hub)

The Docker registry, not to be confused with the system registry in Windows, is a place where Docker images can be stored. There is a public Docker Hub, which will also be supported in Windows Server vNext, and it’s possible to create on-premises private hubs. There are also private storage plans, allowing organizations to make images available in the public Docker Hub to a restricted set of users.

Linux Containers

Docker images tell Linux containers what processes will run and the files they will contain. The image remains read-only, but when a container is created, Docker adds a read-write layer on top, again using AuFS, and the application runs. The default container format is libcontainer, but Docker also supports Linux containers (LXC). Neither provides the same hardware-level isolation as a hypervisor, so neither is as secure.
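The relationship between a read-only image and a container's read-write layer can be illustrated with a small Python sketch. It is a toy model under simplifying assumptions, not Docker's or AuFS's implementation; the `Container` class and the file paths are hypothetical.

```python
from types import MappingProxyType


class Container:
    """Toy model: a private read-write layer stacked on a shared read-only image."""

    def __init__(self, image_files):
        self._image = image_files  # shared between containers, never modified
        self._writes = {}          # this container's own read-write layer

    def read(self, path):
        # Look in the read-write layer first, then fall back to the image.
        if path in self._writes:
            return self._writes[path]
        return self._image[path]

    def write(self, path, data):
        # Changes land only in the container's layer; the image is untouched.
        self._writes[path] = data


# MappingProxyType gives a read-only view, mimicking the immutable image.
image = MappingProxyType({"/etc/motd": "hello", "/app/run.sh": "echo start"})

first = Container(image)
second = Container(image)
first.write("/etc/motd", "changed in first")

print(first.read("/etc/motd"))   # sees its own change
print(second.read("/etc/motd"))  # still sees the image's original file
print(image["/etc/motd"])        # the image itself is unchanged
```

Because every container writes only to its own layer, many containers can start from the same image without copying it or interfering with one another.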

Are containers better than VMs?

Containers run with lower overhead than VMs, are faster, and have a smaller footprint, which in turn makes them more portable. This enables organizations to improve performance, increase the density of apps running in datacenters, and speed up R&D. Containers are especially useful in continuous integration and DevOps environments where application updates are rolled out at breakneck speed, as containers can be provisioned in seconds, rather than the minutes that are required for VMs.

Containerization technology isn’t going to replace Hyper-V in Windows Server vNext, but in the near future it’s likely that we’ll be provisioning fewer VMs and getting more bang for our buck in the cloud. There will be applications that are not compatible with container technology, so in the short to medium term, VMs and app containers will be complementary technologies.

How will Windows containers work?

Microsoft hasn’t yet disclosed anything about the container technology that will be built into Windows Server vNext, other than that it will be compatible with Docker. Some have speculated that it might be based on Drawbridge, Microsoft’s Library OS project that aims to provide containerization with the same security afforded by hypervisors.

Drawbridge is used internally by the Azure team for its Machine Learning (ML) platform, and Russinovich suggested that Drawbridge was superior to what Docker and Linux containers currently provide, ensuring that apps are fully isolated from each other. The Linux side is not standing still, however: Canonical, the company responsible for the Ubuntu Linux distro, has a new project called Linux Container Daemon (LXD), which aims to build on Linux containers (LXC) to add security and isolation like a true hypervisor.

Considering the current developments in the containerization space, even if Drawbridge doesn’t find its way into Windows Server vNext, I’d expect the final solution to offer the security benefits of today’s hardware-based virtualization. Check out the Petri IT Knowledgebase for a closer look as soon as Docker support arrives in the Windows Server Technical Preview.