Accelerate Your Applications with Azure’s Front Door Service

Azure’s application delivery suite continues to grow: the Azure Front Door Service became generally available last week. Front Door Service is a global HTTP load balancing service that you place between your customers and your backend services to distribute traffic across Azure regions, across cloud providers, and even to your own on-premises services. The service has three goals: to make applications easy to scale, to make them more reliable and resilient through instant failover, and to improve their performance through intelligent caching and network optimizations.

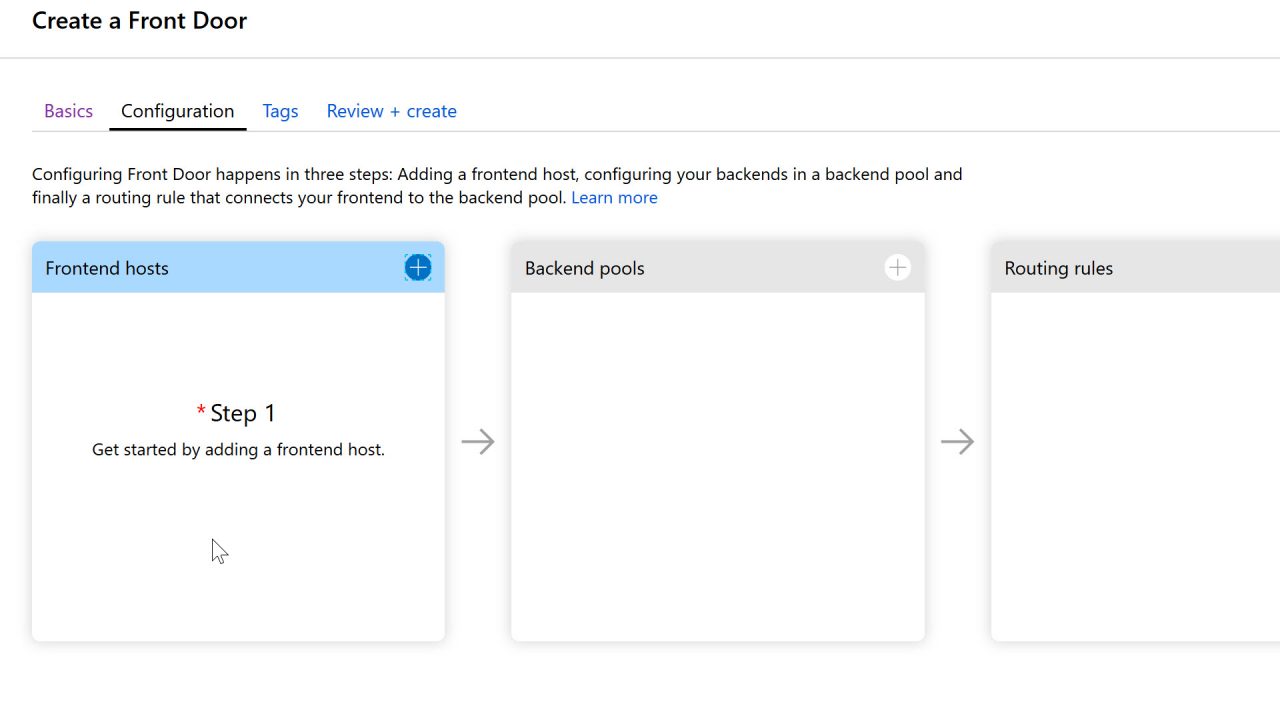

Let’s first look at how to configure a new Front Door Service.

Configuration

Configuration begins with the frontend host. In this step, you choose the hostname you want to use in the azurefd.net domain. This is the hostname your customers will use to reach your services, for example, petri.azurefd.net. Front Door also supports custom domains. When creating the frontend host, you can optionally enable session affinity. With affinity enabled, all requests in a user session go to the same application backend, as long as that backend remains available. Session affinity is similar to the Application Request Routing (ARR) affinity feature of App Services, which also relies on HTTP cookies.
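
To make cookie-based affinity concrete, here is a minimal Python sketch of the idea, not Front Door’s actual implementation. The cookie name `fd_affinity` and the `pick_backend` helper are hypothetical, and storing the backend hostname directly in the cookie is a simplification; the real service manages its own cookie format.

```python
import random
from typing import Dict, List, Tuple

AFFINITY_COOKIE = "fd_affinity"  # hypothetical cookie name, for illustration only


def pick_backend(cookies: Dict[str, str], healthy: List[str],
                 rng: random.Random) -> Tuple[str, Dict[str, str]]:
    """Return (backend, cookies_to_set) for one request.

    If the request carries an affinity cookie naming a backend that is still
    healthy, reuse it. Otherwise choose a healthy backend and return a new
    cookie that pins the session to it.
    """
    pinned = cookies.get(AFFINITY_COOKIE)
    if pinned is not None and pinned in healthy:
        return pinned, {}  # session stays on the same backend
    chosen = rng.choice(healthy)  # first request, or the pinned backend is gone
    return chosen, {AFFINITY_COOKIE: chosen}


# Usage: the first request gets pinned; the follow-up request stays put.
rng = random.Random(0)
healthy = ["petri-useast.azurewebsites.net", "petri-eunorth.azurewebsites.net"]
first, set_cookie = pick_backend({}, healthy, rng)
second, _ = pick_backend(set_cookie, healthy, rng)
print(first == second)  # True
```

A request without the cookie gets a backend plus a cookie to set; later requests that present the cookie stay pinned until that backend drops out of the healthy list, at which point the session is re-pinned elsewhere.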

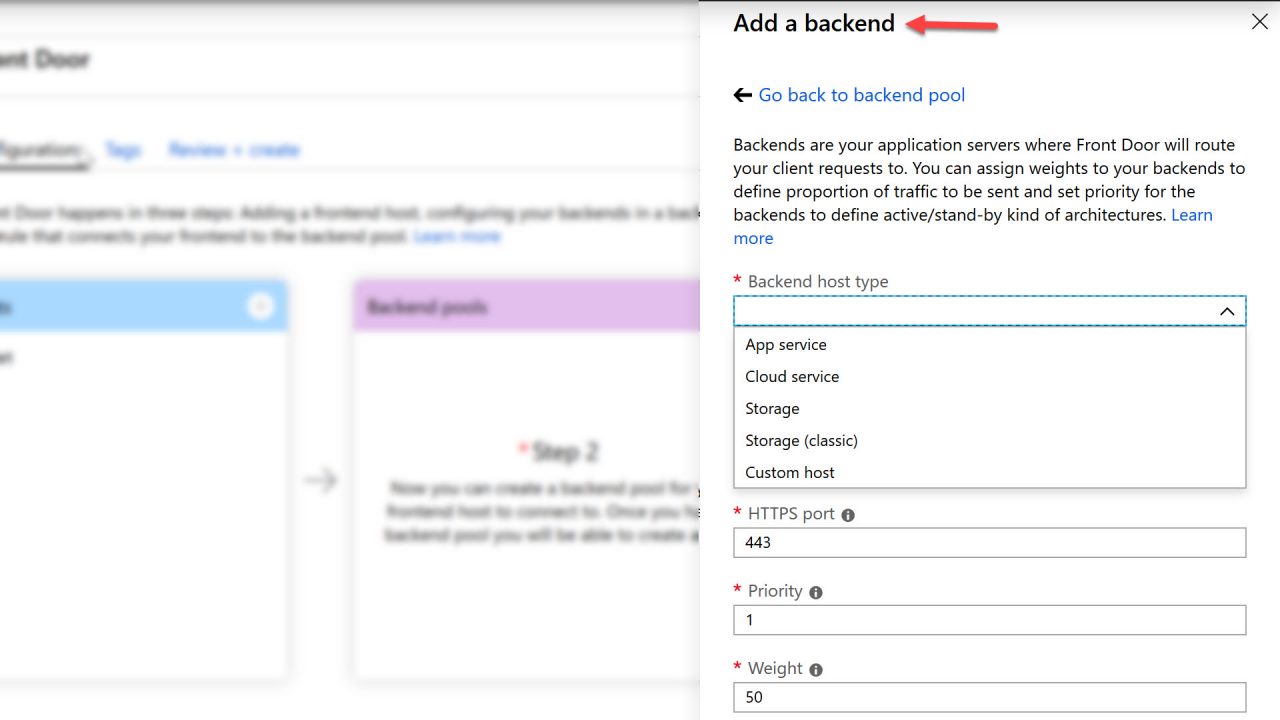

The second step in configuring Front Door is to add your backend pools. A backend pool is a group of application servers (backends) that Front Door uses as destinations when routing your customers’ requests. You can add backends from your existing Azure App Services or Cloud Services, and you can also add custom hosts that run in a different cloud provider or inside your own data center. For example, I could add App Services from two different Azure regions: petri-useast.azurewebsites.net in the East US region and petri-eunorth.azurewebsites.net in the North Europe region. When clients request your application at petri.azurefd.net, Front Door sends each request to the backend with the lowest latency, which is typically the one closest to the customer. Front Door can also spread requests across several backends with similarly low latency, or use priority and weighted routing strategies.
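
These routing strategies can be combined. The sketch below assumes a simplified model in which Front Door first discards unhealthy backends, then keeps the best priority tier, then keeps backends within a latency sensitivity window of the fastest one, and finally makes a weighted random choice. The `Backend` fields, the latency figures, and the `sensitivity_ms` parameter are illustrative assumptions, not values read from the service.

```python
import random
from dataclasses import dataclass
from typing import List


@dataclass
class Backend:
    host: str
    priority: int        # lower number = preferred tier (assumed convention)
    weight: int          # relative share of traffic within the chosen set
    latency_ms: float    # latency measured from the edge to this backend
    healthy: bool = True


def choose_backend(pool: List[Backend], sensitivity_ms: float,
                   rng: random.Random) -> Backend:
    """Pick one backend: health, then priority, then latency, then weight."""
    candidates = [b for b in pool if b.healthy]
    if not candidates:
        raise RuntimeError("no healthy backends in pool")

    # 1. Priority: keep only the best (lowest-numbered) tier.
    best_tier = min(b.priority for b in candidates)
    candidates = [b for b in candidates if b.priority == best_tier]

    # 2. Latency: keep backends within sensitivity_ms of the fastest one.
    fastest = min(b.latency_ms for b in candidates)
    candidates = [b for b in candidates
                  if b.latency_ms <= fastest + sensitivity_ms]

    # 3. Weight: weighted random choice among the remaining backends.
    weights = [b.weight for b in candidates]
    return rng.choices(candidates, weights=weights, k=1)[0]


# Hypothetical latencies for the two App Services from the example above.
pool = [
    Backend("petri-useast.azurewebsites.net", priority=1, weight=50, latency_ms=20.0),
    Backend("petri-eunorth.azurewebsites.net", priority=1, weight=50, latency_ms=95.0),
]
print(choose_backend(pool, sensitivity_ms=0, rng=random.Random(0)).host)
```

With `sensitivity_ms=0`, every request goes to the single fastest backend; widening the window lets backends with similar latency share traffic according to their weights, while a lower-priority tier only receives traffic once every backend in the better tier is unhealthy.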

To measure backend latency and overall health, you also need to configure the health probes that Front Door sends to your backend pools. Basic health probe configuration includes the URL path for each probe request, the protocol (HTTP or HTTPS), and the probe interval. A 200 OK response from your service tells Front Door that the backend is healthy; any other response counts as a failure.
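
A single health probe can be sketched with Python’s standard `http.client`, which, unlike `urllib`, does not follow redirects, so a 301 or 302 is counted as a failure just like a 500. This is an illustration under assumptions: the probe sends a GET, the `probe` and `is_healthy_status` names are hypothetical, and the real service runs its probes from its own edge locations on the interval you configure.

```python
import http.client
from urllib.parse import urlsplit


def is_healthy_status(status: int) -> bool:
    # Only 200 OK counts as healthy; 204, 3xx, 4xx and 5xx are all failures.
    return status == 200


def probe(url: str, timeout: float = 5.0) -> bool:
    """Send one health probe and report whether the backend looks healthy."""
    parts = urlsplit(url)
    conn_cls = (http.client.HTTPSConnection if parts.scheme == "https"
                else http.client.HTTPConnection)
    conn = conn_cls(parts.hostname, parts.port, timeout=timeout)
    path = parts.path or "/"
    if parts.query:
        path += "?" + parts.query
    try:
        # http.client never follows redirects, so a 301/302 is seen as-is.
        conn.request("GET", path)
        return is_healthy_status(conn.getresponse().status)
    except (OSError, http.client.HTTPException):
        # Refused connections, DNS errors, timeouts, malformed responses.
        return False
    finally:
        conn.close()
```

You might call `probe("https://petri-useast.azurewebsites.net/health")` once per probe interval; the `/health` path is an assumption, so point the probe at whatever lightweight path your application exposes.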

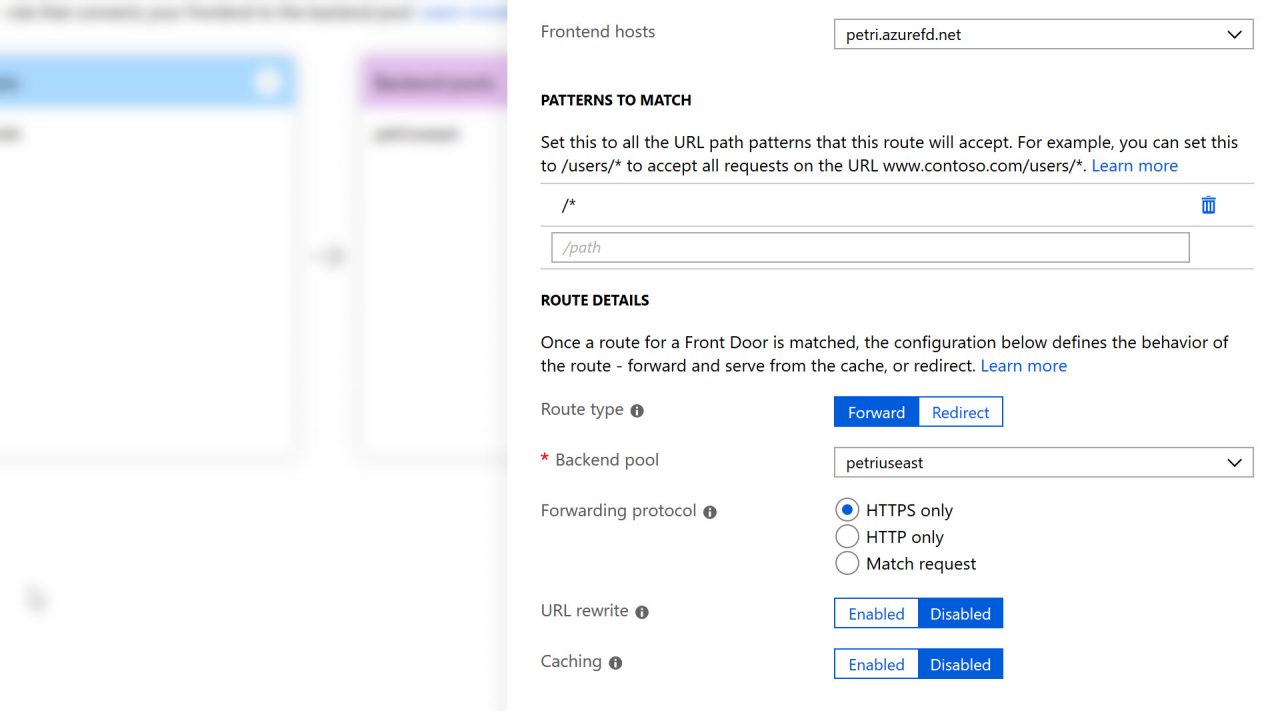

The last step in configuring a new Front Door is to add routing rules. A routing rule defines the path patterns to match and tells Front Door whether to forward or redirect a matching request. For forwarded requests, you can also set a custom forwarding path to rewrite the URL, and enable caching for static content under a given path.
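
Rule matching can be illustrated with a small, simplified matcher: exact patterns win over wildcards, longer wildcard prefixes win over shorter ones, and a custom forwarding path replaces the matched prefix. These precedence and rewrite rules are assumptions for the sketch, as are the `RoutingRule` and `route` names; the Front Door documentation describes the service’s exact behavior.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class RoutingRule:
    pattern: str                           # exact "/about" or wildcard "/images/*"
    action: str                            # "forward" or "redirect"
    forwarding_path: Optional[str] = None  # optional URL rewrite for forwards
    redirect_host: Optional[str] = None    # e.g. "https://www.example.com"
    cache: bool = False                    # cache responses for matching paths


def _specificity(rule: RoutingRule, path: str) -> Tuple[int, int]:
    """Rank a match: exact (2, len) > wildcard (1, len); (0, 0) = no match."""
    if rule.pattern.endswith("/*"):
        prefix = rule.pattern[:-1]  # "/images/*" -> "/images/"
        return (1, len(prefix)) if path.startswith(prefix) else (0, 0)
    return (2, len(rule.pattern)) if path == rule.pattern else (0, 0)


def route(rules: List[RoutingRule], path: str) -> Optional[Tuple[str, str, bool]]:
    """Return (action, target, cache) for the most specific rule, or None."""
    ranked = [(_specificity(r, path), r) for r in rules]
    ranked = [(score, r) for score, r in ranked if score[0] > 0]
    if not ranked:
        return None
    _, rule = max(ranked, key=lambda item: item[0])

    if rule.action == "redirect":
        # Send the client elsewhere, keeping the original path.
        return ("redirect", f"{rule.redirect_host}{path}", False)

    target = path
    if rule.forwarding_path is not None:
        if rule.pattern.endswith("/*"):
            # Swap the matched prefix for the forwarding path, keep the rest.
            remainder = path[len(rule.pattern) - 1:]
            target = rule.forwarding_path.rstrip("/") + "/" + remainder
        else:
            target = rule.forwarding_path
    return ("forward", target, rule.cache)
```

For example, with rules for `/*`, `/images/*` (forwarding path `/static/`, caching on), and an exact `/old` redirect to `https://www.example.com`, `route(rules, "/images/logo.png")` returns `("forward", "/static/logo.png", True)`, while `/old` is redirected to `https://www.example.com/old`.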

Azure Front Door and Azure Traffic Manager

If you’ve used Azure Traffic Manager, you might wonder how Front Door compares, because Traffic Manager also provides global load balancing. The primary difference is that Traffic Manager relies on DNS lookups to route customer requests to your backend services. If an endpoint becomes unhealthy, a customer must wait for their cached DNS result to expire before failing over to a healthy endpoint. Front Door provides faster failover because it is a reverse proxy that sits on the network between the customer and your backend services, so it can stop sending traffic to a backend as soon as that backend fails its health probes. As a reverse proxy, Front Door can also offer features that Traffic Manager cannot.
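
A back-of-the-envelope calculation shows why the DNS cache matters. The sketch assumes that failure detection takes one probe interval per consecutive failed probe, and that with DNS-based routing a client may have refreshed its cached answer just before the failover, so it can wait up to one more full TTL. The function name and the probe interval, failure count, and TTL used below are hypothetical values, not defaults of either service.

```python
def worst_case_failover_s(probe_interval_s: float, failed_probes_needed: int,
                          dns_ttl_s: float = 0.0) -> float:
    """Rough upper bound on how long clients may keep hitting a failed backend.

    Detection: the backend has to fail `failed_probes_needed` probes in a row,
    roughly one probe interval each. With DNS-based routing, a client may have
    cached the old answer just before failover, adding up to one full TTL.
    For a reverse proxy, leave dns_ttl_s at 0: clients keep talking to the
    same proxy address, and the proxy reroutes behind it.
    """
    detection_s = probe_interval_s * failed_probes_needed
    return detection_s + dns_ttl_s


# Hypothetical settings, for comparison only.
dns_based = worst_case_failover_s(30, 3, dns_ttl_s=300)   # DNS-routed
proxy_based = worst_case_failover_s(30, 3)                # reverse proxy
print(dns_based, proxy_based)
```

With these hypothetical numbers, the DNS-based case comes to 390 seconds versus 90 seconds for the proxy. Some resolvers and clients cache answers longer than the TTL, which can make the DNS case worse in practice.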

Front Door can cache your static content and return cached assets directly to your customers without a trip to your backend. As a proxy, Front Door also offers features to accelerate your dynamic content. For example, Front Door lets you offload SSL processing, and it can re-encrypt requests to keep SSL end to end. Front Door also uses network optimizations such as Anycast and Split TCP. Anycast lets customers reach a Front Door environment with the fewest network hops, while Split TCP reduces latency by splitting one long, high-latency connection into shorter pieces: the customer’s connection terminates at a nearby Front Door environment, which forwards the request to your backend over a separate, long-lived connection. Combine these features with standard reverse proxy features like dynamic compression, and Front Door provides dynamic site acceleration as a service.
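
The caching behavior can be pictured as a tiny time-to-live cache sitting in front of the origin. This is a toy model, not how Front Door stores content: the `EdgeCache` class, the `fetch` callback, and the single fixed `ttl_s` are assumptions, and a real edge would also honor the origin’s cache-control headers.

```python
import time
from typing import Callable, Dict, Tuple


class EdgeCache:
    """Toy edge cache: serve fresh copies locally, go to the backend otherwise."""

    def __init__(self, fetch: Callable[[str], bytes], ttl_s: float,
                 clock: Callable[[], float] = time.monotonic):
        self._fetch = fetch    # calls the origin backend for a path
        self._ttl = ttl_s      # how long a cached copy stays fresh
        self._clock = clock
        self._store: Dict[str, Tuple[float, bytes]] = {}
        self.backend_calls = 0

    def get(self, path: str) -> bytes:
        now = self._clock()
        entry = self._store.get(path)
        if entry is not None and now - entry[0] < self._ttl:
            return entry[1]            # cache hit: no trip to the backend
        body = self._fetch(path)       # miss or stale copy: ask the origin
        self.backend_calls += 1
        self._store[path] = (now, body)
        return body
```

The `clock` parameter exists so the sketch can be exercised with a fake clock; in normal use it defaults to `time.monotonic`. Repeated requests for the same asset within `ttl_s` never reach the origin, which is the saving the paragraph above describes.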

Front Door is Microsoft’s Dog Food

If you start using Front Door today, you’ll be happy to hear that the service comes with a 99.99% availability SLA. You’ll also be glad to know that Microsoft already uses Front Door itself. High-profile sites you might use every day, like Office 365, Bing, Azure DevOps, and LinkedIn, already rely on Front Door.