An Interview with Microsoft Azure CTO Mark Russinovich

Microsoft is at a crossroads these days. Impressive products like Windows Phone, the Xbox One, and even the newly-released Windows 10 Technical Preview are tangible proof that Microsoft is making better products and services than ever before. Yet the pace of innovation and the level of competition facing Microsoft are intense.

Windows Phone devices may be the technical equal of smartphones running iOS and Android, but Windows Phone lags far behind both in market share. The Xbox One suffered from a launch campaign that could serve as a textbook example of how not to launch a consumer technology product, and has been outsold by Sony’s PlayStation 4 game console since launch. Windows 10 looks promising as a very public apology for Microsoft’s “New Coke” moment, as Windows 8 was widely reviled by businesses and consumers alike. The two years Microsoft spent trying to convince people to buy Windows 8 were lost time, and even helped Apple and the oft-maligned Google Chromebooks realize retail market share gains against Windows PCs.

A Success Story: Microsoft Azure

Another competitive market segment is cloud computing, an area where Microsoft is mainly locked in a three-way battle for dominance with Amazon Web Services (AWS) and Google Cloud Platform. AWS is the clear market leader. Google was uncharacteristically late to the market and is therefore battling for third place with the likes of IBM SoftLayer and others, but Microsoft Azure is steadily making gains.

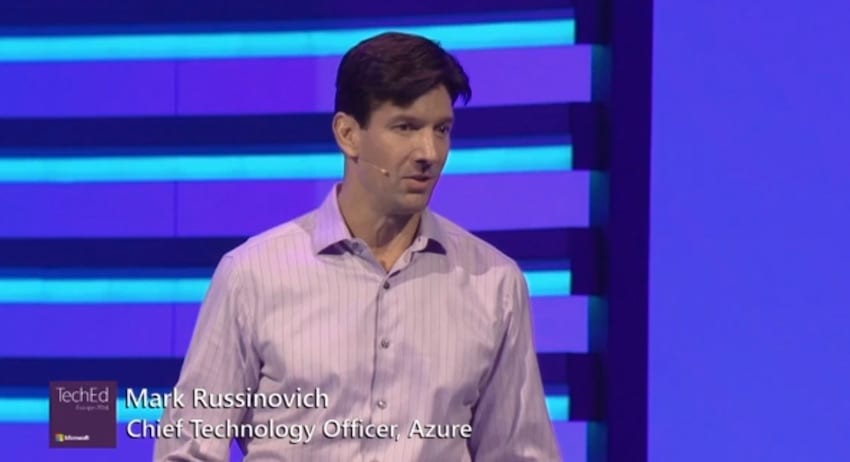

Microsoft CEO Satya Nadella deserves credit for helping build Azure into what it is today, but one of the most influential people on the Azure team is Microsoft Azure Chief Technology Officer Mark Russinovich. As outlined in a Wired magazine interview earlier this year, Russinovich isn’t afraid to criticize his employer, and can be refreshingly frank and direct about Microsoft’s failings. In an interview I had with Russinovich at TechEd 2014, he said that Microsoft was now operating in a “very competitive world” and that the company was behind in several areas. Here’s a larger excerpt from that interview:

“So we’ve got to recognize that. Even in the cloud we’re behind in certain ways too. This is a place that’s moving so fast and we’ve seen how just taking your eye off something for a few years could be the end of it. The difference between being a player and being a distant, inconsequential part of a market — as we are in some of these areas — [is that] we can’t take things for granted, or we can’t take our customers for granted. At Azure we definitely believe this.”

A few weeks before Microsoft TechEd Europe 2014 – where Russinovich took the stage to demo new Azure services like the Azure Batch Service, the Docker Client for Windows, and Azure Premium Storage – I had the opportunity to conduct a wide-ranging phone interview with Mark in which we discussed the state of IT, the future of Microsoft Azure, and how far IT has come in the last decade. What follows are portions of that interview, edited for space and clarity.

Recent Improvements to Microsoft Azure

Jeff James: We last spoke at Microsoft TechEd 2014 in Houston, and there were several new features for Microsoft Azure announced at the time. Azure has seen some additional improvements and new features since then, so perhaps you could talk a bit about some of those updates?

Mark Russinovich: One thing that’s top of mind is we released our Microsoft Azure D-series of VMs recently. These VMs offer faster CPU, more RAM, and local SSDs, which we haven’t had before. (Editor’s Note: Microsoft also announced the massive Azure G-series VMs — the largest ever offered — just after this interview was conducted.)

That unlocks a bunch of different scenarios. One of the things that D-series VMs work nicely with is SQL Server, which has a feature called buffer pool extension that allows you to point a buffer pool at a local storage device. In this case, it would be the SSD. It’s essentially a second tier of high-speed cache behind RAM for SQL.
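(Editor's Note: To illustrate the general idea of a second cache tier behind RAM, here is a minimal conceptual sketch in Python. It is not SQL Server's implementation, and every name in it, including `TwoTierCache` and `read_from_disk`, is hypothetical. It assumes a simple least-recently-used policy: pages evicted from the RAM tier land in a larger SSD tier, so a later read can avoid going all the way back to slow storage.)

```python
from collections import OrderedDict


class TwoTierCache:
    """Conceptual sketch: a small RAM tier backed by a larger SSD tier.

    Hypothetical illustration only; real database engines are far more involved.
    """

    def __init__(self, ram_pages, ssd_pages, read_from_disk):
        self.ram = OrderedDict()  # hottest pages, least recently used first
        self.ssd = OrderedDict()  # pages pushed out of the RAM tier
        self.ram_pages = ram_pages
        self.ssd_pages = ssd_pages
        self.read_from_disk = read_from_disk  # assumed slow backing-store read
        self.hits = {"ram": 0, "ssd": 0, "disk": 0}

    def get(self, page_id):
        if page_id in self.ram:
            # Fastest path: the page is already in the RAM tier.
            self.ram.move_to_end(page_id)
            self.hits["ram"] += 1
            return self.ram[page_id]
        if page_id in self.ssd:
            # Second tier: slower than RAM, but avoids the backing store.
            data = self.ssd.pop(page_id)
            self.hits["ssd"] += 1
        else:
            data = self.read_from_disk(page_id)
            self.hits["disk"] += 1
        self._put_ram(page_id, data)
        return data

    def _put_ram(self, page_id, data):
        self.ram[page_id] = data
        if len(self.ram) > self.ram_pages:
            # Demote the least recently used RAM page to the SSD tier.
            old_id, old_data = self.ram.popitem(last=False)
            self.ssd[old_id] = old_data
            if len(self.ssd) > self.ssd_pages:
                # The SSD tier is full too, so drop its oldest page.
                self.ssd.popitem(last=False)
```

With a RAM tier of two pages, reading pages 1, 2, 3 and then 1 again serves the final read from the SSD tier rather than from disk, which is the benefit described above.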

At Microsoft TechEd 2014, there was a lot of news about the rebranding of Hyper-V Replica to Azure Site Recovery and the support for DR, not just using Azure as the orchestrator but actually to Azure or back from on premises.

Since then we acquired a company called InMage which does VM replication. It works on basically any VM, whether it’s on Amazon or a VMware VM, to replicate data. We think this is a great tool for our customers that want to migrate from, for example, VMware or Amazon to Azure because they can do it in real time and minimize the downtime through the migration.

Jeff: That leads to my next question: With all of these cloud services, the way Microsoft is releasing updates to their products and services has radically changed. Years ago, a product like Windows Server 2008 would come out as a discrete SKU, followed by a service pack (or two) down the road. But with Azure and some of these other new cloud services, the updates are ongoing, a constant product iteration. Maybe you could talk a little bit about how Microsoft’s approach to developing and shipping products has changed?

Mark: This is part of the transformation of the way Microsoft produces software, going from a boxed product company to a service company. When I hear about companies that are saying, “Oh, we’ve got the cloud now. We’re going to start working on the cloud.” I won’t name any names, but some of the largest companies in IT that are delivering IT infrastructure are suddenly saying “We’ve got the cloud.”

I know firsthand from being at Microsoft through this transition from a boxed product mentality to a service mentality: you can say it, but it really requires a massive cultural change and engineering systems change to support the constant delivery that we’re doing now.

It takes many years. This is coming from a company that had experience in cloud services or service delivery through things like Hotmail and Xbox Live and Bing for many years.

But even getting to the point where we’re releasing a public platform and products on top of that that are aimed at IT, it was still a transformation, and we’re still [going through that transformation]. We’re still not perfect yet.

There’s lots of room for us to improve, but the boxed product delivery model is one where there’s a big cost for customers to absorb a new release because they’re responsible, ultimately, for deploying and upgrading their servers, testing for compatibility, and it’s therefore disruptive to them.

Their tolerance or willingness to accept new software is weighed against the benefits they’re going to get out of taking that new software. One factor behind this multiyear cycle between big releases is customers not wanting to go through this disruption on a frequent basis because you’re going to be delivering incremental improvements on a frequent basis. That’s not worth the cost of going and disrupting your whole infrastructure and doing all [of your own] testing.

What that leads to is the cost of a bug becomes very high, because if you deliver a bug as part of that software and then deliver a fix shortly after, you’re causing another wave of disruption through the system. Patching is a very expensive process.

These things combine to create this development process which starts with the team looking at what features they’re going to produce in the next release, spending some time on that, typically months. Coming up with a plan. Starting the engineering work. Going through these milestones with internal integration tests in-between milestones.

Next we get to a point where it’s somewhat stable. Releasing that as a preview or a beta to the outside world. Then when they’re close to the final release, they’ve got all the functionality in, then there’s typically a longer beta where you want to ensure that enough of your customers have played with the product in environments as close to production as possible to ensure that you’re not releasing those bugs as part of that product.

At the same time, you’re going through lots of testing internally. Then once you get at that level of confidence you [release it out to the world.] That’s a two to three year process that Microsoft and many other boxed product companies have traditionally gone through.

With delivering software as a service, we’re the ones taking the disruption when it comes to the updates, not the customer. We can create a VM system that can produce the software, test the software, and release it in an agile manner.

One of the best things we get out of software delivered as a service is the ability to test in production where we can do, of course, our own internal testing on our test clusters and with our own first party workloads. Once we’re ready and have confidence in the release, we start to push it out to production but we do it in a very careful way where what we’ll do is push it out in what we call slices.

We’ll do a first slice in production to just a small subset of the total capacity. See how that goes. That’s basically evaluating the software with real customer production workload hitting it.

If that does well then we’ll roll it out to a larger slice and then continue to accelerate until we’ve got full coverage.
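(Editor's Note: The slice-by-slice rollout Russinovich describes can be sketched roughly as follows. This is an illustrative sketch only, not Azure's actual deployment system. The function name `staged_rollout`, the `deploy` and `is_healthy` callbacks, and the example slice fractions are all hypothetical assumptions made for the example.)

```python
def staged_rollout(total_nodes, slice_fractions, deploy, is_healthy):
    """Deploy to progressively larger slices of capacity.

    Hypothetical sketch: stops at the first slice that reports an unhealthy
    node. Returns (succeeded, nodes_updated). Rollback is out of scope here.
    """
    done = 0
    for fraction in slice_fractions:
        # Each slice extends coverage to a cumulative fraction of capacity.
        target = min(total_nodes, max(done + 1, round(total_nodes * fraction)))
        batch = range(done, target)
        for node in batch:
            deploy(node)
        # Evaluate the new build under real workload before widening the slice.
        if not all(is_healthy(node) for node in batch):
            return False, target
        done = target
    return True, done
```

For example, calling `staged_rollout(1000, [0.01, 0.1, 0.5, 1.0], deploy, is_healthy)` would update 10 nodes first, then widen to 100, 500, and finally all 1,000, halting early if any slice looks unhealthy. The widening fractions mirror the idea of starting with a small subset and accelerating until coverage is complete.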

The cost of a bug fix is actually much lower, too, because our engineering systems are designed to detect a problem quickly and then for our developers to be able to fix the problem and then push out that fix to production very quickly, on the order of a day, typically, or even hours in some cases.

The whole model changes. It requires a cultural shift in the fact that now it’s not sustained engineering that is dealing with bug fixes while the product team is off figuring out what to do in the next release. It’s actually the developers of the product that are essentially operating. This is the whole DevOps model that everybody’s talking about and going towards.

The developers have the field production because they’re the ones… Basically, developers are the company that’s operating the software. If there’s a bug, we need to fix it and we need to feel the pain of it directly so we can feel the urgency of fixing it, understand the problem, and roll out a fix for it.