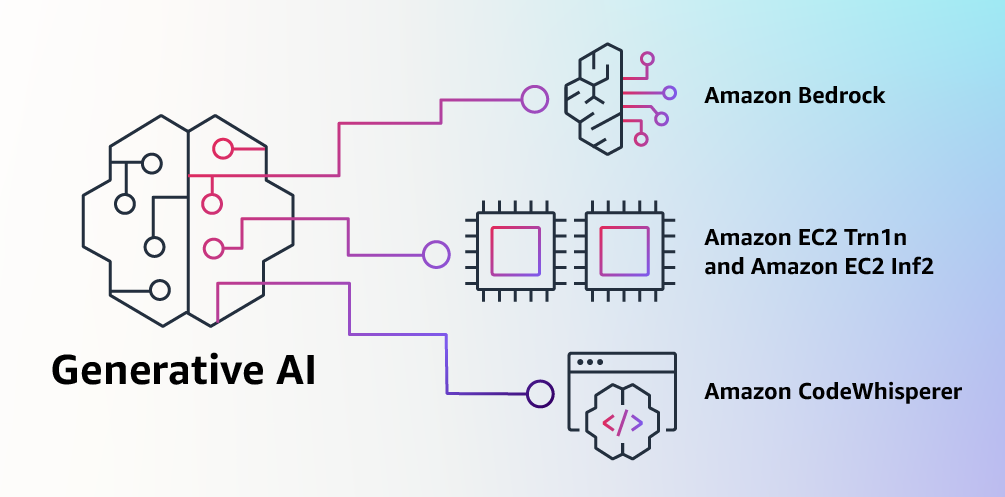

Amazon Bedrock Brings Generative AI Capabilities to AWS

Amazon yesterday announced Amazon Bedrock, a new platform that lets organizations build and scale generative AI applications along the lines of OpenAI’s ChatGPT. Although the company is the leading cloud provider through Amazon Web Services, it was a bit late to join the generative AI race, but it is well positioned to catch up.

“At AWS, we have played a key role in democratizing ML and making it accessible to anyone who wants to use it, including more than 100,000 customers of all sizes and industries. AWS has the broadest and deepest portfolio of AI and ML services at all three layers of the stack,” emphasized Swami Sivasubramanian, VP, Database, Analytics and ML at AWS.

Amazon Bedrock lets companies build and scale generative AI applications

With Bedrock, Amazon isn’t interested in building its own version of ChatGPT. Instead, it will let other companies build generative AI apps using foundation models (FMs) from AI startups including AI21 Labs, Anthropic, and Stability AI, as well as Amazon’s own Titan FMs.

Amazon Bedrock is currently available in limited preview, but the company claims that it will be “the easiest way to build and scale generative AI applications with FMs” due to the variety of foundation models available to developers. For example, Amazon’s two Titan models are optimized for summarization, text generation, and detecting harmful content in data, while models from AI partners target other workloads, including text processing and image generation.

For developers using AWS to train ultra-large machine learning models, the company also announced the general availability of Amazon EC2 Inf2 instances powered by AWS Inferentia2 chips, which provide a cost-effective way to run generative AI workloads. New EC2 Trn1n instances powered by AWS Trainium chips are also available to train generative AI models faster.

Amazon’s CodeWhisperer AI coding companion is now free to use

Amazon also announced yesterday that CodeWhisperer, the company’s AI-powered code-generation tool, is now available free of charge for developers. CodeWhisperer supports Python, Java, JavaScript, TypeScript, C#, and many other languages, and it can be accessed within the AWS Lambda Console, Visual Studio Code, and other IDEs.

You can sign up to use CodeWhisperer for free on the official product page. A CodeWhisperer Professional Tier is also available with more administration features and higher limits on security scanning.