This article discusses the new Hyper-V features in Windows Server 2016 (WS2016) Technical Preview 5 (TP5), which is available to download now, and their impact.

Windows Server 2016 Technical Preview 5 New Features

Microsoft recently released the latest public preview of Windows Server 2016 and Hyper-V Server 2016 (the free version). There are lots of new Hyper-V features to evaluate, learn, and use.

If I had to give this release a theme, it would be the cloud. Much of the 2016 release is geared toward building private, hosted, or public clouds, with much of the management offered by either Azure or Microsoft Azure Stack. When evaluating WS2016, you’ll need to consider:

- Nano Server, with administration via Remote Server Management Tools

- Hyper-V

- Failover Clustering

- Storage

- Networking, particularly the Network Controller

- Microsoft Azure Stack

- Containers

In this post, I’m going to focus on the improvements to Hyper-V.

Connected Standby

This is a feature for Windows 10 users and the few presenters who run Windows Server Hyper-V on a laptop or hybrid device. You might even say that it was dedicated to Paul Thurrott when it was announced at TechEd Europe 2014, because Paul was one of the most vocal sufferers of the incompatibility between Hyper-V and Connected Standby, the much-heralded Windows 8 hardware feature. Those issues have been solved in Windows 10 and Windows Server 2016.

Discrete Device Assignment (DDA)

Discrete Device Assignment is a relatively recent addition to the list of improvements. DDA allows you to give a virtual machine direct and exclusive access to certain PCI Express (PCIe) devices in the host; the headline scenario is a virtual machine that talks directly to a graphics card. My suspicion is that the primary customers for this feature are those using Microsoft Azure N-Series virtual machines. But anyone who wants something better than RemoteFX, or who runs compute-intensive workloads, should be interested in this feature, too.
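To make the workflow more concrete, here is a minimal Python sketch that assembles the PowerShell lines for handing one host device to a virtual machine. It assumes the `Set-VM`, `Dismount-VMHostAssignableDevice`, and `Add-VMAssignableDevice` cmdlets as they shipped in WS2016; the preview may differ. The VM name and the device location path are hypothetical placeholders, the device is assumed to be disabled on the host already, and large GPUs may need extra MMIO settings that this sketch leaves out.

```python
from typing import List


def ps_quote(value: str) -> str:
    """Wrap a value in PowerShell single quotes, doubling embedded quotes."""
    return "'" + value.replace("'", "''") + "'"


def dda_commands(vm_name: str, location_path: str) -> List[str]:
    """Build PowerShell lines that hand one host PCIe device to a VM.

    vm_name and location_path are hypothetical, caller-supplied values.
    The device is assumed to be disabled on the host already
    (for example with Disable-PnpDevice).
    """
    vm = ps_quote(vm_name)
    loc = ps_quote(location_path)
    return [
        # Saved state is not supported with an assigned device, so turn the
        # VM off (rather than save it) when the host shuts down.
        f"Set-VM -Name {vm} -AutomaticStopAction TurnOff",
        # Detach the device from the host (management OS).
        f"Dismount-VMHostAssignableDevice -Force -LocationPath {loc}",
        # Give the VM direct, exclusive access to the device.
        f"Add-VMAssignableDevice -LocationPath {loc} -VMName {vm}",
    ]


if __name__ == "__main__":
    # Hypothetical VM name and location path, for illustration only.
    for line in dda_commands("GPU-VM01", "PCIROOT(0)#PCI(0300)#PCI(0000)"):
        print(line)
```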

Host Resource Protection

This is a security feature that protects a host and its other virtual machines from resource abuse by a single virtual machine. Imagine that a virtual machine goes awry or is compromised; in the latter case, the attacker will want to attack the hypervisor, either by probing for a vulnerability or by launching a denial-of-service (DoS) attack. Hyper-V can be configured to starve the virtual machine of resources when unusual behaviour starts, which limits the damage and gives you a chance to intervene.
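As far as I know, Host Resource Protection is switched on per virtual machine rather than host-wide. The Python sketch below builds the PowerShell line that toggles it, assuming the `-EnableHostResourceProtection` parameter of `Set-VMProcessor` as it appears in WS2016; treat the parameter name as something to confirm against your preview build. The VM names are hypothetical.

```python
from typing import Iterable


def ps_quote(value: str) -> str:
    """Wrap a value in PowerShell single quotes, doubling embedded quotes."""
    return "'" + value.replace("'", "''") + "'"


def host_resource_protection_command(vm_names: Iterable[str], enable: bool = True) -> str:
    """Build one Set-VMProcessor line that toggles Host Resource Protection.

    -VMName accepts an array, so several VMs can be changed in one call.
    """
    names = [ps_quote(n) for n in vm_names]
    if not names:
        raise ValueError("at least one VM name is required")
    flag = "$true" if enable else "$false"
    return f"Set-VMProcessor -VMName {','.join(names)} -EnableHostResourceProtection {flag}"


if __name__ == "__main__":
    # Hypothetical tenant VMs on a shared host.
    print(host_resource_protection_command(["tenant-a-web", "tenant-b-sql"]))
```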

Hot-Add/Remove of Virtual Memory and Network Adapters

Is that applause and cheering that I hear? This heavily requested feature is coming to Hyper-V: you can add and remove memory and virtual NICs to and from running virtual machines that have Windows 10 or WS2016 as the guest OS.
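A minimal Python sketch of the commands involved is shown below. It assumes the standard `Set-VMMemory`, `Add-VMNetworkAdapter`, and `Remove-VMNetworkAdapter` cmdlets, a virtual machine that uses static memory rather than Dynamic Memory, and (for NIC hot-add) a Generation 2 virtual machine; the VM, switch, and adapter names are hypothetical.

```python
def ps_quote(value: str) -> str:
    """Wrap a value in PowerShell single quotes, doubling embedded quotes."""
    return "'" + value.replace("'", "''") + "'"


GIB = 1024 ** 3  # bytes per GiB


def resize_memory_command(vm_name: str, gib: int) -> str:
    """Change the memory of a running VM that uses static (non-dynamic) memory."""
    return f"Set-VMMemory -VMName {ps_quote(vm_name)} -StartupBytes {gib * GIB}"


def add_nic_command(vm_name: str, switch_name: str, adapter_name: str) -> str:
    """Hot-add a virtual NIC connected to an existing virtual switch."""
    return (f"Add-VMNetworkAdapter -VMName {ps_quote(vm_name)} "
            f"-SwitchName {ps_quote(switch_name)} -Name {ps_quote(adapter_name)}")


def remove_nic_command(vm_name: str, adapter_name: str) -> str:
    """Hot-remove a virtual NIC by its adapter name."""
    return f"Remove-VMNetworkAdapter -VMName {ps_quote(vm_name)} -Name {ps_quote(adapter_name)}"


if __name__ == "__main__":
    print(resize_memory_command("web01", 4))
    print(add_nic_command("web01", "External", "Backup"))
    print(remove_nic_command("web01", "Backup"))
```

Computing the byte count in Python keeps the generated line free of PowerShell size literals such as `4GB`, which work just as well if you type the command by hand.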

Hyper-V Manager

The old tool is getting long in the tooth, but improvements are coming. You can launch Hyper-V Manager with alternate credentials, supporting the secure dual-identity approach that some companies enforce. You can also manage older versions of Hyper-V (WS2012, WS2012 R2, Windows 8.1, and Windows 8) from WS2016. Hyper-V Manager now uses WS-MAN (TCP 80) to communicate with remote hosts. One benefit is that you can connect to a remote host with CredSSP and perform a live migration without enabling messy Active Directory constrained delegation.

Integration Services via Windows Update

Another cheering moment: the Hyper-V integration components in Windows guest OSs will be updated via Windows Update (Linux uses different methods). As a result, VMGUEST.ISO is no longer required, so it is not included in the installation.

Linux Secure Boot

You can protect Linux guests from rootkits by enabling Secure Boot on Generation 2 virtual machines. You must use a compliant Linux distribution and configure the virtual machine to use the Microsoft UEFI Certificate Authority.
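Here is a small Python sketch of the configuration step, assuming the `Set-VMFirmware` cmdlet with its `-EnableSecureBoot` and `-SecureBootTemplate` parameters as found in WS2016. The VM name is hypothetical, and the virtual machine is assumed to be a Generation 2 VM that is turned off while its firmware settings change.

```python
def ps_quote(value: str) -> str:
    """Wrap a value in PowerShell single quotes, doubling embedded quotes."""
    return "'" + value.replace("'", "''") + "'"


def linux_secure_boot_command(vm_name: str) -> str:
    """Enable Secure Boot with the template that trusts the Microsoft UEFI CA.

    The default template (MicrosoftWindows) trusts Windows boot loaders only,
    so Linux guests need MicrosoftUEFICertificateAuthority instead.
    """
    return (f"Set-VMFirmware -VMName {ps_quote(vm_name)} -EnableSecureBoot On "
            "-SecureBootTemplate MicrosoftUEFICertificateAuthority")


if __name__ == "__main__":
    print(linux_secure_boot_command("centos01"))
```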

Nested Virtualization

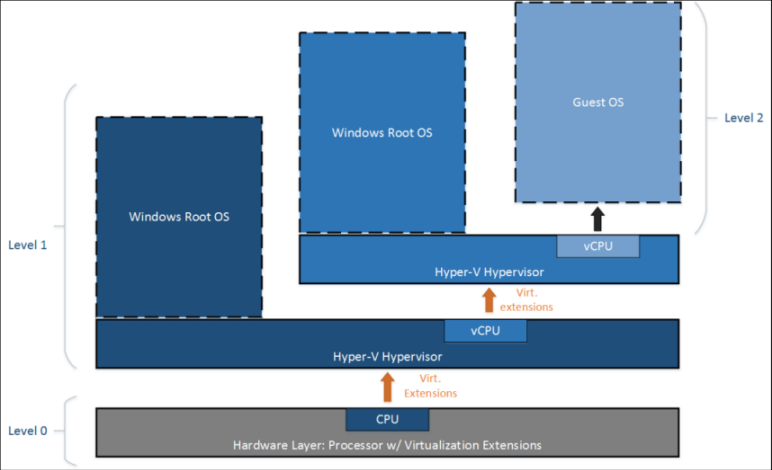

Here’s a very big cheering moment, because we finally get a feature we’ve been asking for since Windows Server 2008. Those with constrained hardware can finally run Hyper-V virtual machines inside Hyper-V virtual machines. There are even blog posts on how to get vSphere running inside Hyper-V!

You can even run Hyper-V virtual machines inside Hyper-V virtual machines, inside Hyper-V virtual machines, inside Hyper-V virtual machines, and so on. This is great for training classes and presenters (failover clustering and Live Migration on a single physical machine), but the real winner is Windows Server Containers: nested virtualization makes it possible to run the more secure Hyper-V Containers inside a virtual machine.

I can’t wait until the day that I can run Hyper-V inside an Azure virtual machine! The performance of nested Hyper-V should be pretty good, thanks to Microsoft’s microkernelized hypervisor architecture, as opposed to the alternative monolithic approach.
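Preparing a virtual machine to host its own virtual machines takes a few settings on the outer VM. The Python sketch below lists them as PowerShell lines, based on the requirements documented for WS2016 at release (the TP5 preview may differ): the VM must be off while the processor setting changes, Dynamic Memory must be disabled, and MAC address spoofing lets traffic from nested guests through. The VM name is hypothetical.

```python
from typing import List


def ps_quote(value: str) -> str:
    """Wrap a value in PowerShell single quotes, doubling embedded quotes."""
    return "'" + value.replace("'", "''") + "'"


def nested_virtualization_commands(vm_name: str) -> List[str]:
    """Build the PowerShell lines that let a VM run Hyper-V itself."""
    vm = ps_quote(vm_name)
    return [
        # Processor settings can only be changed while the VM is off.
        f"Stop-VM -Name {vm}",
        # Expose the CPU's virtualization extensions to the guest.
        f"Set-VMProcessor -VMName {vm} -ExposeVirtualizationExtensions $true",
        # Dynamic Memory is not supported on a VM that hosts nested VMs.
        f"Set-VMMemory -VMName {vm} -DynamicMemoryEnabled $false",
        # Allow frames from nested guests, which carry their own MAC addresses.
        f"Get-VMNetworkAdapter -VMName {vm} | Set-VMNetworkAdapter -MacAddressSpoofing On",
        f"Start-VM -Name {vm}",
    ]


if __name__ == "__main__":
    for line in nested_virtualization_commands("LabHost01"):
        print(line)
```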

Networking

This is a huge area that deserves its own article, so watch out for a post that’s coming soon.

Production Checkpoints

Checkpoints, which some of you still call Hyper-V snapshots, have been improved to the point where Microsoft can say “yes, use them with production workloads.” A production checkpoint uses Hyper-V’s backup infrastructure to create a checkpoint of a running virtual machine. When you restore the checkpoint, it’s as if the virtual machine is being restored from a backup. You can still use legacy standard checkpoints, but production checkpoints are on by default. This improvement is a recognition that:

- People were using checkpoints in production, even though it wasn’t recommended.

- The nature of systems management has changed, and self-service administrators are going to use checkpoints.
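The checkpoint type is a per-VM setting. This Python sketch builds the PowerShell lines for choosing a type and taking a checkpoint, assuming the `Set-VM -CheckpointType` parameter and the `Checkpoint-VM` cmdlet as they exist in WS2016; the four type names below are the values I know of for that release, and the VM and checkpoint names are hypothetical.

```python
def ps_quote(value: str) -> str:
    """Wrap a value in PowerShell single quotes, doubling embedded quotes."""
    return "'" + value.replace("'", "''") + "'"


# Production falls back to a standard checkpoint if a production checkpoint
# cannot be taken; ProductionOnly fails instead of falling back.
CHECKPOINT_TYPES = ("Disabled", "Production", "ProductionOnly", "Standard")


def checkpoint_type_command(vm_name: str, checkpoint_type: str = "Production") -> str:
    """Choose which kind of checkpoint a VM uses."""
    if checkpoint_type not in CHECKPOINT_TYPES:
        raise ValueError(f"unknown checkpoint type: {checkpoint_type}")
    return f"Set-VM -Name {ps_quote(vm_name)} -CheckpointType {checkpoint_type}"


def take_checkpoint_command(vm_name: str, checkpoint_name: str) -> str:
    """Create a checkpoint of a (possibly running) VM."""
    return f"Checkpoint-VM -Name {ps_quote(vm_name)} -SnapshotName {ps_quote(checkpoint_name)}"


if __name__ == "__main__":
    print(checkpoint_type_command("sql01", "ProductionOnly"))
    print(take_checkpoint_command("sql01", "Before patching"))
```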

Shielded Virtual Machines

This is actually a huge topic; Microsoft’s core hypervisor team did a lot of work to:

- Harden the hypervisor.

- Create a new model and architecture (Host Guardian Service) for creating trusted virtual machines with limited or no access for hypervisor administrators.

- Enable tenant-managed BitLocker with a virtual TPM chip.

Yes, you can use Hyper-V Replica to replicate these virtual machines to similarly secured hosts in the secondary location.

Virtual Machine Configuration

The XML files that were used in the past to describe virtual machine metadata are gone. WS2016 uses binary files that you cannot edit:

- .VMCX: The virtual machine configuration file.

- .VMRS: The virtual machine runtime state data file.

The benefits of this change are:

- Improved performance on larger and denser hosts.

- Reduced risk of data corruption caused by storage failure.

This change has proven to be controversial. I don’t get why; it was completely unsupported and unsafe to directly edit the XML files in the past.

Virtual Machine Configuration Version

Most of us did not know that virtual machines have always had versions, based on the version of Hyper-V that they were created on or are running on. In WS2016 you can upgrade the configuration version of a virtual machine, which is useful thanks to some of the improvements in failover clustering, such as Cluster OS Rolling Upgrade.
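A minimal Python sketch of the upgrade step follows. It assumes the `Get-VM` and `Update-VMVersion` cmdlets as they exist in WS2016; the VM names are hypothetical. The upgrade is one-way, and the virtual machines are assumed to be shut down before you run it.

```python
from typing import Iterable, List


def ps_quote(value: str) -> str:
    """Wrap a value in PowerShell single quotes, doubling embedded quotes."""
    return "'" + value.replace("'", "''") + "'"


def upgrade_version_commands(vm_names: Iterable[str]) -> List[str]:
    """Build lines that show, then upgrade, VM configuration versions."""
    names = [ps_quote(n) for n in vm_names]
    if not names:
        raise ValueError("at least one VM name is required")
    return [
        # List every VM with its current configuration version.
        "Get-VM | Select-Object Name, Version",
        # One-way upgrade; -Name accepts an array of VM names.
        f"Update-VMVersion -Name {','.join(names)} -Confirm:$false",
    ]


if __name__ == "__main__":
    for line in upgrade_version_commands(["web01", "web02"]):
        print(line)
```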

PowerShell Direct

I’m not a day-to-day administrator, but I use PowerShell a lot when doing presentations and demos of Hyper-V. I’ve done lots of PowerShell remoting, which can get quite complicated. Virtualization admins face the same scenario: they have lots of machines to work with, and they want PowerShell administration inside those machines to be easier.

PowerShell Direct allows you to do work inside a virtual machine, from the host, without any network or firewall access to the guest OS. For example, if a virtual machine loses its IP configuration, you can reconfigure the network stack using PowerShell Direct to get the machine back to an operational status.
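As a sketch of that recovery scenario, the Python helper below builds an `Invoke-Command -VMName` line, which is how PowerShell Direct is invoked in WS2016. It assumes you run the result on the host as a Hyper-V administrator, that the guest runs Windows 10 or WS2016, and that you have credentials for the guest. The interface alias and IP addresses in the example are hypothetical.

```python
def ps_quote(value: str) -> str:
    """Wrap a value in PowerShell single quotes, doubling embedded quotes."""
    return "'" + value.replace("'", "''") + "'"


def powershell_direct_command(vm_name: str, script: str) -> str:
    """Run a script block inside a guest via PowerShell Direct.

    -VMName (instead of -ComputerName) targets the VM from the host
    without going over the guest's network; guest credentials are prompted for.
    """
    return (f"Invoke-Command -VMName {ps_quote(vm_name)} -Credential (Get-Credential) "
            f"-ScriptBlock {{ {script} }}")


if __name__ == "__main__":
    # Hypothetical fix: give the guest's NIC back a static address and gateway.
    fix_ip = ("New-NetIPAddress -InterfaceAlias 'Ethernet' -IPAddress 192.168.1.50 "
              "-PrefixLength 24 -DefaultGateway 192.168.1.1")
    print(powershell_direct_command("web01", fix_ip))
```

For interactive troubleshooting, `Enter-PSSession -VMName` opens a session inside the guest in the same way.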