I have been working with the current release of Hyper-V since the first preview for Windows Server 2012 R2 was released. My job is unusual because I don’t work with production systems very often. Instead, I spend most of my time diving deep into technology features, experimenting, learning, and then evangelizing or teaching possible solutions to Microsoft partners.

In my line of work, I inevitably come across Hyper-V features and functionality that I would like to see improved (or added) to the next version of Hyper-V. I also get feedback from customers who are at the coalface every day. As a result, I’ve built up a list of things that I would like to see in the next version of Hyper-V. Some of these are Hyper-V features, some are Windows features that affect Hyper-V, and others are bolt-on solutions.

Is there anything not on this list that you’d like to see added or changed? If so, why don’t you post below?

Although it’s too late to affect the vNext release of Windows Server, we might be able to influence the release after that.

A New Hyper-V Management Console

We have been using two MMC utilities to manage Hyper-V since the release of Windows Server 2008. Hyper-V Manager is used to directly manage host configurations, and it is also used to manage virtual machines on non-clustered hosts. Failover Cluster Manager is used to manage virtual machines on clustered hosts and, obviously, to manage the host clusters themselves. I work with Hyper-V quite a bit so I understand the clear divide, but most people honestly just don’t get it, despite Microsoft making some changes in Windows Server 2012 that guide you to the correct place to work.

The official Microsoft answer for a central place to manage hosts is to use System Center. System Center Virtual Machine Manager (SCVMM) used to be Microsoft’s answer to vSphere’s vCenter, but Microsoft left virtualization management behind with the release of System Center 2012. SCVMM is now a bad name for the product, as the tool is more of a cloud fabric management solution in which virtualization is only a small piece of the puzzle. And to this day, despite the growth of SCVMM, those of us who like full control still return to Hyper-V Manager and Failover Cluster Manager to get our Hyper-V, clustering, and storage work done.

And there is one other issue with SCVMM: Since the licensing changes of System Center 2012, small to medium enterprises can no longer afford SCVMM. So these businesses that have between one and five hosts don’t have a good centralized management solution.

As a result, I have a request for Microsoft. Continue to develop SCVMM because the target market for that solution is public and private cloud deployment and infrastructure management. But for the other 90 percent of customers (that only an ivory tower resident thinks will move completely to the cloud), why not give them a new Hyper-V management solution that is made up of one console and one set of PowerShell cmdlets? Give us all the elements for setting up Hyper-V hosts and clusters under one roof.

I know that SMEs will appreciate a new central console that is a part of Windows Server. I believe that large hosting companies that go with Hyper-V but opt for OpenStack or other cloud solutions would like this. And to be honest, I think it would simplify development for Microsoft by enabling easier integration from Microsoft cloud-based services.

Storage Spaces Management

I am a huge fan of Storage Spaces and the Scale-Out File Server architecture. Storage Spaces is still relatively new in Windows Server 2012 R2, and some elements of the solution are still a little crude. The more difficult tasks include updating disk firmware, replacing faulty disks, and alerting.

Storage Spaces administration needs more work, and I do not want to hear the advice to “use System Center” because the person uttering that sentence clearly wants to sell some licensing. We need an administrative center for Storage Spaces, with the clear health and performance information that we would expect to see in a SAN administration utility. Functions in this console should also include the ability to manage disk firmware, which directly impacts performance and stability, and an option for sending warning and critical alerts to an email address.

The other elements that must change are the repair and recovery processes. There should be no human involvement in performing replacements or repairs other than extracting and replacing a disk. The idea of a Scale-Out File Server is that we can have many terabytes or even petabytes of storage. That’s lots of disks, and you can expect lots of failures when you scale out. Do we really want our low-paid and inexperienced ‘disk monkey’ to be running PowerShell cmdlets on a daily basis?

Virtual Machine Change Synchronization in Hyper-V Replica

One of the most popular features, and deservedly so, of Hyper-V is Hyper-V Replica (HVR), a built-in asynchronous replication system for virtual machines. Some key scenarios for HVR include:

- Large enterprise: Replicating hundreds or thousands of virtual machines to a dedicated disaster recovery (DR) site.

- Disaster Recovery-as-a-Service (DRaaS): A hosted solution that offers virtual DR in the cloud for SMEs that cannot otherwise afford DR.

There is a flaw in HVR. Say you configure a virtual machine, put it in production and start to replicate it. You monitor the virtual machine and see that you need to increase Maximum RAM. You do that in the production site but HVR does not replicate that change to the DR site. That’s not a big deal? Wrong. What if you have thousands of virtual machines in a private cloud, replicating away? I bet it’s a big deal to manually change those virtual machines. And I guarantee it would be a mess in the SME DRaaS scenario, either with it not being done (assumption is the mother of chaos) or it would create a hailstorm of hell-desk tickets.

I want Hyper-V Replica to synchronize virtual machine changes to the secondary site. This would resolve the above issues and bring HVR further along in terms of maturity.
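To make the request concrete, here is a minimal Python sketch of the kind of check I have in mind: compare the settings of the production virtual machine with those of its replica and push any differences across. The `VmSettings` class, its fields, and both functions are hypothetical names invented for this illustration; they are not part of Hyper-V or any Microsoft API.

```python
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class VmSettings:
    """Hypothetical subset of virtual machine settings (not a real Hyper-V type)."""
    name: str
    startup_ram_mb: int
    maximum_ram_mb: int
    vcpu_count: int


def settings_drift(primary: VmSettings, replica: VmSettings) -> dict:
    """Return {setting: (primary_value, replica_value)} for every mismatch."""
    p, r = asdict(primary), asdict(replica)
    return {key: (p[key], r[key]) for key in p if p[key] != r[key]}


def synchronize(primary: VmSettings, replica: VmSettings) -> VmSettings:
    """Return a copy of the replica settings with the primary's values applied."""
    changes = {key: values[0] for key, values in settings_drift(primary, replica).items()}
    return replace(replica, **changes)
```

In the Maximum RAM example above, `settings_drift` would report only `maximum_ram_mb`, and `synchronize` would bring the replica back in line without anyone raising a ticket.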

Hyper-V Replica Branch Cache

Two of the core scenarios when HVR was introduced were replicating branch office virtual machines to a central data center and introducing DR to SMEs. Both of these scenarios have a common obstacle to DR replication: bandwidth.

My idea is that a proxy would operate in front of the secondary site hosts and offer an optimization or deduplication service, much as the expensive Riverbed appliances do. Before any block of data is sent over the wire from the primary site, a check is done to see if that block already exists in the secondary site. If it does, then the block is simply copied within the secondary site and less data traverses the high-latency, bandwidth-limited network. Microsoft already has a solution that does this kind of work: BranchCache. Maybe there’s a way that BranchCache could work with Hyper-V Replica and save us a lot of bandwidth headaches?
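The check-before-send idea can be sketched in a few lines of Python. This is only an illustration of block-level deduplication under my own assumptions: the `SecondarySiteProxy` class, the 4 KB block size, and the use of SHA-256 digests are all invented for the example, and none of this reflects how BranchCache or Hyper-V Replica actually work internally.

```python
import hashlib
from typing import List, Tuple

BLOCK_SIZE = 4096  # illustrative block size (an assumption)


class SecondarySiteProxy:
    """Hypothetical proxy sitting in front of the secondary site hosts."""

    def __init__(self) -> None:
        self._blocks = {}  # digest -> block bytes already held at the secondary site

    def has_block(self, digest: str) -> bool:
        return digest in self._blocks

    def store_block(self, digest: str, block: bytes) -> None:
        self._blocks[digest] = block

    def get_block(self, digest: str) -> bytes:
        return self._blocks[digest]


def replicate(data: bytes, proxy: SecondarySiteProxy) -> Tuple[List[str], int]:
    """Replicate data block by block.

    Returns the ordered list of block digests and the number of block
    payload bytes that actually had to cross the WAN.
    """
    recipe, sent = [], 0
    for offset in range(0, len(data), BLOCK_SIZE):
        block = data[offset:offset + BLOCK_SIZE]
        digest = hashlib.sha256(block).hexdigest()
        if not proxy.has_block(digest):  # only unknown blocks are sent
            proxy.store_block(digest, block)
            sent += len(block)
        recipe.append(digest)
    return recipe, sent


def rebuild(recipe: List[str], proxy: SecondarySiteProxy) -> bytes:
    """Reassemble the data at the secondary site from blocks it already holds."""
    return b"".join(proxy.get_block(digest) for digest in recipe)
```

The digests still have to cross the wire, but they are tiny compared with the blocks they stand in for, and that is where the bandwidth saving would come from.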

Upgraded Shared VHDX

A feature that got a lot of nods of approval when introduced was Shared VHDX. We can use Shared VHDX to create guest clusters without crossing the boundary between the virtual machine and the fabric. Unfortunately, Microsoft didn’t have time to give us a complete Shared VHDX solution before the release of Windows Server 2012 R2. That means we cannot back up, replicate, or live migrate very valuable Shared VHDX files.

What I would like to see, in this order, is the ability to do the following with Shared VHDX:

- Back up from the host

- Replicate

- Perform Storage Live Migration

Hyper-V Clusters Rolling Upgrades

Since the days of Wolfpack, we haven’t had the ability to upgrade the members of a Windows Server cluster. This makes upgrading a Hyper-V cluster a slow, cumbersome, and possibly even expensive process.

Windows Server has changed development cycles substantially. It is believed that sprint development for Azure is driving the feature development of Windows Server. We have seen and will continue to see releases 12 to 18 months apart, instead of every three years. Sprint development might even lead to out-of-band releases in the future. That leads me to wonder: can we continue to perform lengthy or outage-inducing migration projects as frequently as Microsoft is releasing changes?

My biggest request for the failover cluster group is the ability to perform an in-place or rolling upgrade of a Hyper-V cluster. This might be possible by duplicating Active Directory’s approach to versioning: limit Version A+1 hosts to the functionality of Version A until all hosts are running A+1, and then require a sign-off to complete the upgrade by switching the version. This would help small and large customers alike to upgrade their hosts and avail of on-premises and hybrid cloud solutions as quickly as possible, and that’s a win-win for customers and Microsoft.
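Here is a small Python sketch of that versioning idea. It is purely a model of the behavior I am asking for: the `Cluster` class and its methods are hypothetical names for this illustration, not a real failover clustering API.

```python
class Cluster:
    """Hypothetical model of a cluster that runs at a shared functional level."""

    def __init__(self, host_versions):
        # host name -> installed OS version (plain integers for simplicity)
        self.host_versions = dict(host_versions)
        # The cluster only exposes features of the oldest host.
        self.functional_level = min(self.host_versions.values())

    def upgrade_host(self, host, new_version):
        """Upgrade one host; the cluster keeps running at the old level."""
        if new_version < self.functional_level:
            raise ValueError("host cannot run below the cluster functional level")
        self.host_versions[host] = new_version

    def can_raise_level(self, target):
        """True only when every host is running at least the target version."""
        return all(version >= target for version in self.host_versions.values())

    def commit_upgrade(self, target):
        """The explicit sign-off step that switches the cluster version."""
        if not self.can_raise_level(target):
            raise RuntimeError("not all hosts are running the target version")
        self.functional_level = target
```

The important design point is that upgrading a host and raising the functional level are separate steps, so virtual machines can keep moving between old and new hosts until the administrator signs off.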

vRSS for Management OS vNICs

We can use virtual NICs in the management OS to converge networks or fabrics. For example, a host with 2 x 10 GbE or faster NICs would team those NICs and split the bandwidth into different networks.

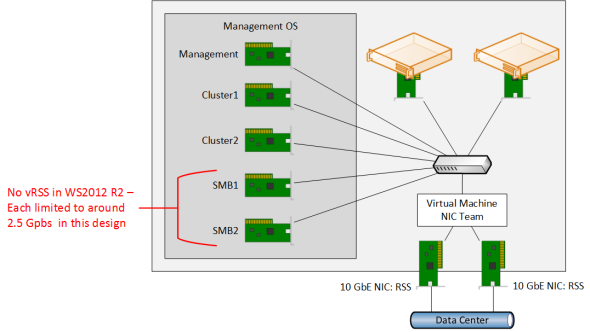

A commonly employed design sees hosts having just two physical NICs and all traffic, including SMB 3.0, traversing the converged network. There’s one problem with this: we have seen that management OS virtual NICs can only reach around 2.5 Gbps over 20 Gbps of physical networking. This is because the virtual NICs do not support vRSS.

The lack of vRSS in the management OS constricts bandwidth utilization

(Image: Aidan Finn)

Microsoft added vRSS to Windows Server 2012 R2 Hyper-V, but it is limited to virtual machines. Because of this, Microsoft has suggested having four, six, or even more virtual NICs in the management OS for SMB 3.0 traffic. This really complicates the design. I would like to see vRSS enabled in the management OS so we can go back to a simpler design.
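A quick back-of-the-envelope calculation shows why the number of virtual NICs climbs so quickly. This Python snippet assumes that the roughly 2.5 Gbps ceiling applies to each management OS virtual NIC and that throughput scales linearly as virtual NICs are added; both are simplifying assumptions of mine, and `vnics_needed` is just an illustrative helper.

```python
import math


def vnics_needed(physical_gbps: float, per_vnic_gbps: float) -> int:
    """Virtual NICs required to fill the physical bandwidth, assuming linear scaling."""
    return math.ceil(physical_gbps / per_vnic_gbps)


# 20 Gbps of teamed NICs at about 2.5 Gbps per virtual NIC
print(vnics_needed(20, 2.5))  # 20 / 2.5 = 8
```

Under those assumptions you would need eight virtual NICs just for one traffic type to use the full team, which is exactly the complexity I would like to avoid.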

RDMA for Virtual NICs

If we’re going to push huge amounts of traffic through virtual NICs in the management OS or in virtual machines, then it would be good to have virtual RDMA (vRDMA). Not only would it reduce latency, it would also reduce the impact on host processors.

Integration Component Upgrade Enhancements

Every version of Hyper-V comes with a new version of the Hyper-V integration components (ICs). Microsoft expects you to upgrade these components in your virtual machines’ guest OS to improve stability and performance, and to gain access to new Hyper-V features.

If only the Hyper-V ICs were released just once every 12 to 18 months, although even that would be a pain! From time to time, an update to Hyper-V upgrades the host’s copy of the integration components. As a result, we have to perform yet another upgrade.

In an ideal world, there would be no guest reboot for a new version of the Hyper-V ICs. However, Microsoft has been promising us fewer reboots since I first started working with Windows NT 3.51, without any improvement.

What I want is a better installation process. Maybe the little-known out-of-band file copy feature could be leveraged to perform a copy and install of the MSI file. This would be another step toward improving the upgrade process of Hyper-V, especially in this era of frequent upgrades. The gain here is less time spent on upgrade projects and hopefully less impact on customer uptime.

Azure Site Recovery without a VMM Requirement

When I presented on HVR in the past, one of the guaranteed questions from the SME market was, “Will Microsoft give us the ability to replicate to Azure?” Microsoft partners and customers alike wanted a cheap way to implement disaster recovery. Eventually Hyper-V Recovery Manager (HRM) was released as an orchestration solution between production and DR clouds, both managed by SCVMM. The requirement of SCVMM made HRM a solution just for medium to large businesses. Unfortunately, 90 percent of companies out there were left in the cold.

Microsoft then upgraded the Azure-based HRM to become Azure Site Recovery. The orchestration functionality was joined by the ability to replicate virtual machines into Azure – Microsoft was selling DRaaS, exactly what the SME market wanted. Or was it? The answer is: no, and that’s because ASR still requires you to run SCVMM in the production site to replicate into Azure.

There is a huge customer base out there that would love to replicate their workloads into Azure, but they can’t. Microsoft also tells hosting companies to sell DRaaS based on their own public clouds using ASR, but the same requirements and market size limitations exist. If Microsoft removed the SCVMM requirement from ASR, then I think ASR would become the hot on-ramp into the hybrid cloud that Microsoft strategists dream of.

What do you want in a future version of Hyper-V?

Leave a comment below to tell us what you think of my ideas. Do you agree or disagree? Is there something else you want added or changed in Hyper-V?