Over the past few weeks, we have discussed using physical disks for Hyper-V storage and virtual hard disks with virtual machines. In this post, we will look at what to consider when configuring a virtual machine’s storage.

Virtual Machine Storage Hardware

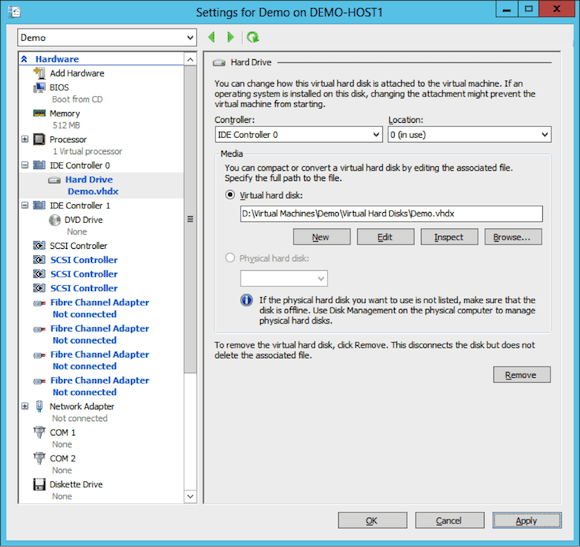

We attach disks (physical or virtual) to a virtual machine using virtual storage controller devices. There are three kinds in the hardware settings of the virtual machine:

- IDE: two controllers by default, each with two locations, allowing up to four devices in total, including the virtual DVD drive.

- SCSI: one controller by default. A virtual machine can have four SCSI controllers, each with 64 locations (or virtual hard disks).

- Fiber Channel Adapter: up to four virtual fiber channel adapters, each with unique World Wide Names (WWNs) that identify it (using NPIV-enabled host bus adapters) on a fiber channel SAN and let it connect to appropriately zoned LUNs.
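The limits in the list above are easy to turn into arithmetic. The following is a minimal Python sketch that only models the numbers quoted in this post; the `ControllerType` class and its field names are made up for illustration and are not part of any Hyper-V API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ControllerType:
    """Hypothetical model of a virtual storage controller type."""
    name: str
    max_controllers: int
    locations_per_controller: int

    @property
    def max_devices(self) -> int:
        # Total attachable devices = controllers x locations on each one.
        return self.max_controllers * self.locations_per_controller


# Figures taken from the list above.
IDE = ControllerType("IDE", max_controllers=2, locations_per_controller=2)
SCSI = ControllerType("SCSI", max_controllers=4, locations_per_controller=64)

if __name__ == "__main__":
    for controller in (IDE, SCSI):
        print(f"{controller.name}: up to {controller.max_devices} devices")
```

Remember that the default virtual DVD drive takes one of the IDE locations, so a virtual machine that keeps it has three IDE locations left for disks.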

IDE Controllers

Those who are new to Hyper-V, or those who work in marketing for Microsoft competitors, often focus on the presence of IDE controllers in a Hyper-V virtual machine. It makes no difference that they are “IDE” controllers. In fact, they are not IDE controllers at all; they are software. Senior Hyper-V Program Manager Ben Armstrong explained on his blog why it does not matter that Hyper-V virtual machines use IDE controllers.

The IDE controller is normally used for two things:

- A Hyper-V virtual machine’s boot volume must be on Location 0 on IDE Controller 0.

- Virtual DVD drives can only be added to an IDE controller. There is one by default on Location 0 of IDE Controller 1. Do not remove this device, as it is used to mount ISO images such as SQL Server or Hyper-V Integration Services updates (via the Hyper-V Manager console).

As with the SCSI controller, the IDE controller can connect to pass-through disks or virtual hard disks.

Usually, the IDE controllers are not used for anything else. IDE controllers do not support hot add/remove of virtual hard disks. Some organizations choose to place the paging file of the virtual machine’s guest OS into a dedicated virtual hard disk. Attaching this paging file virtual hard disk to an IDE controller will guarantee that it cannot be accidentally removed while the virtual machine is running. The virtual hard disk cannot be hot-unplugged and the virtual hard disk file is locked.

SCSI Controllers

The SCSI controllers of a VM support hot add/remove of virtual hard disks. This makes them very flexible. The SCSI controllers, like the IDE controllers, are software, and have no reliance on the presence of actual SCSI controllers in the host.

VHDX files that are attached to SCSI controllers also support TRIM on Windows Server 2012; this can reduce wasted space if the virtual hard disks are placed on TRIM-supporting storage.

Fiber Channel Adapters

Unlike IDE and SCSI, virtual fiber channel adapters can only connect to physical disks, which must be zoned to the WWNs of the virtual machine. This means that you lose the flexibility of virtual storage, but it does offer other benefits such as shared storage and disks that scale beyond 64 TB.

iSCSI Disks

There are no dedicated virtual iSCSI adapters in Windows Server 2012 Hyper-V, which means a Hyper-V virtual machine cannot boot from an iSCSI disk. However, a virtual machine can use ordinary virtual network adapters that are bound to the VLANs of the SAN to connect to iSCSI disks. This offers the same benefits as Fiber Channel Adapters.

SMB 3.0 Storage

Windows Server 2012 supports using SMB 3.0 for storing application data, including SQL Server 2008 R2 (and later) and IIS 8.0. Maybe you don’t need to allocate storage to a virtual machine? Maybe you can configure the guest OS to use a shared folder on a Windows Server 2012 file server or Scale-Out File Server (for scalable and continuously available shares). This will simplify the storage of the virtual machine, but it does require more virtual network adapters to be used. Note that while you can have SMB Multichannel over multiple virtual network adapters, you won’t have SMB Direct (RDMA) functionality in the virtual machine.

Virtual Machine Storage Scenarios

Now we understand the various ways we can attach storage to a virtual machine, but how will we plan the attachment of that storage? Let’s take a look at some of the ways.

Virtual Hard Disks vs. Physical Hard Disks: The primary reason that businesses like virtualization is that it enables self-service and creates flexibility. Physical disks prevent both of these benefits. Therefore you should assume that virtual hard disks are the default unless otherwise specified.

Basic Virtual Machines

As with all Windows Server 2012 virtual machines, the OS will be in a disk attached to Location 0 of IDE Controller 0. In all but the rarest scenarios, this will be a virtual hard disk. This offers the possibility of using templates that are deployed from a library and the use of differencing virtual hard disks for labs and pooled VDI.

Any time a volume is required for an application or data, a new virtual hard disk is attached to a SCSI controller. The best practice is to have one volume per virtual hard disk. This is flexible because it allows easy resizing of volumes (you should resize the virtual hard disk first, and then resize the volume).
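To make that layout concrete, here is a rough Python sketch of the placement rules described in this post: OS disk on Location 0 of IDE Controller 0, the default DVD drive on Location 0 of IDE Controller 1, and one virtual hard disk per data volume on SCSI. The `Attachment` type and `plan_basic_vm` function are hypothetical names for illustration only, and filling one SCSI controller before moving to the next is an assumption, not a Hyper-V rule.

```python
from typing import List, NamedTuple

SCSI_MAX_CONTROLLERS = 4   # per virtual machine
SCSI_LOCATIONS = 64        # per SCSI controller


class Attachment(NamedTuple):
    """Hypothetical record of where a device is attached."""
    controller_type: str
    controller: int
    location: int
    purpose: str


def plan_basic_vm(data_volumes: List[str]) -> List[Attachment]:
    """Return an attachment plan with one virtual hard disk per data volume."""
    if len(data_volumes) > SCSI_MAX_CONTROLLERS * SCSI_LOCATIONS:
        raise ValueError("more data volumes than available SCSI locations")

    plan = [
        Attachment("IDE", 0, 0, "guest OS virtual hard disk"),
        Attachment("IDE", 1, 0, "virtual DVD drive"),
    ]
    for index, volume in enumerate(data_volumes):
        # ASSUMPTION: fill SCSI controller 0 first, then 1, and so on.
        controller, location = divmod(index, SCSI_LOCATIONS)
        plan.append(Attachment("SCSI", controller, location, volume))
    return plan


if __name__ == "__main__":
    for attachment in plan_basic_vm(["SQL data", "SQL logs"]):
        print(attachment)
```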

Extreme Throughput Virtual Machine

Each virtual machine gets one storage channel per SCSI controller and per 16 virtual processors. That means if you want more storage throughput:

- Balance virtual hard disks across SCSI controllers in the virtual machine

- Add more virtual processors to the virtual machine

Note: Do not over-allocate virtual processors to virtual machines. Logical processors on the host are occupied by a virtual machine that is active, even if there is no work being allocated to the virtual processors by the guest OS. This is an extreme scenario.
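The two levers above can be sketched in a few lines of Python. Treat this as an illustration, not a sizing tool: `balance_disks` is a simple round-robin spread, and `estimated_channels` encodes just one reading of the rule (each SCSI controller gets one channel per started block of 16 virtual processors). Both function names and that formula are assumptions made for this example.

```python
import math
from typing import Dict, List

SCSI_MAX_CONTROLLERS = 4


def balance_disks(disks: List[str], controllers: int) -> Dict[int, List[str]]:
    """Spread virtual hard disks round-robin across SCSI controllers."""
    if not 1 <= controllers <= SCSI_MAX_CONTROLLERS:
        raise ValueError("a virtual machine has between 1 and 4 SCSI controllers")
    layout: Dict[int, List[str]] = {i: [] for i in range(controllers)}
    for index, disk in enumerate(disks):
        layout[index % controllers].append(disk)
    return layout


def estimated_channels(controllers: int, virtual_processors: int) -> int:
    """Estimate storage channels for a virtual machine.

    ASSUMPTION: one channel per SCSI controller for every started block
    of 16 virtual processors. This is an interpretation of the rule in
    the text, not a documented formula.
    """
    if controllers < 1 or virtual_processors < 1:
        raise ValueError("need at least one controller and one virtual processor")
    return controllers * math.ceil(virtual_processors / 16)


if __name__ == "__main__":
    print(balance_disks(["data1", "data2", "logs1", "logs2", "tempdb"], 2))
    print(estimated_channels(controllers=2, virtual_processors=32))
```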

Guest Cluster Virtual Machine

You can create clusters using virtual machines. Windows Server 2012 supports guest clusters with up to 64 virtual machines. Any shared storage that the guest cluster requires can be provided by the following:

- File Shares: SMB 3.0 shared folders if your clustered service supports it.

- Fiber Channel: Physical disks connected by Fiber Channel Adapters.

- iSCSI: Physical disks connected by an iSCSI initiator in the guest OS of the virtual machines.

You cannot use shared virtual hard disks in Windows Server 2012 Hyper-V. That means you must either use SMB 3.0 storage or physical disks on a SAN (or simulator) to create a guest cluster.

Hot Expandable Storage

While we can hot add and remove disks to a SCSI controller in Windows Server 2012 Hyper-V, we cannot hot-resize a virtual hard disk. This is a concern for a very small audience. Most administrators will size their virtual machines in advance and size virtual hard disks accordingly. Those who are concerned with the cost of idle storage space will consider using Dynamically Expanding virtual hard disks for application partitions that are attached to a SCSI controller.

On the other hand, there are those who want complete control, clinging to server administration techniques of the past. They must opt for physical disks, such as a passthrough disk or SAN disk, or an SMB 3.0 share on a physical disk. A physical LUN can be resized as required using a storage administration tool without interference to the virtual machine.

Volumes Greater than 64 TB

VHDX is the default virtual hard disk format, scaling up to 64 TB. In the very rare situation where a business requires volumes greater than 64 TB, you will need to use physical disks or SMB 3.0 file shares (that are stored on physical disks).

Note: Please don’t try to be clever by using Storage Spaces to aggregate 2040 GB VHD files to create a larger volume in a WS2012 virtual machine. Using Storage Spaces in the guest OS of a virtual machine is not supported.
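As a quick sanity check on those size limits, here is a small Python sketch that maps a required volume size to the storage options discussed in this section. The function name and return strings are invented for the example, and it assumes 1 TB = 1024 GB.

```python
VHD_MAX_GB = 2040        # maximum size of a VHD file
VHDX_MAX_TB = 64         # maximum size of a VHDX file
GB_PER_TB = 1024         # ASSUMPTION: binary units


def storage_options(volume_size_gb: float) -> str:
    """Suggest storage for a single volume of the given size."""
    if volume_size_gb <= 0:
        raise ValueError("volume size must be positive")
    if volume_size_gb <= VHD_MAX_GB:
        return "VHD or VHDX"
    if volume_size_gb <= VHDX_MAX_TB * GB_PER_TB:
        return "VHDX"
    # Beyond 64 TB a single virtual hard disk is not an option.
    return "physical disk or SMB 3.0 share"


if __name__ == "__main__":
    for size_gb in (500, 10 * GB_PER_TB, 100 * GB_PER_TB):
        print(size_gb, "GB ->", storage_options(size_gb))
```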

Live Migration and Physical Disks

There are several scenarios to consider:

- Pass-through Disks: You can only perform Live Migration with a virtual machine that uses pass-through disks if the virtual machine is highly available (HA) on a Hyper-V cluster and the pass-through disk’s LUN is managed by the cluster. Other forms of Live Migration are not possible.

- Fiber Channel Adapter: You can use Live Migration of HA and non-HA virtual machines that are using virtual fiber channel adapters. The LUNs must be zoned for the A and B WWNs of each adapter, and the source and destination hosts must be configured with the same virtual SANs.

- iSCSI: This type of physical disk is completely abstracted because it is an IP connection that is initiated from within a virtual machine. The only limitation on Live Migration is that you must ensure that the guest OS will be able to communicate with the SAN on the destination host, otherwise the physical disk(s) will go offline.

- SMB 3.0: The same guidance applies to SMB 3.0 storage as with iSCSI disks.
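The scenarios above boil down to a small decision table. The Python sketch below captures them as a checklist; the `Storage` enum, the `live_migration_ok` function, and all the flag names are hypothetical, and the function only mirrors the conditions listed in this post rather than querying a real host.

```python
from enum import Enum


class Storage(Enum):
    PASS_THROUGH = "pass-through disk"
    VIRTUAL_FC = "virtual fiber channel adapter"
    ISCSI = "iSCSI initiator in the guest OS"
    SMB3 = "SMB 3.0 share"


def live_migration_ok(
    storage: Storage,
    *,
    vm_is_ha: bool = False,
    lun_in_cluster: bool = False,
    zoned_for_both_wwns: bool = False,
    same_virtual_sans: bool = False,
    guest_reaches_storage: bool = False,
) -> bool:
    """Return True if the conditions described in the text are met."""
    if storage is Storage.PASS_THROUGH:
        # Only HA virtual machines whose LUN is managed by the cluster.
        return vm_is_ha and lun_in_cluster
    if storage is Storage.VIRTUAL_FC:
        # HA or non-HA, but zoning and virtual SANs must line up.
        return zoned_for_both_wwns and same_virtual_sans
    # iSCSI and SMB 3.0: the guest must still reach the storage
    # from the destination host, or the disks go offline.
    return guest_reaches_storage


if __name__ == "__main__":
    print(live_migration_ok(Storage.PASS_THROUGH, vm_is_ha=True, lun_in_cluster=True))
    print(live_migration_ok(Storage.ISCSI, guest_reaches_storage=False))
```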

Backup

One of the benefits of Hyper-V is that you can install an agent on hosts and back up (Windows) virtual machines with application consistency using the Hyper-V VSS Writer. This gives the same sort of result (backing up lots of virtual machines with a single job) that a SAN snapshot might, but with greater orchestration and consistency. Note that most business-ready SANs offer a hardware VSS provider to integrate SAN snapshots with the Hyper-V host backup process (this might require additional licensing, depending on the OEM).

Use of physical disks requires that you install backup agents in a virtual machine’s guest OS to back up the physical volumes with application consistency.

Hyper-V Replica

Microsoft built Hyper-V Replica into Windows Server 2012 Hyper-V to offer free, asynchronous, change-only, and compressed disaster recovery (DR) replication of virtual machines to a secondary site via HTTP/Kerberos or HTTPS/SSL communications/authentication. Hyper-V Replica will replicate virtual hard disks. Virtual machines with physical disks need not apply; those virtual machines will require alternative (and expensive) storage-based replication systems.

Here are a couple of bandwidth-saving tips from Microsoft:

- Place the guest OS paging file in a dedicated virtual hard disk (maybe on Location 1 of IDE Controller 0, because Location 0 holds the boot disk).

- Opt not to replicate the paging file virtual hard disk.

This will eliminate bandwidth consumption by constantly changing paging files without compromising the replica VM. The guest OS of the replica VM will complain about the missing paging file during failover, but it will create a temporary paging file and get on with things.
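The selection logic behind those tips is simple enough to sketch in Python. This is only an illustration of the rule "replicate virtual hard disks, skip the paging file disk"; the `Disk` class, its fields, and the file names are all made up for the example and do not correspond to any Hyper-V Replica API.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Disk:
    """Hypothetical description of a disk attached to a virtual machine."""
    name: str
    is_virtual: bool                 # False for pass-through or SAN disks
    holds_paging_file: bool = False  # True for the dedicated paging file disk


def disks_to_replicate(disks: List[Disk]) -> List[str]:
    """Return the names of the disks worth replicating.

    Physical disks cannot be replicated by Hyper-V Replica, and the
    paging file disk is excluded to save bandwidth.
    """
    return [d.name for d in disks if d.is_virtual and not d.holds_paging_file]


if __name__ == "__main__":
    vm_disks = [
        Disk("os.vhdx", is_virtual=True),
        Disk("paging.vhdx", is_virtual=True, holds_paging_file=True),
        Disk("data.vhdx", is_virtual=True),
        Disk("SAN LUN 7", is_virtual=False),
    ]
    print(disks_to_replicate(vm_disks))
```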