With many admins evaluating Hyper-V or encountering failover clustering for the first time, there appears to be a lot of confusion about what this Windows Server feature is used to accomplish. In this article I will explain what failover clustering is and what role it plays in enabling high availability (HA) in Hyper-V deployments.

Failover Clustering and High Availability (HA)

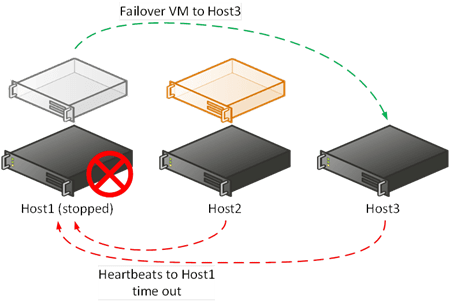

The purpose of failover clustering is to provide high availability (HA): the ability of a server infrastructure to respond automatically to machine failures. For example, say you have a virtual machine running on Host1, which is one of several nodes (members) of a failover cluster. Host1 suffers a sudden and catastrophic failure and stops operating. Every second, each node in the cluster sends a heartbeat to every other node to confirm that it is still operational, and a node that fails to respond to a series of consecutive heartbeats is treated as failed. Because Host1 no longer answers these heartbeats, the other nodes detect its failure within a few seconds.
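To make the detection logic concrete, here is a minimal simulation of threshold-based heartbeat monitoring. It is a sketch, not the cluster service's implementation: it assumes a 1-second heartbeat interval and that a node is declared failed after 5 consecutive missed heartbeats. In a real cluster these values are tunable (for example through the SameSubnetDelay and SameSubnetThreshold cluster properties) and their defaults vary by Windows Server version. The function, class, and parameter names are hypothetical.

```python
from dataclasses import dataclass

# Assumed values for illustration only; real clusters expose tunable
# settings whose defaults depend on the Windows Server version.
HEARTBEAT_INTERVAL_S = 1.0   # one heartbeat per second
MISSED_THRESHOLD = 5         # consecutive misses before a node is declared down


@dataclass
class PeerState:
    name: str
    consecutive_misses: int = 0
    failed: bool = False


def process_heartbeat_round(peers, responders):
    """Run one heartbeat round; return the names of peers newly declared failed.

    peers: dict of name -> PeerState tracked by this node (hypothetical structure)
    responders: set of peer names that answered this round's heartbeat
    """
    newly_failed = []
    for name, state in peers.items():
        if state.failed:
            continue
        if name in responders:
            state.consecutive_misses = 0  # any reply resets the counter
        else:
            state.consecutive_misses += 1
            if state.consecutive_misses >= MISSED_THRESHOLD:
                state.failed = True
                newly_failed.append(name)
    return newly_failed


def seconds_to_detect(peer_name, alive_for_rounds, total_rounds=20):
    """Simulate a peer that stops answering after `alive_for_rounds` rounds and
    return how many seconds after it went silent the failure was detected."""
    peers = {peer_name: PeerState(peer_name)}
    for rnd in range(total_rounds):
        responders = {peer_name} if rnd < alive_for_rounds else set()
        if peer_name in process_heartbeat_round(peers, responders):
            missed_rounds = rnd - alive_for_rounds + 1
            return missed_rounds * HEARTBEAT_INTERVAL_S
    return None


print(seconds_to_detect("Host1", alive_for_rounds=3))  # 5.0 under these assumptions
```

Requiring several consecutive misses, rather than reacting to a single lost heartbeat, keeps a momentary network blip from triggering an unnecessary failover.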

No manual intervention is required: the cluster automatically fails over (moves and starts) the resources that were running on Host1. For example, the virtual machine that was running on Host1 might be relocated to Host3 and started there.

Host1 fails, the heartbeats time out, and a VM is failed over to Host3.
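The cluster decides where each failed-over virtual machine lands. Its actual placement logic is internal to the cluster service and can be influenced by settings such as preferred owners; the sketch below substitutes a deliberately simple, hypothetical policy: place each VM, largest memory first, on the surviving node with the most free memory that can still fit it.

```python
def plan_failover(failed_vms, free_memory_gb):
    """Hypothetical greedy placement of the VMs from a failed host.

    failed_vms: dict of VM name -> memory required (GB)
    free_memory_gb: dict of surviving node name -> free memory (GB)
    Returns (placements, unplaced): a VM -> node mapping and the VMs that did not fit.
    """
    free = dict(free_memory_gb)  # copy so the caller's data is untouched
    placements, unplaced = {}, []
    # Place the largest VMs first so they are less likely to be stranded.
    for vm, need in sorted(failed_vms.items(), key=lambda kv: kv[1], reverse=True):
        candidates = [node for node, avail in free.items() if avail >= need]
        if not candidates:
            unplaced.append(vm)  # not enough spare capacity on any survivor
            continue
        target = max(candidates, key=lambda node: free[node])
        placements[vm] = target
        free[target] -= need
    return placements, unplaced


plan, stranded = plan_failover({"VM1": 8, "VM2": 4}, {"Host2": 6, "Host3": 16})
print(plan, stranded)  # {'VM1': 'Host3', 'VM2': 'Host3'} []
```

The `unplaced` list shows why clusters are sized with spare capacity: if the surviving nodes cannot absorb the failed host's load, some virtual machines stay offline.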

Failover clustering gives Hyper-V virtual machines HA with the following traits:

- Automated failover: The cluster detects host failure and automatically relocates and starts the virtual machines that were running on that host at the time of failure.

- Brief downtime: Virtual machines that were running on the failed host stop running because the host is no longer operational. The cluster detects this failure within a few seconds (the exact time depends on the version of Windows Server and any customizations) and reacts. Downtime is limited to the time this detection takes, any failover ordering that you implement, and the time it takes to boot the guest OS and start the services it contains. This is probably less than a minute, which is pretty incredible considering that a host just stopped.

- Reactive action: HA is not a planned operation; it is a contingency action for when a host fails.
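The downtime described in the "Brief downtime" trait is roughly additive: detection time, plus any delay from failover ordering, plus guest boot and service start time. Here is a back-of-the-envelope sketch; the figures in the example call are placeholders, not measurements, and the function name is hypothetical.

```python
def estimated_failover_downtime(heartbeat_interval_s, missed_threshold,
                                ordering_delay_s, boot_and_start_s):
    """Rough downtime estimate for a VM after an unplanned host failure.

    All inputs are caller-supplied assumptions; the model simply adds
    detection time, any failover-ordering delay, and guest boot/service start.
    """
    detection_s = heartbeat_interval_s * missed_threshold
    return detection_s + ordering_delay_s + boot_and_start_s


# Placeholder figures: 1 s heartbeats, 5 misses, no ordering delay, 40 s boot.
print(estimated_failover_downtime(1.0, 5, 0.0, 40.0))  # 45.0
```

In most cases the guest boot time dominates, so faster detection alone only shaves a few seconds off the total.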

High Availability Is Not Live Migration

Many IT pros, including those who have been using Hyper-V, confuse live migration and HA. They are two different technologies that serve two different purposes. HA is all about minimizing the downtime caused by failure. Live migration enables service mobility and flexibility with no perceivable service downtime:

- Manual (by default): Live migration is initiated by an administrator to move virtual machines from one host/cluster to another host/cluster. However, System Center can automate live migration to provide dynamic optimization (like vSphere DRS) or power optimization.

- No perceivable downtime: As with vMotion, there is a brief moment of downtime while a virtual machine’s state is copied to and started on the destination host, but it is so short that it falls within the TCP timeout window, so perceived service availability is not affected (ICMP tools such as ping will probably notice). That means moving virtual machines via live migration has no impact on 99.999 percent of services.

- Proactive action: HA responds to failure; live migration pre-empts problems. For example, System Center can load balance virtual machines, finding a better host for a resource-hungry virtual machine before the user experience is impacted. An administrator can also drain a host of virtual machines if a hardware issue raises a warning in the monitoring system, allowing maintenance to be performed without impacting services.
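Why can TCP clients miss a brief blackout that ping catches? Here is a simplified model: a TCP sender that gets no acknowledgment retransmits with exponential backoff, so the connection survives as long as some retransmission is still sent after the blackout ends, whereas every echo request sent during the blackout is simply lost. The initial retransmission timeout, retry count, and ping interval are assumptions, not Windows defaults, and the function names are hypothetical.

```python
def tcp_survives(blackout_s, initial_rto_s=1.0, max_retransmissions=5):
    """Simplified model, assuming the blackout begins at t=0 just as a segment
    is sent. Retransmission k is sent at the cumulative sum of exponentially
    doubling timeouts; the connection survives if at least one retransmission
    is sent after the blackout has ended."""
    elapsed = 0.0
    rto = initial_rto_s
    for _ in range(max_retransmissions):
        elapsed += rto          # wait for the current timeout to expire
        if elapsed > blackout_s:
            return True         # this retransmission reaches the destination
        rto *= 2                # exponential backoff
    return False


def pings_lost(blackout_start_s, blackout_s, interval_s=1.0, duration_s=10.0):
    """Count echo requests (one every interval_s seconds) sent during the blackout."""
    lost = 0
    t = 0.0
    while t < duration_s:
        if blackout_start_s <= t < blackout_start_s + blackout_s:
            lost += 1
        t += interval_s
    return lost


print(tcp_survives(0.5), pings_lost(2.8, 0.5))  # True 1
print(tcp_survives(60.0))                       # False
```

Whether a short blackout costs a ping depends on timing: the same 0.5-second gap starting at 2.2 s would fall between pings and lose none.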

In Windows Server 2008 R2, live migration was limited to hosts within the same failover cluster. Since Windows Server 2012, however, live migration neither requires a cluster nor is limited to the scope of one.

Do You Really Need a Hyper-V Cluster?

Everyone wants to maximize uptime. But even non-clustered (standalone) hosts are very reliable (I’ve run hosts for years with only scheduled patching windows), and failover clustering comes with costs: additional host capacity, supported shared storage such as a SAN, and additional networking. Are those costs worth it if VMs on non-clustered hosts already have high availability, and you can still use live migration without failover clustering? Failover clustering, in other words, is not for everyone.
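One way to quantify the "additional host capacity" cost: if a cluster of N identical hosts must be able to lose one host and still run every VM, the surviving N - 1 hosts must absorb the whole load, so at most (N - 1)/N of the total capacity can be committed. This sketch assumes identical hosts and a design that tolerates exactly one failure (N+1), a common but not universal sizing rule; the function name is hypothetical.

```python
from fractions import Fraction


def max_usable_fraction(hosts, tolerated_failures=1):
    """Fraction of total cluster capacity usable while still surviving
    `tolerated_failures` host losses, assuming identical hosts."""
    if hosts <= tolerated_failures:
        raise ValueError("cluster must have more hosts than tolerated failures")
    return Fraction(hosts - tolerated_failures, hosts)


for n in (2, 4, 8, 16):
    usable = max_usable_fraction(n)
    print(f"{n} hosts: {float(usable):.1%} usable, {float(1 - usable):.1%} held in reserve")
```

The reserve shrinks as the cluster grows: a two-node cluster holds back half its capacity, while a 16-node cluster holds back one sixteenth.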

Small and medium businesses might steer clear of clustering because of these costs, but this isn’t a “big versus small deployment” split. Some of the biggest customers are averse to the costs of clustering too. Hosting companies, for example (public clouds are often the biggest deployments), need to offer cost-competitive services. Infrastructure investments must be recouped through customer payments, and adding HA at the infrastructure layer raises the fees charged to customers, which, at least in my experience, makes the service less attractive to perhaps 80 percent of prospective tenants. So hosting companies might deploy some clustered hosts but many more non-clustered ones.

As for those small businesses, might Windows Server 2012 R2 Hyper-V with Hyper-V Replica configured for 30-second asynchronous replication be a more economical alternative to a cluster?
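The trade-off with asynchronous replication is that changes made since the last completed replication cycle are lost if the primary host fails. Windows Server 2012 R2 lets you choose a 30-second, 5-minute, or 15-minute replication frequency. Here is a simplified sketch of the data at risk; it assumes cycles fire exactly every interval starting at t = 0 and complete instantly (real cycles take time to transfer, so actual loss can be larger), and the function name is hypothetical.

```python
def changes_at_risk_s(failure_time_s, replication_interval_s):
    """Age (in seconds) of the oldest unreplicated change when the primary fails.

    Simplified model: replication cycles start at t=0, repeat every
    replication_interval_s seconds, and finish instantly.
    """
    last_cycle = (failure_time_s // replication_interval_s) * replication_interval_s
    return failure_time_s - last_cycle


# Failure 75 s in with 30 s replication: the last cycle ran at t=60.
print(changes_at_risk_s(75, 30))  # 15
```

Under this model the worst case approaches one full interval, so roughly 30 seconds of changes at the 30-second setting.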

More to Come on Failover Clustering

I will be posting more on clustering in the Hyper-V world in the coming weeks, covering concepts like architecture, design, implementation, some of my personal best practices, and more.