How Do I Customize Microsoft Azure Routing?

In this article, I’ll explain how you can customize network routing for Azure virtual machines on, from, and to a virtual network.

How Routing Works by Default

In a normal deployment of virtual machines, Azure uses a number of system routes to direct network traffic between virtual machines, on-premises networks, and the Internet. The following situations are managed by these system routes:

- Traffic between VMs in the same subnet.

- Traffic between VMs in different subnets of the same virtual network.

- Traffic from VMs to the Internet.

- Traffic between virtual machines in different virtual networks over a VNet-to-VNet VPN.

- Traffic from VMs to your on-premises network via a gateway (site-to-site VPN or ExpressRoute).

Every subnet in a virtual network is associated with a route table that enables the flow of data. This table can contain three system route rules:

- Local VNet Rule: Every subnet has this rule, which tells virtual machines that no hop (gateway) is needed to reach machines in the same virtual network.

- On-Premises Rule: A gateway enables connectivity to networks outside of a virtual network, such as other virtual networks or your on-premises network(s). Those networks are defined in Azure as local networks, so think of a local network as your method for defining this kind of rule.

- Internet Rule: All traffic that is destined for the Internet is managed by this rule by default.
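To make the three rules concrete, here is a minimal Python sketch that models them as a small table and looks up the next hop for a destination address. The address ranges, the `SYSTEM_ROUTES` table, the next hop labels, and the `system_next_hop` helper are all hypothetical and chosen for illustration; this is a model of the idea, not how Azure implements routing internally.

```python
import ipaddress

# Hypothetical address spaces, chosen only for illustration.
SYSTEM_ROUTES = [
    ("10.0.0.0/16", "VNetLocal"),                 # Local VNet rule: no hop inside the virtual network
    ("192.168.0.0/16", "VirtualNetworkGateway"),  # On-premises rule: reached through a gateway
    ("0.0.0.0/0", "Internet"),                    # Internet rule: everything else
]


def system_next_hop(destination: str) -> str:
    """Return the next hop label of the most specific system route that matches."""
    address = ipaddress.ip_address(destination)
    matches = [
        (ipaddress.ip_network(prefix), hop)
        for prefix, hop in SYSTEM_ROUTES
        if address in ipaddress.ip_network(prefix)
    ]
    # The longest prefix (the most specific network) wins.
    _network, hop = max(matches, key=lambda match: match[0].prefixlen)
    return hop


print(system_next_hop("10.0.1.4"))     # VNetLocal
print(system_next_hop("192.168.5.5"))  # VirtualNetworkGateway
print(system_next_hop("8.8.8.8"))      # Internet
```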

The Need to Customize Routing

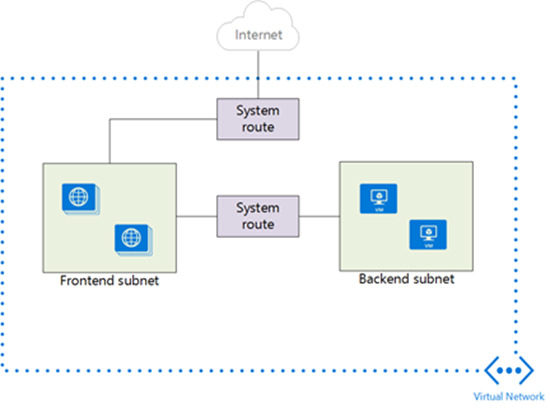

A lot of deployments never require routing customization, but there are scenarios where you might want to adjust the default flow of traffic. The following image depicts a simple design where a virtual network has two subnets. One of these subnets is the frontend, where web services will run in virtual machines. The second subnet is the backend, where more sensitive application and data services will run in virtual machines.

Those who have deployed or secured multi-tier web services will realize that there’s no added security with the following design. By default, all traffic can flow from the web servers in the frontend to the application and data services in the backend via the default local VNet system rule; there is no filtering.

One approach might be to enable filtering using Network Security Groups in the Azure fabric. However, Network Security Groups are just a basic, classic port-filtering firewall; they offer no application-layer inspection, filtering, load balancing, and so on, which we expect in a modern design.

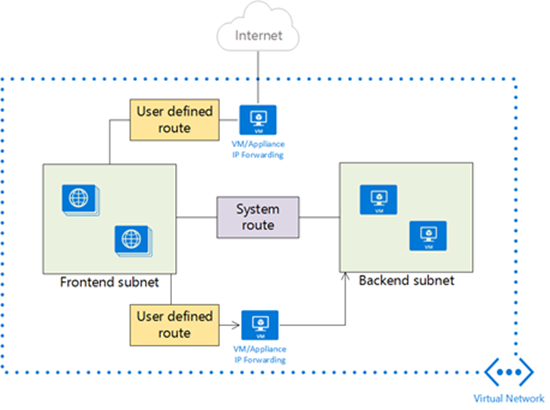

Azure does allow for the use of third-party network appliances, which are available via the Azure Marketplace. Some of these appliances are familiar brands and many are not. You can deploy an appliance on the virtual network, between the frontend and backend subnets using multiple virtual network cards, but this accomplishes nothing without overriding the system route and forcing traffic to route via the appliance.

The following diagram shows how a user defined route can be created in an Azure routing table to redirect traffic between the frontend and backend subnets via the appliance. Now the appliance can see all traffic and control it as required.

Note that you’d probably still use Network Security Groups in the Azure fabric to enforce this routing.

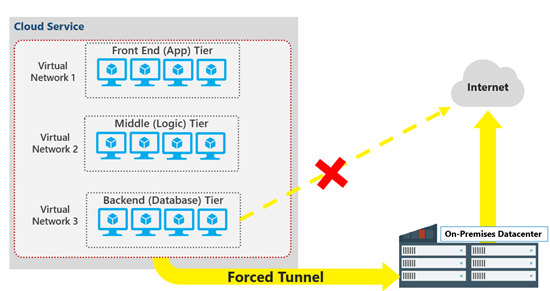

Another scenario is forced tunnelling, where you might allow the frontend subnet to route to the Internet as normal, but you require the backend subnet(s) to route via an on-premises network.

User Defined Routes

You can create a route table and associate it with a subnet in a virtual network. You can then create user defined routes based on three criteria:

- The destination CIDR: The address prefix, such as 10.10.1.0/24, that represents the destination network that you want to manage routing to.

- Nexthop type: This tells Azure what kind of device will be the first router encountered for this rule.

- Nexthop value: This is the IP address of the device specified in the Nexthop type.
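The three criteria can be sketched as a small Python record with some basic validation. This is an illustrative model only: the `UserDefinedRoute` class, its field names, and the set of next hop labels are assumptions made for this example, not an Azure SDK type or an exhaustive list of what Azure supports.

```python
import ipaddress
from dataclasses import dataclass
from typing import Optional

# Illustrative next hop labels; not an exhaustive or authoritative Azure list.
NEXT_HOP_TYPES = {"VirtualAppliance", "VirtualNetworkGateway", "Internet", "None"}


@dataclass(frozen=True)
class UserDefinedRoute:
    destination_cidr: str                 # the destination network, in CIDR notation
    next_hop_type: str                    # what kind of device is the first router
    next_hop_value: Optional[str] = None  # IP address of that device, when one is needed

    def __post_init__(self) -> None:
        # strict=True rejects prefixes with host bits set (for example 10.10.1.0/16).
        ipaddress.ip_network(self.destination_cidr, strict=True)
        if self.next_hop_type not in NEXT_HOP_TYPES:
            raise ValueError(f"unknown next hop type: {self.next_hop_type}")
        if self.next_hop_type == "VirtualAppliance":
            if self.next_hop_value is None:
                raise ValueError("a virtual appliance route needs a next hop IP address")
            # Raises ValueError if the value is not a valid IP address.
            ipaddress.ip_address(self.next_hop_value)


# Send traffic for a hypothetical backend subnet through a hypothetical appliance.
route = UserDefinedRoute("10.10.2.0/24", "VirtualAppliance", "10.10.0.4")
```

Validating the prefix up front catches a common slip: writing an address with host bits set where a network prefix is expected.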

Note that a route table can be associated with multiple subnets, but a subnet can be associated with only one route table.

Once you add a route table to a subnet, routing is based on a combination of system routes and user-defined routes. If you add ExpressRoute to the mix, then BGP routes will also be propagated to Azure. The following order is used to prioritise routes if more than one route is found for the same destination prefix:

- User-defined route

- BGP route (if ExpressRoute is used)

- System route
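The priority order above can be sketched in a few lines of Python. This sketch assumes that the most specific (longest) matching prefix is chosen first and that the source of the route only breaks ties between routes with the same prefix. The `Route` tuple, the `select_route` helper, and all addresses are hypothetical names and values used for illustration.

```python
import ipaddress
from typing import List, NamedTuple

# Lower number = higher priority, following the order listed above.
SOURCE_PRIORITY = {"User": 0, "BGP": 1, "System": 2}


class Route(NamedTuple):
    prefix: str    # destination CIDR
    source: str    # "User", "BGP", or "System"
    next_hop: str  # next hop label or IP address


def select_route(routes: List[Route], destination: str) -> Route:
    """Pick the route for a destination: longest prefix first, then source priority."""
    address = ipaddress.ip_address(destination)
    candidates = [r for r in routes if address in ipaddress.ip_network(r.prefix)]
    if not candidates:
        raise LookupError(f"no route matches {destination}")
    return min(
        candidates,
        key=lambda r: (-ipaddress.ip_network(r.prefix).prefixlen, SOURCE_PRIORITY[r.source]),
    )


routes = [
    Route("10.0.2.0/24", "System", "VNetLocal"),
    Route("10.0.2.0/24", "User", "10.0.1.4"),  # user-defined route via an appliance
    Route("0.0.0.0/0", "System", "Internet"),
    Route("0.0.0.0/0", "BGP", "VirtualNetworkGateway"),
]

print(select_route(routes, "10.0.2.5").next_hop)  # 10.0.1.4
print(select_route(routes, "8.8.8.8").source)     # BGP
```

In this example, the user-defined route beats the system route for the backend subnet, and the BGP default route beats the system Internet route, which is the forced tunnelling pattern described earlier.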

Azure makes routing pretty simple. Now if only Azure could end the decades-old transatlantic debate on the correct pronunciations of route and routing (rowt and rowting in the USA, and root and rooting in Europe).