In part 1 of our article series on how to deploy a non-clustered Hyper-V host, I discussed how to install the management operating system (OS), update drivers and firmware, and create a NIC team. In this article, I’ll show you how to enable the Hyper-V role, create a virtual switch, and configure management OS networking as we continue building a production-ready standalone Windows Server 2012 R2 Hyper-V host.

Enabling the Hyper-V Role

You’re probably thinking “Finally! He’s going to do the bit I want to see!” This step is quite unexciting and, to be honest, I’ll configure very little in the wizard that Server Manager offers, even though most guides configure their settings there.

The wizard gives you a very basic configuration. Because I prefer explicit control over the little details that make a big difference, I usually skip most of the screens in the wizard.

The first way to enable Hyper-V is to run the following PowerShell cmdlet, which reboots the server automatically:

Install-WindowsFeature Hyper-V -Restart
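If you go the PowerShell route, you may also want the management tools that the wizard offers to add, and a way to confirm the result after the reboot. The following is a minimal sketch, assuming it is run in an elevated session on the host itself:

```powershell
# Check whether the Hyper-V role is already installed
Get-WindowsFeature -Name Hyper-V

# Install the role together with its management tools, then reboot if required
Install-WindowsFeature -Name Hyper-V -IncludeManagementTools -Restart

# After the reboot, list the Hyper-V role and the related tools with their install state
Get-WindowsFeature -Name Hyper-V, RSAT-Hyper-V-Tools |
    Format-Table Name, InstallState -AutoSize
```

The -IncludeManagementTools switch plays the same part as clicking Add Features in the wizard.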

Alternatively, you can use Server Manager to enable Hyper-V using the wizard with the following steps:

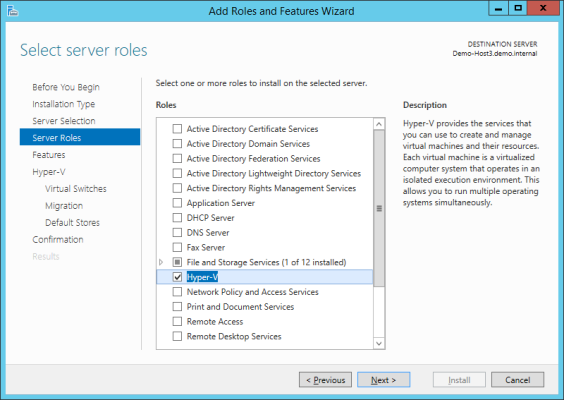

- Click Manage > Add Roles And Features to open the Add Roles And Features Wizard.

- Skip through the wizard screens, ensuring that your server is selected.

- Check the box to select Hyper-V in the Select server roles screen.

- A popup will appear asking you to confirm that it is OK to install the Hyper-V Remote Server Administration Tools.

- Click Add Features to confirm that this is OK. You will need these tools to manage Hyper-V locally.

Using Server Manager to Enable Windows Server 2012 R2 Hyper-V (Image: Aidan Finn)

Skip the following dialogs without selecting any options:

- Select Features: We do not need any further features.

- Create Virtual Switches: I will be using Quality of Service (QoS) to guarantee bandwidth to the management OS and the virtual machines, so I want total control over creating the virtual switch.

- Virtual Machine Migration: More options are available in Hyper-V Manager once the host is prepared.

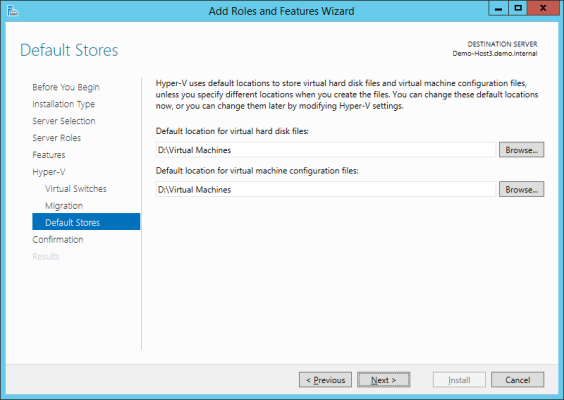

We will configure the default storage paths in the Default Stores screen. Change the paths to use the folder you created in part 1, that is, D:\Virtual Machines. This means that any New Virtual Hard Disk or New Virtual Machine wizards will select this folder as the default location for creating new virtual machines or files. You don’t want to use the default location on the C drive!

Overriding the default Hyper-V storage paths while enabling Hyper-V (Image: Aidan Finn)

The final thing I will do in the wizard is check the box to Restart The Destination Server Automatically If Required. The computer will reboot twice, to enable Hyper-V and to boot up the original OS as a management OS. After this, you will have an active Hyper-V host.
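If you enabled the role with PowerShell and never saw the Default Stores screen, the same paths can be set once the host is back up. This is a sketch, assuming the folder from part 1 is D:\Virtual Machines; adjust the path if yours differs:

```powershell
# Folder created in part 1 (assumed path; change it to match your host)
$vmRoot = "D:\Virtual Machines"

# Use the same folder for virtual machine files and virtual hard disks
Set-VMHost -VirtualMachinePath $vmRoot -VirtualHardDiskPath $vmRoot

# Confirm the defaults that the New Virtual Machine and New Virtual Hard Disk wizards will offer
Get-VMHost | Format-List VirtualMachinePath, VirtualHardDiskPath
```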

Creating a Virtual Switch

In this example, we have a host with just 2 x 1 GbE NICs. Ideally, I would like to have four NICs, where two are used to create a team for the management OS, and the other two are used for a team to connect the virtual switch. If I can’t get more NICs or switch ports, then I will use just the two NICs to create a simple converged network design.

In part 1, I created a NIC team and noted the device name of the team interface. Now I’m going to create a virtual switch and connect it to that team. Instead of using the GUI, I’ll use PowerShell. The following cmdlet creates the new virtual switch and connects it to the NIC team’s interface:

New-VMSwitch "DemoSwitch" -NetAdapterInterfaceDescription "Microsoft Network Adapter Multiplexor Driver" -AllowManagementOS $false -MinimumBandwidthMode Weight

Note that:

- I have not allowed the management OS to share the virtual switch … yet.

- I have enabled weight-based QoS, which works much like a percentage system.
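If you did not note the team interface's device name in part 1, or you want to check the switch afterwards, something like the following should help. It is only a sketch; the switch name DemoSwitch comes from the example above, and the adapter list on your host will differ:

```powershell
# List adapters to find the team interface and its description
# (the value passed to -NetAdapterInterfaceDescription)
Get-NetAdapter | Sort-Object Name |
    Format-Table Name, InterfaceDescription, Status, LinkSpeed -AutoSize

# After New-VMSwitch has run, review how the switch was created
Get-VMSwitch -Name "DemoSwitch" |
    Format-List Name, SwitchType, NetAdapterInterfaceDescription, AllowManagementOS, BandwidthReservationMode
```

The bandwidth mode is worth checking at this point because it is chosen when the switch is created.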

The next step is to enable QoS for virtual machines that will run on this host. I will guarantee them, as a whole, at least 50 percent of the team's bandwidth (1 Gbps of the 2 Gbps total) for outbound traffic. This is done using a default bucket that is configured on the virtual switch.

Set-VMSwitch "DemoSwitch" -DefaultFlowMinimumBandwidthWeight 50

I could configure QoS per virtual machine (strictly speaking, per virtual NIC), but that would require more effort to manage. And odds are, I really don’t need that level of control.
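For completeness, a per-virtual NIC guarantee would look roughly like this. The virtual machine name VM01 and the weight of 10 are hypothetical values used only for illustration:

```powershell
# "VM01" is a hypothetical virtual machine name; the weight of 10 is an arbitrary example
Set-VMNetworkAdapter -VMName "VM01" -MinimumBandwidthWeight 10

# Show the weight now assigned to each virtual NIC of that virtual machine
Get-VMNetworkAdapter -VMName "VM01" |
    Select-Object VMName, Name, @{ Name = 'MinWeight'; Expression = { $_.BandwidthSetting.MinimumBandwidthWeight } }
```

Virtual NICs without their own weight continue to share the default bucket configured on the switch.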

Management OS Networking

Now, I have a means to connect the host to the physical network through the virtual switch. I’m going to share the virtual switch’s network connection with the management OS, but not by the means presented in the GUI.

Instead, I’ll manually create a management OS virtual NIC. And yes, you can create virtual NICs in the host. This virtual NIC will be connected to a port in the virtual switch and this will allow me to make better use of my limited number of physical NICs while retaining NIC teaming, along with the ability to apply QoS rules.

The first step is to create a virtual NIC. Here I create one called vEthernet (ManagementOS). You’ll see that I specify the ManagementOS bit of the name, and Windows creates the rest of the name to make it clear that this is a management OS virtual NIC.

Add-VMNetworkAdapter -ManagementOS -Name "ManagementOS" -SwitchName "DemoSwitch"

The second step is to apply QoS. I previously guaranteed at least 50 percent of bandwidth to virtual machines. I will do the same for the management OS. This means that if there is contention for bandwidth between the management OS and virtual machines, then there will be a 50/50 divide of the bandwidth. If there’s no contention, then either the management OS or the virtual machines can burst beyond the guarantee without impacting the other.

Set-VMNetworkAdapter -ManagementOS -Name "ManagementOS" -MinimumBandwidthWeight 50
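To see where the 50/50 divide comes from, it helps to work through the arithmetic: under contention, each weight is honored as its share of the sum of all weights. The sketch below assumes a nominal 2 Gbps for the 2 x 1 GbE team and only the two weights set in this article; real throughput will vary.

```powershell
# Nominal team capacity in Gbps (assumption: 2 x 1 GbE, as in the example host)
$linkGbps = 2.0

# The two weights configured in this article
$weights = [ordered]@{
    'Virtual machines (default bucket)' = 50
    'ManagementOS virtual NIC'          = 50
}

# A weight is a relative share: weight divided by the sum of all weights
$total = ($weights.Values | Measure-Object -Sum).Sum

foreach ($name in $weights.Keys) {
    $share = $weights[$name] / $total
    '{0}: {1:P0} of the link, roughly {2:N1} Gbps when the link is contended' -f $name, $share, ($share * $linkGbps)
}
```

Keeping the weights at a total of 100 makes each weight read directly as a percentage.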

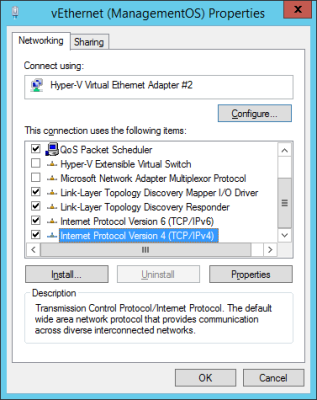

Now the new virtual NIC is ready for its IP configuration, which you can set in the management OS using either PowerShell or the GUI. All management of this host will be via this virtual NIC.

Editing the properties of the management OS virtual NIC (Image: Aidan Finn)
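If you prefer PowerShell to the GUI for this step, the following sketch shows one way to do it. The IP address, prefix length, gateway, and DNS server are placeholder values, so substitute the ones for your management network:

```powershell
# Windows names the virtual NIC "vEthernet (<name>)"
$alias = "vEthernet (ManagementOS)"

# Placeholder addressing; replace with values from your own network
New-NetIPAddress -InterfaceAlias $alias -IPAddress 192.168.1.10 -PrefixLength 24 -DefaultGateway 192.168.1.1
Set-DnsClientServerAddress -InterfaceAlias $alias -ServerAddresses 192.168.1.2

# Review the resulting configuration
Get-NetIPConfiguration -InterfaceAlias $alias
```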

Up Next: Finalizing the Host and Deploying to Production

We don’t have a finished host yet! In the final part of this series, I’ll provide you with steps to finalize your host and get it ready for production usage.