In this post, we will discuss converged networks in Windows Server 2012 (WS2012) and Windows Server 2012 R2 (WS2012 R2), which can be particularly useful for Hyper-V deployments. We’ll also look at how converged networks make network deployments simpler, more economical, and more flexible.

What Are Converged Networks?

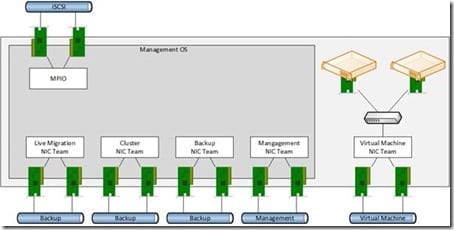

In a previous article, I discussed Hyper-V host networking requirements. Typically in Windows Server 2008 (W2008) or Windows Server 2008 R2 (W2008 R2), each network required its own NIC or NIC team. That increased cost and complexity, and it was a cabling nightmare.

The networks of a W2008 R2 clustered Hyper-V host with iSCSI storage.

Customers may have come up with alternative ways to deploy these networks using hardware solutions. With these solutions, you could take 2 * 10 GbE connections to the server and divide them up into 1 GbE connections. While this reduces the amount of cabling and complexity, it still presents two problems:

- Expensive: Some blade solutions require special switches that are incredibly expensive.

- Inflexible: You have little choice in how the bandwidth is divided up, and it cannot be reallocated based on demand.

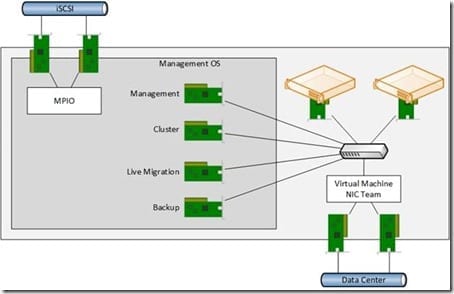

Windows Server 2012 introduced the ability to converge the many networks, or fabrics (a cloud term), that a server such as a Hyper-V host requires into fewer, higher-capacity networks, and to divide up that bandwidth to meet the server’s networking needs. For example, the host illustrated earlier could be deployed as follows:

A WS2012 Hyper-V host with converged networks and iSCSI storage.

Virtual NICs provide the connections required by the host’s Management OS for management, cluster communications, Live Migration, and backup. These are connected to the physical network via the virtual switch. The Management OS connections share the bandwidth of a single high-bandwidth NIC team (2 * 10 GbE NICs or several 1 GbE NICs) with the virtual machines.
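To put a rough number on the cabling difference, here is a minimal sketch that counts physical NICs in each design. The network names come from the paragraph above; the team size of two and the two dedicated, non-teamed iSCSI NICs are assumptions made for illustration, not figures taken from the diagrams.

```python
# Networks named above that can ride on the converged NIC team.
CONVERGEABLE_NETWORKS = [
    "Management",
    "Cluster",
    "Live Migration",
    "Backup",
    "Virtual Machines",
]

NICS_PER_TEAM = 2  # assumption: every NIC team has two members
ISCSI_NICS = 2     # assumption: two dedicated, non-teamed iSCSI NICs


def nics_one_team_per_network():
    """Older approach: each network gets its own NIC team."""
    return len(CONVERGEABLE_NETWORKS) * NICS_PER_TEAM + ISCSI_NICS


def nics_converged():
    """Converged approach: one shared team plus the iSCSI NICs."""
    return NICS_PER_TEAM + ISCSI_NICS


print("One team per network:", nics_one_team_per_network(), "physical NICs")
print("Converged:", nics_converged(), "physical NICs")
```

Under these assumptions the host drops from 12 physical NICs (and switch ports) to 4. Your own numbers will differ depending on how many networks you run and whether storage is also converged.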

This software solution is:

- Hardware agnostic: You can deploy Dell, HP, IBM, or any other servers and use the same network configuration.

- Easy to deploy: Converged networks can be deployed using PowerShell or by using Logical Switches in System Center Virtual Machine Manager (VMM).

- Economic: You do not need any expensive networking appliances or server add-ons. Converged networks simply use the features that are built into WS2012 and later.

- Flexible: The old approach to W2008/R2 networking was restrictive. WS2012/R2 networking is very flexible and has many possibilities in terms of designs. Rather than being restricted to multiple 1 GbE networks, you can use QoS to guarantee minimum levels of bandwidth to either protocols or virtual network cards.

- Affordable 10 GbE or faster: Adding 10 GbE just for Live Migration, or iWARP (RDMA-capable, 10 GbE or faster) NICs just for SMB 3.0 storage, is often not financially viable. But introducing 2 * iWARP interfaces and the associated switch ports per host might be more feasible if you are going to use fewer top-of-rack (TOR) switch ports in the data center for many of the Hyper-V networking requirements. This extra bandwidth and functionality (RDMA) can be used for many purposes, such as SMB 3.0 storage, Live Migration, backup, and so on.

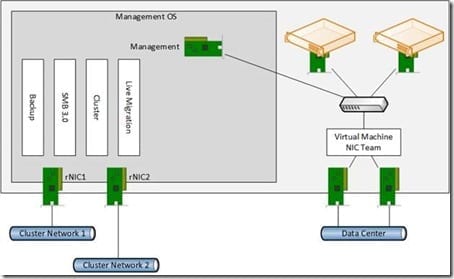

The following design could be deployed on a server to make a WS2012 R2 Hyper-V clustered host with just four NICs. Two NICs are RDMA enabled (rNICs) and are used for the two private cluster networks. The rNICs are not teamed and operate on two different subnets or VLANs. SMB 3.0 Multichannel aggregates their bandwidth and powers features like storage and Live Migration. The other two NICs are teamed and are used to connect virtual NICs to the physical network via the virtual switch.

WS2012 R2 host with converged networks for maximizing SMB 3.0.
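Because this design depends on the two rNICs sitting on different subnets, it can be worth checking the planned addressing before you deploy. The following is a small sketch using Python's standard `ipaddress` module; the addresses and the helper name `on_different_subnets` are hypothetical and only illustrate the check.

```python
import ipaddress


def on_different_subnets(*interfaces):
    """Return True if every interface (in CIDR form) is in a distinct subnet."""
    networks = [ipaddress.ip_interface(i).network for i in interfaces]
    return len(set(networks)) == len(networks)


# Hypothetical addresses planned for the two rNICs.
rnic1 = "10.0.1.11/24"
rnic2 = "10.0.2.11/24"

print(on_different_subnets(rnic1, rnic2))
```

If both rNICs were accidentally placed in the same subnet (for example `10.0.1.11/24` and `10.0.1.12/24`), the function would return `False`.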

Features that Enable Converged Networks

There are a number of features that make converged networks possible. Not all are required in a design, but they can open up possibilities:

- NIC Teaming: The ability to create Microsoft-supported NIC teams was added in WS2012. This will provide load balancing and failover (LBFO) across NICs and TOR switches (network paths). NIC teaming can also offer bandwidth aggregation, especially with the new Dynamic load balancing option in WS2012 R2.

- Quality of Service (QoS): QoS was enhanced in WS2012 to be able to provide minimum levels of service to virtual NICs or protocols. QoS rules can use inflexible absolute bandwidth rules or flexible weight-based (think share or percentage) rules to reserve a certain amount of bandwidth for a virtual NIC or a protocol. Rather than being restricted to 1 GbE for example, the virtual NIC or protocol is guaranteed a minimum amount, and can burst beyond that if demand is high enough and there is sufficient uncontended bandwidth on the QoS managed connection.

- Virtual Switch and Virtual NICs: A virtual switch connects virtual NICs to the physical network via a physical NIC or the team interface of a NIC team. Virtual Machines connect to ports on the virtual switch using virtual NICs. The Management OS of a host can have one or more virtual NICs that also connect to the ports of the virtual switch.
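To make the weight-based QoS idea concrete, here is a minimal sketch of the arithmetic: each weight is a share of the total, and that share of the link is the minimum the virtual NIC can count on under contention. The weights, the vNIC names, and the 20 Gbps figure (treating a 2 * 10 GbE team as one aggregate link) are assumptions for illustration. This models the arithmetic only, not how Hyper-V schedules traffic.

```python
def minimum_bandwidth_gbps(weights, link_capacity_gbps):
    """Convert relative QoS weights into a guaranteed minimum per vNIC."""
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("weights must sum to a positive number")
    return {
        name: link_capacity_gbps * weight / total
        for name, weight in weights.items()
    }


# Hypothetical weights for the vNICs sharing the team.
weights = {
    "Management": 5,
    "Cluster": 5,
    "LiveMigration": 30,
    "Backup": 10,
    "VirtualMachines": 50,
}

# Assumption: a 2 * 10 GbE team treated as a single 20 Gbps link.
minimums = minimum_bandwidth_gbps(weights, link_capacity_gbps=20)

for name, gbps in minimums.items():
    print(f"{name}: at least {gbps:.1f} Gbps under contention")
```

With these example weights, Live Migration is guaranteed 6 Gbps when the link is busy, yet it can use more whenever the other vNICs are idle. That is the key difference from carving the link into fixed 1 GbE slices.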

There is no single right way to build a converged network design for a server or a Hyper-V host. There are wrong ways, however, depending on the features that are required, such as SR-IOV, RDMA, RSS, or dVMQ. Converged networks provide more flexible, easy-to-deploy, software-based networking and a more economical way to deploy higher-capacity, feature-rich networking.