What You Need to Know About Windows Server 2016 Containers

Microsoft recently announced its intention to support the deployment of server applications via containers in Windows Server 2016. In my previous post, I discussed what a container is at a very conceptual level, but if you’re like me, you were left scratching your head about this technology. At Microsoft Ignite, Taylor Brown (Hyper-V team) and Arno Mihm (Operating Systems group) took the stage to explain what we will get in Windows Server 2016.

The Reason for Containers

Let’s talk about the past so we can understand the future.

- Physical servers: We typically install one application on a single operating system that runs on one server. This is the slowest way to deploy applications.

- Virtualization: We deploy many virtual machines on one physical host. There is one guest OS in each virtual machine, and we normally install one service in each virtual machine. These virtual machines are pretty quick to deploy, but they still require OS deployment steps that take some time.

- Server App-V: Odds are that if you have heard of this Microsoft technology at all, it’s because you deployed Service Templates using System Center Virtual Machine Manager (SCVMM). Applications are packaged and deployed in a virtualized “bubble.” This simplifies deployment a little more, but there’s still one application per guest OS in a virtual machine, and Server App-V does not provide any additional application isolation.

If you work in a DevOps environment, or if you have colleagues who do intensive test and development work, then all of these scenarios are frustrating for them. Physical servers are a complete no-no for these folks because of the glacier-like speed of deployment. Virtualization improved things quite a bit, but how many of you have deployed true private clouds that offer the speed of deployment that developers want? Containers recently started making headlines in the open source world, and Microsoft, through a tight partnership with Docker, has jumped on board early to add native container support to Windows Server 2016.

What are Containers?

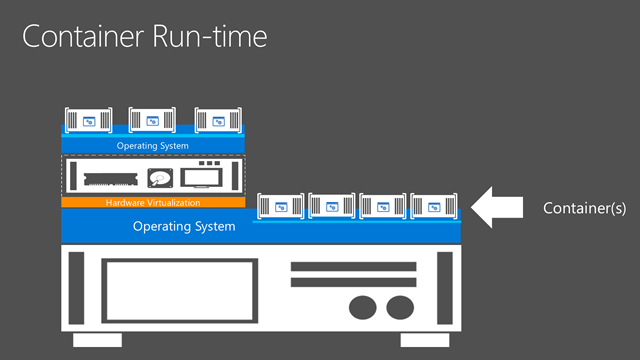

The primary concept behind containers is that you can run many isolated applications on a single operating system, whether it is a physical or a virtual installation. Each container is an application that is deployed from an image in an image repository. This makes containers reusable and very easy to deploy.

When they run, containers are isolated from each other, even on the same operating system; there is no inter-process communication between them. The containers use the virtual switch on the machine to communicate, either via a per-container IP/MAC address combination or via a NATed IP address. This provides the isolation that you do not get from Server App-V.

Building a Container Ecosystem

The real benefit is the granular reusability of containers. Let’s look at how you would build a container ecosystem.

You start off, possibly using the Docker client for Windows, by deploying a new container from an image in an image repository. This repository could be local to your server or a centralized network library. This first image would be an operating system image, such as the windowsservercore image that was shown at Ignite. This is not a deployment in the sense you might know from virtual machines: the container refers to a read-only copy of the original image in the repository, and there’s no local copy of the container’s image.
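To make the “reference, don’t copy” idea concrete, here is a minimal Python sketch under stated assumptions. The `Repository` and `Container` classes, the file path, and the use of a dictionary to stand in for an image are all hypothetical illustrations, not a real Docker or Windows API. Two containers started from the same image hold a reference to one shared, read-only object, and any attempt to write to that image fails.

```python
from types import MappingProxyType


class Repository:
    """Hypothetical image repository; not a real Docker or Windows API."""

    def __init__(self):
        self._images = {}

    def add(self, name, files):
        # Freeze the image: callers only ever get a read-only view of it.
        self._images[name] = MappingProxyType(dict(files))

    def get(self, name):
        return self._images[name]


class Container:
    def __init__(self, repo, image_name):
        # Hold a reference to the shared image instead of copying its files.
        self.image = repo.get(image_name)


repo = Repository()
repo.add("windowsservercore", {"Windows/System32/cmd.exe": "os-binary"})

first = Container(repo, "windowsservercore")
second = Container(repo, "windowsservercore")
print(first.image is second.image)  # True: both containers reference one image

try:
    first.image["Windows/extra.dll"] = "x"
except TypeError:
    print("the image is read-only")
```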

The container is stateless. In other words, if you power off the host, you lose what’s in the container. This is why you might have to attach external storage for systems such as a web farm or SQL Server. Each container has a sandbox where all writes are saved. When you create the container, you specify whether the sandbox will be saved or discarded when the container is closed; saving the sandbox is typically how you create new images.
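The sandbox behaves like a writable layer sitting on top of a read-only image. The sketch below models that with Python’s `collections.ChainMap`, which reads through all of its mappings but writes only to the first one. The `Container` class, the `save_sandbox` flag, and the file names are hypothetical; this is an illustration of the copy-on-write idea, not how Windows implements it.

```python
from collections import ChainMap
from types import MappingProxyType


class Container:
    """Hypothetical container model; not a real Windows or Docker API."""

    def __init__(self, image, save_sandbox):
        self.image = image                # shared, read-only image layer
        self.sandbox = {}                 # per-container writable layer
        self.save_sandbox = save_sandbox  # decided when the container is created
        # ChainMap reads through every mapping but writes only to the first,
        # so all writes land in the sandbox and the image is never touched.
        self.view = ChainMap(self.sandbox, image)

    def close(self):
        """Return the sandbox as a new read-only layer, or None if discarded."""
        if self.save_sandbox:
            return MappingProxyType(dict(self.sandbox))
        return None


base = MappingProxyType({"app.config": "default"})

c = Container(base, save_sandbox=True)
c.view["app.config"] = "tuned"
print(c.view["app.config"])  # tuned (served from the sandbox)
print(base["app.config"])    # default (the image is unchanged)
new_layer = c.close()
print(dict(new_layer))       # {'app.config': 'tuned'}

d = Container(base, save_sandbox=False)
d.view["scratch.tmp"] = "x"
print(d.close())             # None: the writes are lost
```

Note that the saved layer holds only what was written in the sandbox, not a full copy of the image underneath it.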

You might decide to install a runtime, such as .NET, into your OS container. The writes are saved into the sandbox, leaving the original OS image in the repository unmodified. If you save the sandbox, a new image containing only the runtime is created in the repository. Now you have a runtime that you can easily deploy: when you create a new container from the runtime image, the required lower-layer images, i.e., the OS image, are deployed automatically.
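Automatically deploying the lower layers amounts to walking from an image down to its base. Here is a minimal sketch, assuming each image in a hypothetical repository records the name of its parent layer (with `None` for an OS image). The repository layout and the `resolve_layers` helper are illustrative only, not a real API.

```python
# Hypothetical repository: each image records its parent layer (None for an OS image).
REPOSITORY = {
    "windowsservercore": {"parent": None, "files": {"Windows/System32/cmd.exe": "os"}},
    "dotnet": {"parent": "windowsservercore", "files": {"dotnet/runtime.dll": "rt"}},
}


def resolve_layers(repo, name):
    """Return the image chain from the bottom (OS) layer up to `name`."""
    chain = []
    while name is not None:
        if name in chain:
            raise ValueError(f"cycle detected at image {name!r}")
        chain.append(name)
        name = repo[name]["parent"]
    chain.reverse()  # deploy the OS first, then each layer above it
    return chain


print(resolve_layers(REPOSITORY, "dotnet"))  # ['windowsservercore', 'dotnet']
```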

This process is how we get to reuse our containers. You might deploy the same OS image into several containers and install a different runtime into each one. Whenever you need a runtime, you deploy it into a new container, and the required OS image is deployed beneath it.

Eventually you will install or create a new application that requires a runtime. You deploy the runtime image into a new container and then, by using a Dockerfile, you install the application into the container. As before, the installation goes into a sandbox and does not change the lower runtime or OS images in the repository. If you save the sandbox, you create a new image for the application. Now developers, testers, operators, and administrators can quickly deploy the application by selecting its image from the container repository. The required runtime and OS images are deployed beneath the application image in the container, and a sandbox redirects the writes.
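The finished stack can be pictured as a layered view: a fresh sandbox on top, then the application, runtime, and OS images, where a file in a higher layer hides the same file in a lower one. This Python sketch uses `collections.ChainMap` to model that lookup order; the layer contents, paths, and the `start_container` helper are made up for illustration and are not a real Windows or Docker API.

```python
from collections import ChainMap

# Hypothetical layers, each a mapping of path -> contents.
os_layer = {"Windows/System32/config.ini": "os-default"}
runtime_layer = {"dotnet/runtime.dll": "runtime"}
app_layer = {
    "inetpub/site/index.html": "hello",
    "Windows/System32/config.ini": "app-tuned",  # the app install changed this file
}


def start_container(*layers_bottom_up):
    """Stack layers so higher ones shadow lower ones, with a fresh sandbox on top."""
    sandbox = {}
    view = ChainMap(sandbox, *reversed(layers_bottom_up))
    return sandbox, view


sandbox, view = start_container(os_layer, runtime_layer, app_layer)
print(view["Windows/System32/config.ini"])  # app-tuned: the app layer shadows the OS layer
print(view["dotnet/runtime.dll"])           # runtime: read through from the runtime layer
view["inetpub/site/log.txt"] = "request 1"
print(sandbox)                              # {'inetpub/site/log.txt': 'request 1'}
print("inetpub/site/log.txt" in app_layer)  # False: the app image is unchanged
```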

Containers are Stateless

Because containers are stateless, there’s no live migration and no failover. They are intended for true born-in-the-cloud or stateless applications that provide high availability (HA) at the application layer. You can connect external storage, such as the server’s disk or an SMB 3.0 share, which should enable Microsoft to support running the engines of services such as IIS and SQL Server in containers while keeping their data externally.
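The split between throwaway container state and external data can be sketched in a few lines. In this hypothetical model, a path prefix stands in for a mount point and a plain dictionary stands in for the server’s disk or an SMB 3.0 share. The `StatelessContainer` class, the `mounts` parameter, and the paths are assumptions for illustration, not a real API.

```python
from collections import ChainMap


class StatelessContainer:
    """Hypothetical model: sandbox state is lost on stop, mounted data is not."""

    def __init__(self, image, mounts):
        self.sandbox = {}
        self.view = ChainMap(self.sandbox, image)
        self.mounts = mounts  # mount prefix -> dict standing in for external storage

    def write(self, path, data):
        for prefix, store in self.mounts.items():
            if path.startswith(prefix):
                store[path] = data  # persisted outside the container
                return
        self.view[path] = data      # lands in the sandbox

    def stop(self):
        self.sandbox.clear()        # no saved state: sandbox contents are lost


external_share = {}
c = StatelessContainer({"sqlservr.exe": "engine"}, {"D:/data/": external_share})
c.write("D:/data/orders.mdf", "rows")
c.write("C:/temp/cache.tmp", "scratch")
c.stop()
print(external_share)  # {'D:/data/orders.mdf': 'rows'}
print(c.sandbox)       # {}
```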

Not all applications will be suitable for containers, and this is why we still have the option to deploy Hyper-V virtual machines.

Hyper-V Containers: Splendid Isolation

The technology that I’ve talked about so far is called Windows Server Containers. Containers running in the same OS are isolated from each other, but they have something in common: they all share a view of the underlying operating system. If the application in a container is compromised, there’s a chance of a break-out attack against the host.

This is why Microsoft will be offering another alternative to Windows Server Containers called Hyper-V Containers. Microsoft isn’t talking too much about this because it’s very early days, but this is what we know:

- You will need a hypervisor on your physical host that supports the virtualization of the VT instruction set. You might read this as, “it supports nested virtualization.”

- You will then deploy a Windows Server 2016 virtual machine and enable Hyper-V in that virtual machine. In there, you will run one or more containers that will leverage hardware-supported isolation to prevent breakout attacks.

- Images will be compatible between both kinds of containers; choosing which kind to run is a deployment-time decision.
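The point about compatible images is that the image itself carries no isolation setting; you pick the isolation level only when you deploy. Here is a minimal Python sketch of that separation. The `Isolation` enum, `Image`, `Deployment`, and `deploy` names are hypothetical and do not correspond to any real Docker or Windows API.

```python
from dataclasses import dataclass
from enum import Enum


class Isolation(Enum):
    WINDOWS_SERVER = "windows-server-container"  # shares the host operating system
    HYPER_V = "hyper-v-container"                # hardware-supported isolation


@dataclass(frozen=True)
class Image:
    name: str  # note: no isolation field, so one image fits both kinds


@dataclass
class Deployment:
    image: Image
    isolation: Isolation

    @property
    def shares_host_os(self) -> bool:
        return self.isolation is Isolation.WINDOWS_SERVER


def deploy(image: Image, isolation: Isolation) -> Deployment:
    # The image is untouched; only the deployment-time setting differs.
    return Deployment(image=image, isolation=isolation)


app = Image("webapp")
trusted = deploy(app, Isolation.WINDOWS_SERVER)
untrusted = deploy(app, Isolation.HYPER_V)
print(trusted.image is untrusted.image)  # True: one image, two isolation levels
print(untrusted.shares_host_os)          # False
```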

This sounds very interesting and might lead to some very nice tangential consequences that Hyper-V followers could end up being very happy about. No promises, though!

When are Containers Coming to Windows Server 2016?

The current build, Windows Server 2016 Technical Preview 2, does not have container support. However, you will see support for Windows Server Containers in the summer, and Hyper-V Containers are planned to debut before the end of the calendar year.