For the past couple of years, VMware has been yelling from the rooftops about how great vCenter Operations Manager (vCOPs) is. I do have to admit that I’ve seen many happy customers, and vCOPs has to be one of the better-selling VMware products behind vSphere. But as vCOPs grows into a more robust product, there are some parts that are a bit of a letdown. I’ll cover the main features in vCOPs and break down where I think the product excels and where it disappoints.

vCenter Operations has grown from the original management product into a suite of products that also deals with reporting and compliance. I will be focusing on the Manager product, which is the most popular and most widely implemented product in the suite.

What Is vCOPs?

The vCenter Operations Manager product is a tool that offers detailed insight into the performance, health, and capacity of your infrastructure.

What it does well: Performance reporting and visuals are the bread and butter of the vCOPs Manager product. It does performance really well and is very visually appealing. It’s also pretty simple to work with, and the learning curve for getting started is pretty low.

What it could do better: The capacity planning and waste-finding functions within vCOPs are important features. While I think most customers purchase for the performance and health features, they do look forward to what the product might offer them in the way of capacity planning and management. The bad news is that pretty much every customer is disappointed when they dig in and try to use these features.

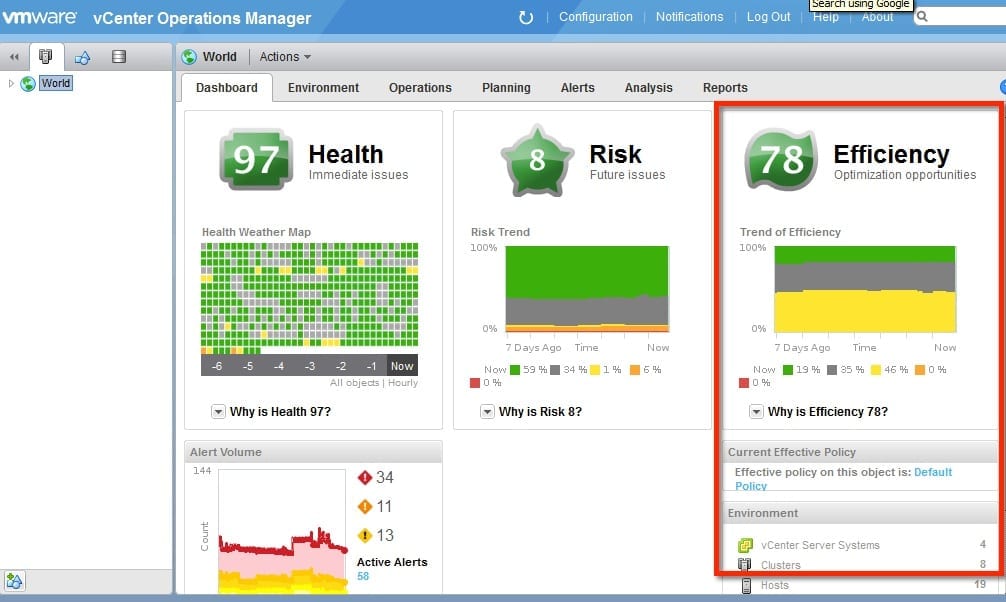

The image above highlights the Efficiency section. I grabbed this image from a lab environment where the VMs are sized properly and running sample workloads. The measurements work fine in this scenario, but in most customer installs the tool usually comes back reporting that they are 90+ percent overprovisioned.

Don’t get me wrong: the capacity features are not a complete loss. It’s just that they could be so much better. To me, this stems from the capacity functions using a trigger- or percentage-based method rather than the algorithmic method vCOPs uses for performance reporting.

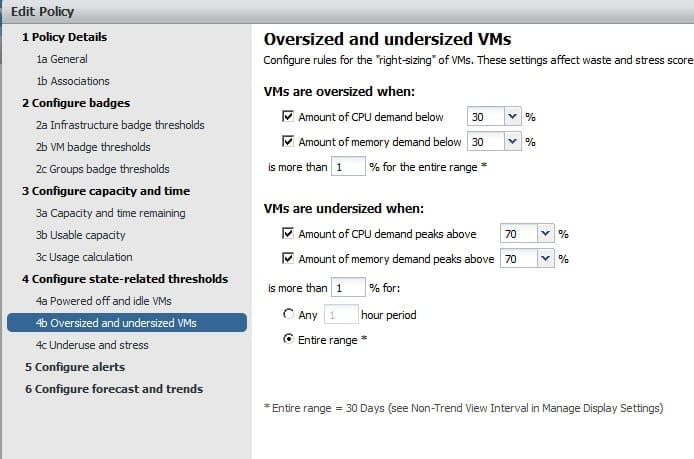

The image above shows the configuration screen where you can set the values that determine oversized or undersized VMs. These are just percentage values, and if the average value of a VM’s metric violates one of them, the VM is listed as too big or too small. This is not the greatest method. As you can imagine, VMs are built for different types of workloads: some run steady and others have large peaks for short windows. Because a single average hides those peaks, the results can be confusing when you try to use the capacity feature.
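To make the problem concrete, here is a minimal sketch of an average-versus-threshold check like the one described above. It is only an illustration: the threshold values, the function name, and the sample data are all made up for this example and are not taken from vCOPs.

```python
from statistics import mean

# Hypothetical thresholds (percent of allocated CPU); NOT vCOPs defaults.
OVERSIZED_BELOW = 30.0
UNDERSIZED_ABOVE = 80.0


def classify_by_average(samples):
    """Label a VM using only the average of its utilization samples."""
    avg = mean(samples)
    if avg < OVERSIZED_BELOW:
        return "oversized"
    if avg > UNDERSIZED_ABOVE:
        return "undersized"
    return "ok"


# Made-up hourly CPU utilization (%) for two VMs over one day.
steady = [55.0] * 24                  # runs at a constant load
bursty = [10.0] * 22 + [95.0, 98.0]   # mostly idle, with a short heavy peak

print(classify_by_average(steady))  # ok
print(classify_by_average(bursty))  # oversized
```

The bursty VM nearly maxes out its allocation for two hours, yet its average is low enough to be flagged as oversized. Shrinking it based on that label would hurt it during the very window it was sized for.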

My hope for upcoming versions is that VMware is able to use some type of algorithm that analyzes each VM and determines whether it is oversized or undersized. This would be a much better approach than using fixed values that don’t work well across a wide variety of configurations.
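As a rough idea of what a per-VM analysis might look like, the sketch below looks at the shape of each VM's own usage (its median and its 95th percentile) instead of a single average. This is purely my own illustration of one simple possibility, not anything VMware has announced or implemented, and the cutoffs, function name, and sample data are assumptions.

```python
from statistics import median, quantiles


def classify_by_distribution(samples, low=30.0, high=80.0):
    """Label a VM from its usage distribution rather than its average.

    `low` and `high` are hypothetical cutoffs in percent of allocation.
    """
    p95 = quantiles(samples, n=20)[-1]  # last of 19 cut points = 95th percentile
    if p95 < low:
        return "oversized"   # even the peaks barely touch the allocation
    if median(samples) > high:
        return "undersized"  # sustained load sits near the ceiling
    return "ok"


# Made-up hourly CPU utilization (%) over one day.
idle = [10.0] * 24
bursty = [10.0] * 22 + [95.0, 98.0]
saturated = [90.0] * 24

print(classify_by_distribution(idle))       # oversized
print(classify_by_distribution(bursty))     # ok
print(classify_by_distribution(saturated))  # undersized
```

Here the bursty VM is left alone because its peaks genuinely need the capacity, while the truly idle VM is still flagged. A real implementation would need far more than this (longer history, seasonality, memory and disk metrics), but it shows why looking at each VM's pattern beats one fixed average threshold.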