System Center Virtual Machine Manager SoFS: Configuring the Fabric

We’re back with our new series on System Center Virtual Machine Manager 2012 R2 (SCVMM 2012 R2) and Scale-Out File Server (SoFS). In part one, we built a virtual SoFS lab. Now that our SoFS and Storage Spaces lab VMs are ready to take on their new role, we will shift our focus to Virtual Machine Manager (VMM) itself and put these nodes to work, taking the opportunity to exercise some of its great new fabric features.

We now have two main objectives to accomplish:

- Deploy a SoFS using Virtual Machine Manager

- From a Storage Space, carve out an SMB3 share for hosting our VMs

For all of the steps that follow, assume that you are signed in to VMM as an administrator or as a user with delegated permissions to manage the fabric. There are a few prerequisites we need to establish in VMM before using the wizard to enable our SoFS, so let’s get started.

Prepare to Enable Scale-Out File Server (SoFS)

Working from the Fabric view in VMM, all of our initial work will be in the Networking node of the navigation tree.

The following procedures need to be completed only if you do not already have a logical network and IP pool for the network to which you will deploy your SoFS (generally this will be either a management or a storage network, either of which may already be in use if your VMM environment has preexisting services). If you already have both, you can skip ahead and simply come back for the next post!

Logical Network

Adhering to the rules of networking in SCVMM 2012 SP1 and newer, we will first establish a new logical network. The type of network will depend on your lab configuration; however, you will most likely only need to select the VLAN-based option in the wizard. In my demonstration, I will host my SoFS on my Management network, which uses VLAN 110 and the IP address space 172.21.10.0/24.

- Right-click the Logical Networks node in the navigation tree, and select Create Logical Network.

- In the Create Logical Network wizard, first enter a name for this fabric network in the Name field: something simple and descriptive, such as Management.

- You will be offered three different types of networking. Select the one that best matches your environment; in my case, this is VLAN-based independent networks. Then click Next.

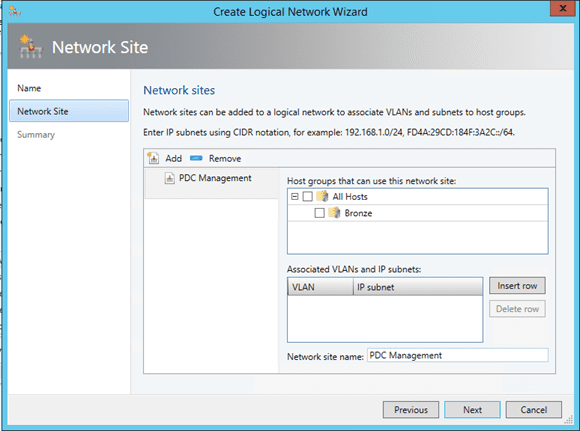

- On the Sites page of the wizard, click Add, which will insert a default site definition that we will customize to represent our management network.

- In the Network site name field at the lower right of the dialog, replace the default text with PDC Management (representing the Management network at our PDC site).

- Just above this field, in the Associated VLANs and IP subnets area, you can provide both the VLAN and the IP subnet that define the site. Click Insert Row.

- In the VLAN field, enter the VLAN ID; I am supplying 110 to match my network.

- Note: If you are using VLAN 0, or, to your knowledge, no VLAN tagging at all, enter 0 in this field. (You can leave it blank, but some known issues trace back to this field not having been explicitly initialized. So for now, I recommend that you avoid that trap and be explicit.)

- In the IP subnet field, you can now supply the IP address space for this network. For example, I will be using 172.21.10.0/24.

- Finally, in the upper right of the dialog, you can select which host groups can access this network site. As we have not focused on configuring host groups, and cannot really define a host group for a SoFS anyway, I recommend that you simply select All Hosts for now. (Again, this is a lab, and we can always come back and fine-tune configuration settings before moving to production.)

- You can now click Next to complete the wizard, after which you should see the main window in VMM update to present your new logical network.
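Before clicking through the Sites page, it can help to sanity-check the values you plan to enter. The sketch below is a hypothetical Python helper (the `NetworkSite` class and its `validate` method are illustrative only and are not part of VMM) that mirrors the site definition above: it insists on an explicit VLAN ID, with 0 meaning untagged, and confirms that the subnet is a proper network address in CIDR notation.

```python
import ipaddress
from dataclasses import dataclass


@dataclass
class NetworkSite:
    """Hypothetical model of one row on the Sites page: site name, VLAN, subnet."""
    name: str
    vlan_id: int
    subnet: str

    def validate(self) -> ipaddress.IPv4Network:
        # Require an explicit integer VLAN: 0 means untagged, and 1-4094
        # are the usable 802.1Q VLAN IDs. A missing value (None) is rejected.
        if not isinstance(self.vlan_id, int) or not 0 <= self.vlan_id <= 4094:
            raise ValueError(f"VLAN ID must be set explicitly (0-4094), got {self.vlan_id!r}")
        # strict=True rejects subnets with host bits set, e.g. 172.21.10.5/24.
        return ipaddress.IPv4Network(self.subnet, strict=True)


site = NetworkSite(name="PDC Management", vlan_id=110, subnet="172.21.10.0/24")
net = site.validate()
print(site.name, site.vlan_id, net, net.netmask)
# prints: PDC Management 110 172.21.10.0/24 255.255.255.0
```

Leaving the VLAN as `None` raises an error here on purpose, echoing the advice to enter 0 explicitly rather than leave the field blank.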

IP Pool

With the logical network in place, we next need to create an IP pool for this network. Setup is again quite trivial; however, you should keep some considerations in mind as you complete this exercise. Generally, we will create an IP pool that spans the full scope of the network to which it is attached. Ideally, this network should not be served by both DHCP and an IP pool at the same time; if it is, add an exclusion covering the IP addresses issued by the active DHCP scope.

Additionally, as we are deploying to a preexisting network, we can safely assume that other nodes are already on this network with IP addresses assigned. You will need to know what these addresses are and manually enter them as exclusions in the IP pool. In my example, I will exclude all the addresses from .1 to .99, as these are currently assigned to servers on this network. This task is easier if you have an IP address management tool, for example Microsoft Windows Server 2012 IPAM integrated with VMM.
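Collecting the addresses already in use, plus any active DHCP scope, into the pool's exclusion format is easy to get wrong by hand. As a minimal sketch, assuming you can export the in-use addresses as a plain list, the hypothetical `to_exclusion_list` function below collapses them into a comma-separated list in which consecutive runs become dash ranges. The DHCP scope of .200 to .210 is invented purely for illustration.

```python
import ipaddress


def to_exclusion_list(addresses):
    """Collapse IPv4 addresses into 'a-b,c' form: consecutive runs become
    dash ranges, isolated addresses stay single. Duplicates are ignored."""
    ips = sorted({ipaddress.IPv4Address(a) for a in addresses})
    runs = []  # each run is [first, last]
    for ip in ips:
        if runs and int(ip) == int(runs[-1][1]) + 1:
            runs[-1][1] = ip  # extends the current consecutive run
        else:
            runs.append([ip, ip])  # gap found: start a new run
    return ",".join(str(a) if a == b else f"{a}-{b}" for a, b in runs)


# Servers already assigned .1-.99, plus a hypothetical DHCP scope of .200-.210.
in_use = [f"172.21.10.{i}" for i in range(1, 100)]
dhcp_scope = [f"172.21.10.{i}" for i in range(200, 211)]
exclusions = to_exclusion_list(in_use + dhcp_scope)
print(exclusions)  # 172.21.10.1-172.21.10.99,172.21.10.200-172.21.10.210
```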

- Right-click your previously created logical network (for example, Management), and from the context menu select Create IP Pool. The Create Static IP Pool wizard then launches.

- On the first page, you should see that the logical network name is preselected.

- In the Name field, provide a friendly name for the pool, such as Management IP Pool.

- On the Network Site page, the associated network site name and IP subnet are already prepopulated for you. You can, of course, adjust these to suit you. If they appear to be correct, click Next.

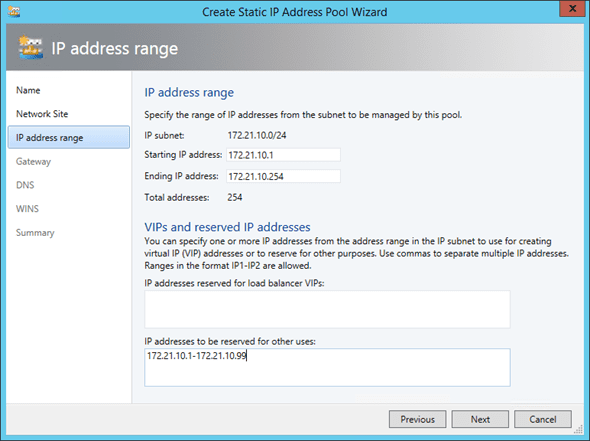

- On the IP Address Range page, you define the IP range for the pool, along with two different types of exclusion. In the Starting IP address and Ending IP address fields, supply the full range for your subnet.

- In the Load Balancers VIPs area, you can reserve a range of IP addresses in this pool for use solely by load balancers. For the purpose of our lab, we can leave this empty.

- In the General area, enter the IP addresses that you want to exclude from the pool as a comma-separated list, using a dash (-) for a consecutive range of addresses. For example, I will enter 172.21.10.1-172.21.10.99 to exclude addresses .1 through .99 on my network.

- On the Gateway page, you can supply the IP of the default gateway for your subnet.

- Next, on the DNS page, supply the IP address of your DNS server, your DNS suffix, and the search order list, matching your network’s configuration. For the purpose of our lab, however, this information will not be used while creating the SoFS.

- I generally skip the WINS page and just click Next.

- Finally, read the Summary and click Finish to create the pool.
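To double-check the values entered on the IP Address Range page, the sketch below uses a hypothetical `pool_summary` helper (not a VMM feature) that derives the starting and ending addresses of a subnet's full usable range, parses an exclusion string in the same comma and dash format, and counts how many addresses remain for the pool to hand out. It assumes the pool spans every usable host address, that is, everything except the network and broadcast addresses.

```python
import ipaddress


def pool_summary(subnet, exclusions):
    """Return (start, end, available_count) for a pool spanning the whole subnet."""
    net = ipaddress.IPv4Network(subnet)
    hosts = list(net.hosts())  # omits the network and broadcast addresses
    excluded = set()
    for item in (s.strip() for s in exclusions.split(",")):
        if not item:
            continue  # tolerate empty entries such as a trailing comma
        first_text, _, last_text = item.partition("-")
        first = int(ipaddress.IPv4Address(first_text.strip()))
        last = int(ipaddress.IPv4Address(last_text.strip())) if last_text else first
        excluded.update(range(first, last + 1))
    available = [ip for ip in hosts if int(ip) not in excluded]
    return hosts[0], hosts[-1], len(available)


start, end, free = pool_summary("172.21.10.0/24", "172.21.10.1-172.21.10.99")
print(start, end, free)  # 172.21.10.1 172.21.10.254 155
```

For the example network, a pool from 172.21.10.1 to 172.21.10.254 with .1 through .99 excluded leaves 155 addresses available.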

After completing both wizards, you are ready to move on to the real task: implementing your SoFS with SCVMM. We have paid a little more attention to this area than strictly required, but the logical network and its associated sites and IP pools are core to SCVMM’s functionality, especially when working with VMs, so it never hurts to understand the context behind your decisions.

As we wrap up this work, you may be asking yourself: what was the true purpose of the work we just completed?

The answer is actually pretty simple. As you will discover in the next section, the wizard will create a cluster from our SoFS nodes and then implement the Scale-Out File Server role on that cluster. Importantly, both of these new elements require a new IP address, and, as with all network-related activities in VMM, an underlying logical network must be defined for VMM to complete its work successfully.
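Because, per this walkthrough, both the new cluster and the SoFS role draw an address from the pool, it is worth confirming the pool can actually supply them. The sketch below uses a hypothetical `allocate` helper that assumes lowest-free-address-first allocation; that ordering is purely an illustration, not a description of how VMM chooses addresses.

```python
import ipaddress
from itertools import islice


def allocate(subnet, excluded_first, excluded_last, assigned, count):
    """Pick `count` free addresses, lowest first, skipping one excluded
    range and any addresses already handed out (hypothetical helper)."""
    net = ipaddress.IPv4Network(subnet)
    lo = int(ipaddress.IPv4Address(excluded_first))
    hi = int(ipaddress.IPv4Address(excluded_last))
    taken = {ipaddress.IPv4Address(a) for a in assigned}
    free = (ip for ip in net.hosts() if not lo <= int(ip) <= hi and ip not in taken)
    picked = list(islice(free, count))
    if len(picked) < count:
        raise RuntimeError(f"pool exhausted: needed {count}, found {len(picked)}")
    return picked


# Assumed: one address for the cluster, one for the Scale-Out File Server role.
cluster_ip, sofs_ip = allocate("172.21.10.0/24", "172.21.10.1", "172.21.10.99", [], 2)
print(f"Cluster: {cluster_ip}, SoFS role: {sofs_ip}")
# Cluster: 172.21.10.100, SoFS role: 172.21.10.101
```

If the pool were too small, or its exclusions too broad, the helper raises an error instead of returning a partial allocation, which is the situation you want to catch before running the SoFS wizard.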