New Features in Windows Server vNext

Microsoft recently released the Technical Preview of Windows Server vNext, known formally as “the Next Release of Windows Server.” There was little fanfare about this release, just a few blog posts and some summary TechNet articles. Even at the recent “future of the cloud” event in San Francisco, hosted by Satya Nadella and Scott Guthrie, and in the TechEd Europe 2014 keynote, very little was said about the next release of Microsoft’s cash-cow enterprise stalwart. Only those who watched sessions focused on Windows Server vNext have heard snippets about what Microsoft is working on. If I had to summarize it in one phrase, it would be “software-defined everything.”

Windows Server vNext

The current release of Windows Server is Windows Server 2012 R2, and it will remain so until at least the summer of 2015. Microsoft has done a complete 180-degree turn in its attitude toward customer feedback. For the past few versions, there was no process for accepting customer feedback on preview releases, nor any publicly displayed desire on Microsoft’s part to do so. One might be correct in asserting that Windows 8 has humbled Microsoft: in September, the company launched a huge customer feedback program, not just for Windows 10, but also for Windows Server and System Center vNext. We expect another significant milestone release in early 2015, with the release to manufacturing in Q2 of 2015.

Software-Defined Everything

The term “software-defined” is closely tied to the evolving scalability, flexibility, and cost-reduction needs of cloud computing, be it the public cloud, private cloud, or hybrid cloud. Windows Server 2012 introduced Microsoft’s first efforts with the following:

- Software-Defined Networking (SDN): Traditionally we deploy VLANs defined on the physical network to provide network scalability and tenant isolation. Hyper-V Network Virtualization (HNV) deploys virtual VLANs (yes, I know, that’s double virtualization), so there is no need to touch the physical layer and we program networks at the software layer. This improves scalability from 4,096 to around 16,000,000 networks, removes the costly, mistake-prone, and slow human element from deploying networks on behalf of tenants, and enables those tenants to perform self-service deployments.

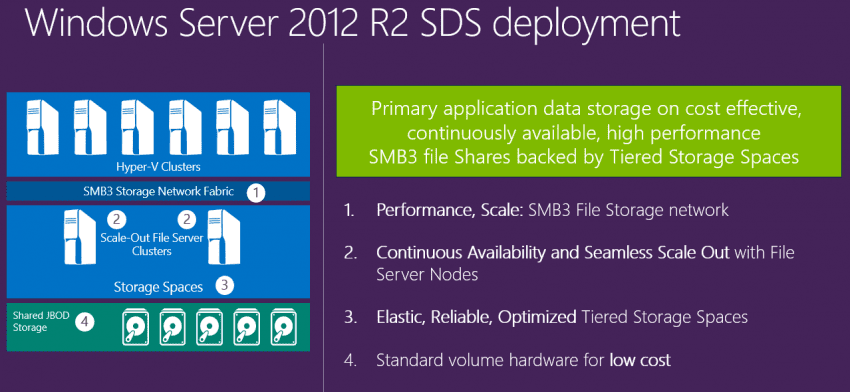

- Software-Defined Storage (SDS): LUNs and SANs are still the norm, but in my experience, Storage Spaces and Scale-Out File Servers (SOFSs) are taking territory, especially in large-scale deployments where cost competitiveness is a huge factor in true cloud computing. Deployment is easy and consists of creating storage stamps, which use much lower-cost hardware without sacrificing performance. This method also provides great scalability and sustains the same levels of high availability as a SAN.
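
The jump from 4,096 to around 16,000,000 networks in the SDN item above comes down to the width of the identifier field. The short sketch below works through that arithmetic. It assumes, as is the case for the NVGRE encapsulation that HNV uses in Windows Server 2012, that a virtual subnet is identified by a 24-bit ID, versus the 12-bit VLAN ID in an 802.1Q tag. The variable names are illustrative only.

```python
# Width of the VLAN ID field in an 802.1Q tag.
VLAN_ID_BITS = 12

# Assumption: HNV identifies each virtual subnet with a 24-bit ID,
# as the NVGRE encapsulation does.
VIRTUAL_SUBNET_ID_BITS = 24

vlan_ids = 2 ** VLAN_ID_BITS
virtual_subnet_ids = 2 ** VIRTUAL_SUBNET_ID_BITS

print(f"VLAN IDs:           {vlan_ids:,}")
print(f"Virtual subnet IDs: {virtual_subnet_ids:,}")
print(f"Increase:           {virtual_subnet_ids // vlan_ids:,}x")
```

In practice a handful of values in each range are reserved, so the usable counts are slightly lower than the raw powers of two.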

Over the past couple of years, Microsoft has also been improving Windows Azure Pack (WAP). This product enables System Center-managed Hyper-V deployments to become clouds (public or private) and moves customers closer to a software-defined data center.

What is software-defined compute?

I heard a new term at one of the TechEd breakout sessions given by Ben Armstrong (senior Hyper-V Program Manager at Microsoft): software-defined compute. What’s that? That’s virtualization; actually, it’s taking the term “compute” from cloud computing. This could be Hyper-V, web services, and possibly even Docker, which runs compartmentalized applications that share an operating system.

Microsoft is moving the data center more toward software and away from expensive proprietary hardware. You can opt to use premium-priced products from OEMs, or you can use off-the-shelf products from lower-cost vendors and add intelligence and functionality in the form of software.

Once again, Microsoft has a slew of new features and enhancements in the next release of Hyper-V, but it is storage and networking that see some of the most significant changes.

In networking, the most significant introduction is that of a Network Controller. This seems to me to be a feature that has come from Azure. This software service will provide many features including, but not limited to:

- Load balancing: You do not need to spend tens or hundreds of thousands of dollars on hardware or virtual appliances from third-party vendors. Microsoft is putting load balancing into the fabric of their cloud OS.

- Distributed firewall: The ability to create layered security in the fabric.

In storage we are getting:

- Shared-Nothing Storage Spaces: Build a cluster from identical servers to form a SATA Storage Spaces farm. Functionality, scalability, and consistency are provided by a bus that layers on top of the cheap SATA disks. This architecture (supported on prescribed hardware only) will be used to create a SOFS for storing archive data (backup) or second-tier virtual machines.

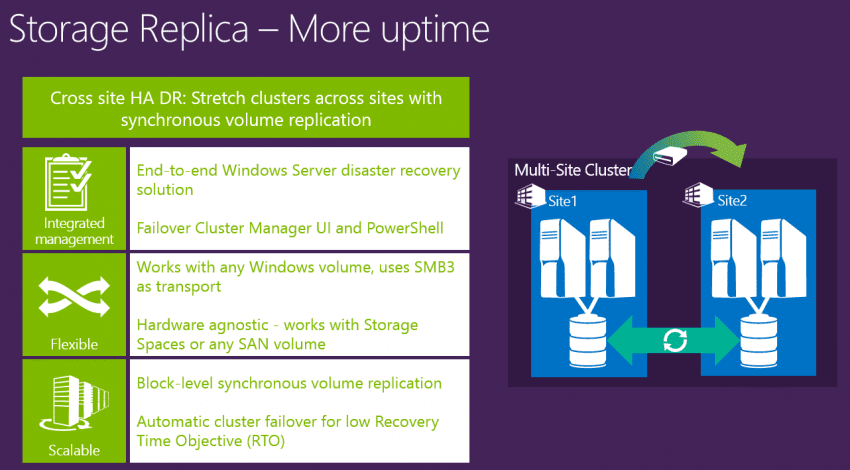

- Storage Replica: This is an exciting development that adds synchronous and asynchronous replication over SMB 3.0 on hardware-agnostic storage to stretch clusters between sites. You can remove the need for expensive hardware or third-party replication software.
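
To make the difference between the synchronous and asynchronous modes mentioned under Storage Replica concrete, here is a toy model of the two behaviors. It is purely illustrative: the `Site` and `Replicator` classes are hypothetical and have nothing to do with Storage Replica’s actual interface or its SMB 3.0 transport. The sketch only shows the general idea that a synchronous write reaches both sites before it is acknowledged, while an asynchronous write is acknowledged first and shipped to the second site later.

```python
class Site:
    """Toy stand-in for a storage site; it just keeps written blocks in memory."""

    def __init__(self, name):
        self.name = name
        self.blocks = []

    def write(self, block):
        self.blocks.append(block)


class Replicator:
    """Hypothetical model of the two modes; not Storage Replica's real interface."""

    def __init__(self, primary, secondary, synchronous):
        self.primary = primary
        self.secondary = secondary
        self.synchronous = synchronous
        self.pending = []  # blocks written locally but not yet shipped

    def write(self, block):
        self.primary.write(block)
        if self.synchronous:
            # Synchronous: the remote copy is written before the caller is acknowledged.
            self.secondary.write(block)
        else:
            # Asynchronous: acknowledge immediately and ship the block later.
            self.pending.append(block)
        return "ack"

    def flush(self):
        """Ship queued blocks to the secondary site (only relevant in asynchronous mode)."""
        while self.pending:
            self.secondary.write(self.pending.pop(0))


sync_pair = Replicator(Site("A"), Site("B"), synchronous=True)
sync_pair.write("block-1")

async_pair = Replicator(Site("A"), Site("B"), synchronous=False)
async_pair.write("block-1")
lag_before_flush = len(async_pair.primary.blocks) - len(async_pair.secondary.blocks)
async_pair.flush()
```

The trade-off the model hints at is the usual one: synchronous replication keeps the second site fully up to date at the cost of waiting on the inter-site link for every write, whereas asynchronous replication acknowledges faster but can leave the second site briefly behind.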

We will be exploring Microsoft’s “software-defined everything” data center over the coming months. It seems clear that the cloud OS is built on basic, relatively low-cost hardware, and that Windows Server and System Center will provide the advanced management, fabric, compute, resiliency, and security features. This is truly a new direction for those of us who grew up with big iron.