Expert Tech Insights: Unleashing the Power of Windows, Microsoft 365, and Microsoft Azure

Revealed: The Cost of Staying Secure on Windows 10

Podcast

Last Update: Apr 16, 2024

Apr 12, 2024

Microsoft Teams Gets Divorced (A Global Unbundling)

Podcast

Apr 02, 2024

5 Reasons to Consolidate Active Directory Domains and Forests

Last Update: Apr 16, 2024

Feb 14, 2024

How to Set Up (Microsoft Entra) Azure AD Domain Services

Last Update: Apr 16, 2024

May 07, 2019

Petri.com’s New Active Directory Outage and Disaster Recovery Survey

Petri.com was recently asked by Cayosoft to conduct a survey amongst our audience regarding Active Directory (AD) downtime and disaster recovery strategies. Petri.com’s extensive experience in the marketplace, coupled with our standing as a representative voice for IT Professionals, allows us to bring distinct insights into prevailing trends and their evolution over time. The survey,…

Last Update: Apr 16, 2024

Feb 15, 2024

How to Find and Block Breached Passwords in Active Directory

Cybercriminals love passwords. They’re simple to guess, easy to steal, and can offer unfettered access to a goldmine of data to hold for ransom or sell to other cybercriminals. For those same reasons, compromised passwords are a constant headache for IT teams, who spend far longer than they’d like helping users reset them and fixing…

Last Update: Apr 16, 2024

Sep 20, 2023

How Immutable Backups Protect Against Ransomware

Ransomware protection is one of the most important topics for IT Pros and C-Level technology executives. Learn how immutable backups and immutable storage help to protect your organization against data corruption and loss, malware, viruses, and ransomware – and how to implement them. This post is sponsored by Object First. Veeam 2023 Ransomware trends report – most ransomware targets backups. In May…

Last Update: Apr 16, 2024

Sep 7, 2023

Cloud Computing Microsoft Azure

How Azure AD and a Load Balancer Can Simplify App Delivery

This post was sponsored by Kemp. Microsoft’s offering in the single sign-on space for several years has been Azure Active Directory, which serves as the underlying directory service for Microsoft 365. This service is also widely used as the SSO and provisioning service compatible with most third-party SaaS applications such as ServiceNow, Salesforce, Atlassian, and many more. In a surprising turnaround from…

Last Update: Apr 16, 2024

Dec 8, 2020

Microsoft 365

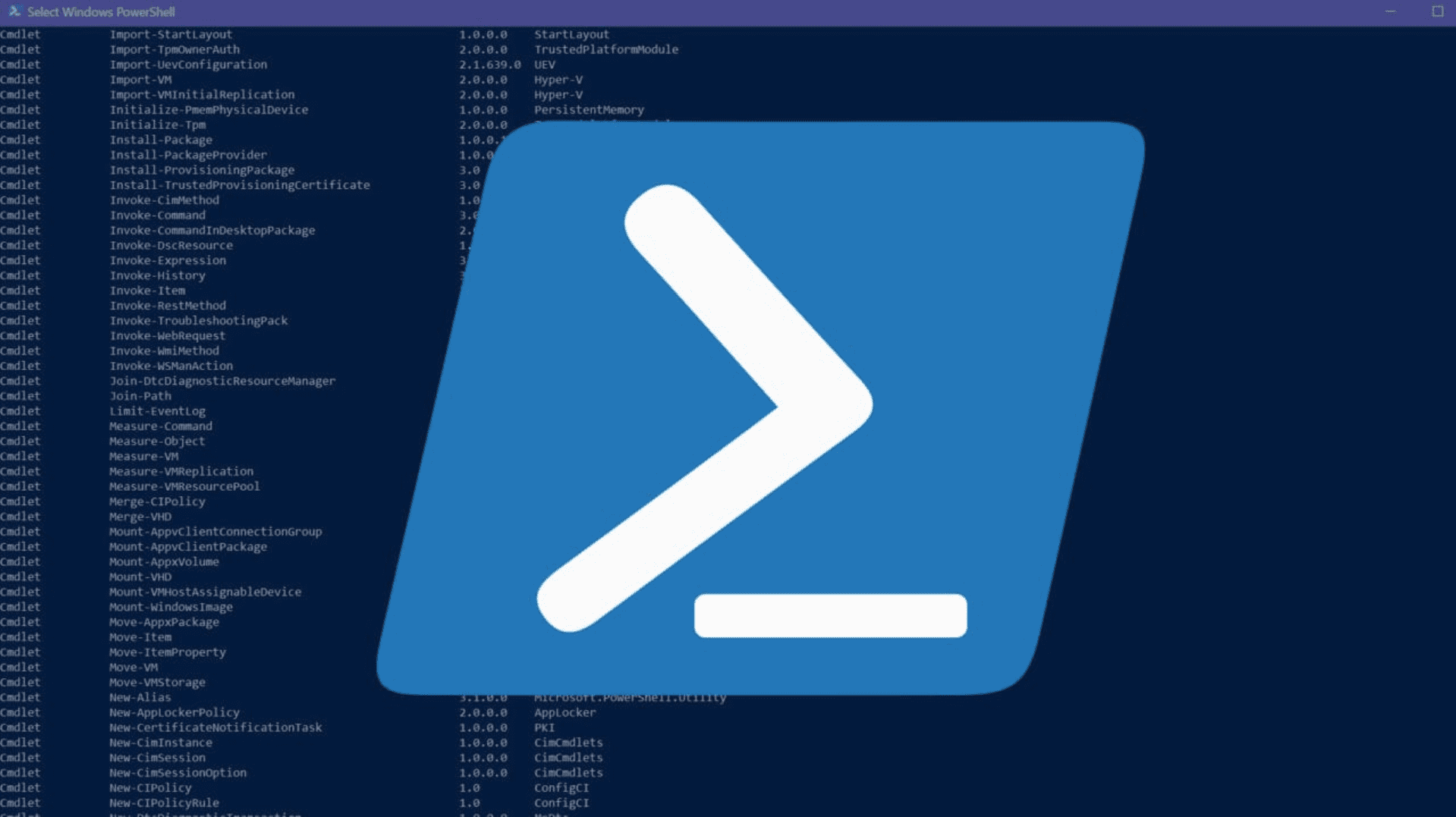

Guide: Using PowerShell to Assist with Backing up Microsoft 365 Data and Settings

If you are aiming for a roll-your-own approach to creating a backup of your data in Microsoft 365, the options are not great. However, when it comes to the configuration of your tenant, there are good options. Even if you’ve bought a backup product for Microsoft 365 or are relying on…

Last Update: Apr 16, 2024

Jun 14, 2021

Getting Started with Backing up Data in Microsoft 365, Understanding the Limitations

So, you’ve decided to jump straight into the deep end and back up your data in Microsoft 365. But first, what does that even mean? Are you planning to back up users’ workstations to OneDrive or embrace the way Microsoft 365 is architected to provide similar or better capacities than a traditional backup product could provide? If…

Last Update: Apr 16, 2024

Apr 28, 2021

Thank you to our petri.com site sponsors

Our sponsors help us keep our knowledge base free.

Petri Experts Spotlight

Laurent Giret, Editorial Manager

- First Ring Daily: Intel Does AI Apr 12, 2024

- First Ring Daily: The Future of Windows on ARM Apr 5, 2024

Michael Reinders, Petri Contributor

Michael Otey, Petri Contributor

- Install and Use SQL Server Report Builder Apr 10, 2024

- SQL Server Essentials: What Is a Relational Database? Mar 12, 2024

Stephen Rose, Chief Technology Strategist

- UnplugIT Episode 2 – In The Loop Jun 13, 2023

- Unplugging What’s Next for Teams 2.0 May 30, 2023

Flo Fox, Petri Contributor

Sukesh Mudrakola, Petri Contributor

Shane Young, Petri Contributor

Chester Avey, Petri Contributor

Sagar, Petri Contributor

- How to Create a Dockerfile Step by Step Nov 14, 2023

- What is Amazon Kinesis Data Firehose? Jul 14, 2023

Sander Berkouwer, Petri Contributor

Bill Kindle, Petri Contributor

Thijs Lecomte, Petri Contributor

PODCASTS

podcast

Revealed: The Cost of Staying Secure on Windows 10

This Week in IT, I look at the recently announced pricing for Extended Security Updates (ESUs) on Windows 10 if you want to stay secure beyond the end of support date in October 2025. Plus, the different ways you can get the updates and what it means for your organization.

podcast

The Scoop on Loop: The Latest Innovations Directly From Microsoft!

Darrell Webster speaks to Rebecca Keys, a Program Manager from the Microsoft Loop team, about the latest and greatest that’s arrived in Microsoft Loop. Transcript: Hey, this is Darrell Webster for UnplugIT. I had the privilege of being able to go to Microsoft Redmond for the Microsoft MVP Summit. And there I met with…

OUR SPONSORS

Cayosoft

Cayosoft delivers the only unified solution enabling organizations to securely manage, continuously monitor for threats or suspect changes, and instantly recover their Microsoft platforms.

ManageEngine

Monitor, manage, and secure your IT infrastructure with enterprise-grade solutions built from the ground up.