Interview: Microsoft’s Elden Christensen Discusses Windows Server 2012 R2 Storage Features

We’ve written a fair amount about what’s new in Windows Server 2012 R2, but we’ve only scratched the surface of what this significant update contains. One of the biggest areas of improvement is in storage, with the R2 release offering up dozens of new and improved features. To get some additional insight into what Windows Server 2012 R2 offers in the storage feature department, I sat down with Microsoft Principal Program Manager Elden Christensen at TechEd 2013 this year.

Jeff James: There were a lot of storage announcements coming out of TechEd this year. Maybe you could give me a really broad, top-level overview of all the big storage changes that are coming in Windows Server 2012 R2?

Elden Christensen: Can I take a step back and give you an overview first? Just to give you the context. I think the big thing is that we’re defining what software-defined storage is, and what our vision is on where we’re going with software-defined storage. We talk to a lot of hosters [hosted cloud computing providers], and hosters are really budget conscious. They’re trying to pinch every penny and trying to get all the value they can out of their infrastructure. They have a very different mentality than we see with enterprise customers; they’re much more budget conscious.

With Windows Server 2012 R2 we really shifted our focus to specific scenarios around the private cloud, hosted cloud, and cloud service providers. With that context in mind we thought about storage. There are two big challenges around storage. The first is acquisition cost. We think about customers that have to go out and buy very expensive Fibre Channel SANs, and they end up spending a lot of money. That’s an inhibitor to hosters and private cloud deployments.

The second thing is operational cost. People have dedicated SAN admins. A dedicated person just to simply attach a server to a disk? That’s crazy. That’s insanity that you have to have a dedicated, highly skilled person just to take a spindle and present some storage to a server. Those are the problems we want to solve. When you look at our vision around the private cloud, we think about the compute layer and Hyper-V systems. We think private cloud or hosted cloud. What hosters want is not so much scale up, like “I don’t want to go buy a super big, very expensive, high-end beefy box, put lots and lots of money into it, and then have it become outdated very quickly.” They want to do scale-out commodity.

Jeff: Commodity hardware just scaled out.

Elden: Scaled out, but when you do that, you start thinking about the cost of it. It makes sense to do scaled-out, low-cost commodity, but then to go put expensive Fibre Channel host bus adapters (HBAs) in each and every one of those servers? A low-cost commodity, and then I’m going to put expensive Fibre Channel HBAs in every single one of them?

We wanted to think about how could we maintain that same mentality of enabling those to be low-cost scale outs. In doing that, we think about using Ethernet as the physical topology. The next question is what do we use as a protocol that runs in that topology?

We want to use standard NICs and standard servers that are low cost off the shelf. Then we think about the protocol. Our strategy really is to run SMB. We thought about SMB as being a file-based protocol to run at the scale-out compute layer that runs against storage. You could almost think of it as a scale-out file server. I mean file-based storage almost as a NAS device. It’s a NAS device which serves up file-based protocols for all those virtual machines.

That was that strategy. The next strategy is that we wanted to enable that NAS device, as you can think of it: the scale-out file server plus storage.

There’s two additional parts: One is we wanted to give the lowest cost possible. One of the things is, we’re not running out and telling our customers to go throw away their SANs. Because the lowest-cost storage is the storage you already have.

The next thing we’re telling customers is this: If you need to go buy new storage, we’re trying to present new lower-cost options around using low-cost storage and software. Software-defined storage, and really enabling that to become highly available, because if you think of a SAN, exactly what is a SAN? Just a bunch of spindles? They have a PC sitting in front of [that storage hardware] called the controller. It’s just a PC running software, that’s all it is. They put it in a little shrink-wrapped metal [package] and put a little blue light on it, and then they charge you a nice premium.

Jeff: That leads to the question I asked Jeffrey Snover yesterday. There’s this whole ecosystem of storage providers, like HP and NetApp and EMC and all these other vendors who have really done well doing that, providing management software and packaging up storage hardware for a nice premium. Do you see [the new storage features in Windows Server 2012 R2] cutting off the low end of what they’re doing, so people don’t have to go out and buy something like that? They can just use what they have with this new capability?

Elden: We’re trying to provide customers low-cost alternatives. It’s about providing customers the opportunity to have a low acquisition cost for the solution they deploy. Some of these [storage appliance vendors] provide a turnkey solution, in the sense that everything is in a single box, and some customers prefer that. We definitely embrace that.

They fit into our vision as well, in the sense that you can take an EMC box or an HP box, and that could be your block storage in the back end of your scale-out file server. We’re fully documenting [everything we’re doing with SMB] through protocol documentation.

I think EMC has already added support. I know NetApp is working on it, and they’re going to have theirs available soon. If customers want to go from the scale-out file server over SMB directly to an EMC or NetApp box, they can do it direct. It all fits in our vision still, if that makes sense.

Jeff: There’s a ton of individual new features and functionality with storage in Server 2012 R2. I know we probably don’t have time to go over everything in detail, but maybe you could highlight a few of your favorite new or updated storage features?

Elden: I’ll go bottom-up. On the bottom [with] Storage Spaces. We did a V1 with Spaces in [Windows Server] 2012.

Jeff: Yeah, that was really popular.

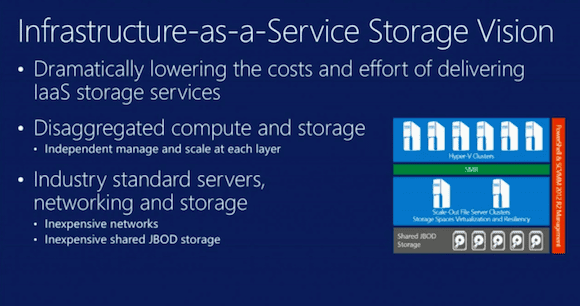

Microsoft’s vision for Infrastructure as a Service Storage in Windows Server 2012 R2. (Source: Microsoft)

Elden: It was popular, but I think that we were missing a few key features. I think tiering is the big one. When people think about serious enterprise-class storage, they consider tiering a must-have feature. They go look at an EMC or a NetApp box, and those are basic, must-have features. That was something we were lacking, something a lot of customers would consider [needed] to be enterprise-class ready. But now we’re there. I think tiering is the biggest one. Write-back caching is another big feature. Those are the two big ones I would think about down on the storage layer.
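[Ed: For readers unfamiliar with tiering, the idea can be sketched in a few lines. The snippet below is a toy model only, not how Storage Spaces implements it: the `place_blocks` function, the access log, and the capacity unit are all hypothetical names invented for illustration. It ranks blocks by how often they are accessed and puts the hottest ones on the fast tier.]

```python
from collections import Counter


def place_blocks(access_log, ssd_capacity):
    """Assign the most frequently accessed blocks to the fast (SSD) tier.

    access_log   -- iterable of block IDs, one entry per I/O (hypothetical input)
    ssd_capacity -- number of blocks the fast tier can hold (hypothetical unit)
    """
    heat = Counter(access_log)
    # Hottest blocks first; ties are broken by block ID so the result is deterministic.
    ranked = sorted(heat, key=lambda block: (-heat[block], block))
    ssd = set(ranked[:ssd_capacity])
    hdd = set(ranked[ssd_capacity:])
    return ssd, hdd


# Made-up access pattern: block "a" is read three times, "b" twice, "c" and "d" once.
log = ["a", "b", "a", "c", "a", "b", "d"]
ssd, hdd = place_blocks(log, ssd_capacity=2)
print("SSD tier:", sorted(ssd))
print("HDD tier:", sorted(hdd))
```

[A real implementation would also have to decide when to re-evaluate placement and how to move data without disrupting I/O; this sketch ignores both.]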

As we work our way up the stack, dedupe is the other big one. We introduced dedupe with the V1, in 2012, but it had some deficiencies. The first one is that when it was originally designed, it was designed with offline files in mind. When you think of a Hyper-V scenario, that worked great for a VM store or a VM library. Customers were like, “No, no, no, this is incredible savings. I get a 90 percent savings on my storage. I want all that richness and functionality now to be running with live VHDs.” That’s one of the big enhancements now: live VHD support.
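[Ed: Why do virtual machine images dedupe so well? A minimal sketch, assuming fixed-size chunks and SHA-256 hashing purely for illustration (this is not a description of the Windows Server implementation), shows the principle: images built from the same base share most of their chunks, so each shared chunk only needs to be stored once. The chunk size and the synthetic images are made up.]

```python
import hashlib

CHUNK_SIZE = 4096  # assumed fixed chunk size, chosen only for illustration


def dedupe_savings(files):
    """Return (raw_bytes, stored_bytes) when each unique chunk is stored once."""
    raw = 0
    unique = {}  # chunk hash -> chunk length
    for data in files:
        for offset in range(0, len(data), CHUNK_SIZE):
            chunk = data[offset:offset + CHUNK_SIZE]
            raw += len(chunk)
            unique.setdefault(hashlib.sha256(chunk).digest(), len(chunk))
    return raw, sum(unique.values())


# Ten synthetic "VM images": nine shared base chunks plus one chunk unique to each image.
base = b"".join(bytes([i]) * CHUNK_SIZE for i in range(9))
images = [base + bytes([100 + n]) * CHUNK_SIZE for n in range(10)]

raw, stored = dedupe_savings(images)
print(f"raw={raw} bytes, stored={stored} bytes, savings={1 - stored / raw:.0%}")
```

[The savings figure depends entirely on how much the images overlap; the synthetic data here is not meant to reproduce the 90 percent quoted above.]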

Another one is CSV. We see that CSV is the foundation, and not having CSV support was one of the core blockers.

As we work our way up the stack, we’ve done a lot of enhancements in the scale-out file server around improving resiliency and better scale-out capabilities: more intelligent moving of SMB handles, more intelligent placement of who owns the space, and who’s doing the coordination with the storage. In the protocol layer, we also did protocol enhancements between the compute and storage. I think about the enhancements we did with SMB 3, actually 3.02 now, although we’re still just calling it 3.0, which is how you’ll see us refer to it. The RDMA enhancements for the performance…

Jeff: That was something on the [TechEd 2013] keynote with [Microsoft’s] Jeff Woolsey giving a “shock and awe” presentation of all the new storage features – an avalanche of features that just kept coming. It’s like, “OK, we do this, and then we do this.” “By the way, forget that price.” It’s like the Ronco thing.

Elden: Yeah, and then live migration over SMB. [Microsoft’s] Ben [Armstrong] does a cool demo. He does a comparison where he does the live migration you know today, then the live migration with compression. What’s funny is I didn’t even consider live migration times that big of a problem. [laughs] Then, it went from “not that big of a problem” to “amazing” with compression, and then SMB.

SMB, from a time-wise perspective, incrementally doesn’t seem that much faster…I’m going to do the repeat of the storage session we did at the Reviewers Workshop. At TechEd he’s going to do a very cool demo of live migration over SMB.

The big difference between compression and live migration over SMB is RDMA. With RDMA, you offload all the processing overhead. From a time perspective, on the time it takes to do a live migration, you had a cool screenshot in your post. You did a post, and it was 10 seconds or something. It was an improvement, but it wasn’t an order-of-magnitude improvement.

The real value, as what you’re going to see in Jose Barreto’s blog, is around the CPU cost of that. When you’re doing compression, there’s a CPU cost as it has to compress the data, then send the data, then decompress. With SMB/RDMA, all of those are being off-loaded to the NIC.

He’s doing the live migration. He has Perfmon open, and he shows you Perfmon utilization during the live migration. You see time to live migrate versus CPU utilization. You see that the CPU utilization doesn’t spike, yet bandwidth goes way up. With compression, you’ll see CPU spike up, but you still get performance. That’s the trade-off.
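[Ed: The CPU side of that trade-off is easy to see on any machine. The sketch below is only an illustration of why compression costs CPU cycles; it does not model RDMA or NIC offload, which cannot be reproduced in a few lines of Python. The payload is a made-up, highly compressible stand-in for guest memory, and `cpu_seconds` is a hypothetical helper.]

```python
import time
import zlib


def cpu_seconds(func, *args):
    """Run func(*args) and return (result, CPU seconds used by this process)."""
    start = time.process_time()
    result = func(*args)
    return result, time.process_time() - start


# Made-up stand-in for guest memory: roughly 9 MB of highly compressible data.
payload = (b"guest memory page " * 64) * 8192

copied, copy_cpu = cpu_seconds(bytearray, payload)            # plain copy, no transformation
packed, pack_cpu = cpu_seconds(zlib.compress, payload)        # sender-side compression
restored, unpack_cpu = cpu_seconds(zlib.decompress, packed)   # receiver-side decompression

print(f"bytes to send: {len(payload)} raw vs {len(packed)} compressed")
print(f"CPU seconds: copy={copy_cpu:.4f} compress={pack_cpu:.4f} decompress={unpack_cpu:.4f}")
```

[Compression shrinks what goes over the wire but spends CPU time on both ends, which is the spike described above; the exact numbers will vary by machine and by how compressible the data is.]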

Jeff: As far as working with storage providers, and making sure that all of these new features are going to be supported in hardware, where are some of the areas where you think the partners could do a better job – or, collectively, where Microsoft and your storage partners could do better to roll these features out, and make sure they’re supported? Where are some of the areas where there still needs to be work done?

Elden: Honestly, I think one of the challenges today is that backup is still harder than it needs to be. I see opportunities for us to work better together. I think that between what you see in VSS and VDS and the operating system… There are three parties at play. It’s a trio in the sense that we need the ISV, the requester, whether that be DPM, or whether that be Symantec’s Backup Exec or NetBackup, as the requester who initiates the backups.

Then, I think about the operating system being VSS and VDS, and the core infrastructure. CSV has a distributed backup infrastructure. Then, on the back end, there is the storage array, which provides hardware snapshots that integrate into VSS, which are then called by the ISV. I think that’s probably one of the clearest opportunities where the three parties could come together to deliver a better end-to-end solution to customers.

Jeff: The other thing that I remember from the [Microsoft Reviewer’s Workshop] is System Center and Windows Server being developed concurrently. It seems like a paradigm shift. It used to be that all these different groups at Microsoft were off on their own. It sounded like internal development [at Microsoft] was like herding cats. But now it seems like everything, at least in the Server and Tools group [Ed: Now known as the “Cloud and Enterprise” group], has been aligned. Maybe you could talk a little bit about how that process has helped the storage side of things?

Elden: I’ll go there in just a second. There were two aspects of our software-defined storage, one of which we were talking about was the acquisition cost. I talked a lot about that, but I hadn’t talked at all about the operational cost.

The one thing, which is an important point, is that it used to be, in the past, that when you wanted to provision your storage, or even create your own, you opened up x tool. Then, you wanted to format your drive, and you opened up another tool. Then, you needed to cluster it, and you opened another tool. Then, you needed a VM on it, and you opened yet another tool. We had this very fractured experience where you were jumping between different tools, where you could see the org chart in the product. There was this lack of an end-to-end experience.

One of the huge things we accomplished in this release is that now you can open VMM, and that entire stack I just described, all the way from the storage whether that be Spaces – or, if you want to stick with SANs, we will manage that, too – to the operating system, and creating volumes, and formatting file systems, and creating CSV, and creating the file server on top of it, and then provisioning the Hyper-V servers, and configuring SMB between, and ACLing SMB. Wiring all that up is huge. It’s all now a single pane of glass. That’s about reducing those operational costs.

That’s just on the one thing. Now, let me answer your question. One of the challenges we’ve always had is that we had different ship cycles between the server and Virtual Machine Manager (VMM). The biggest challenge with that was that we would ship the server, and we would be like, “Adopt!” Customers would look at us and be like, “But I don’t have the management tool to deploy it.” They would look at us like we were crazy. That was always hard.

Jeff: Also, there was a period where you couldn’t use System Center 2012 with Windows Server 2012. You had to wait for…

Elden: … a service pack. You’d wait for a service pack, and it would come. They were just on different ship cycles. You would see a couple of different problems. One would be we would deploy the server, we’d hold these great TechEds, and we’d say, “Rah rah rah, go deploy.” Then, customers would be like…

Jeff: “…now what?”

Elden: “But I don’t have a management tool. How am I supposed to deploy without a management tool?” That was fair. We’ve aligned that. Now, we’ve [made it so that when] you have all these great storage features, and they say that you can manage it end to end, you actually can manage it end to end, and not manage it end to end 6 to 12 months later. You don’t have to wait for a service pack, so that’s a big thing.

Organizationally, internally, [Microsoft product teams] have been aligned in the sense of we used to be two different organizations, and now we’re internally organized. Even physically, we used to be in different buildings. Now, we’re in the same building, we’re just on different floors. They have organizationally brought us together, physically brought us together, and as a result, you can see the results.

Jeff: That’s a big organizational change.

Elden: You now have a single experience, a single paradigm.

Jeff: What has been the reaction from Windows Server customers and the community about all of these new Windows Server 2012 R2 storage features? What are they most impressed by? What are they most excited about?

Elden: What I see is that the excitement is around the storage and the storage enhancements, and also [all the new features] in the next release of Hyper-V. I think those are the two big ones people are coming up and asking about. They’re excited about Hyper-V Replica improvements, Gen 2 VMs, live migration improvements, and then the storage side.

Actually, what’s interesting is that at TechEd last year, a lot of people were coming up and asking about it. We had this philosophical shift towards file-based storage, and there were a lot of people coming and going, “What is this? What does it mean?” and being kind of apprehensive about running apps over SMB. Now I see people coming up a year later, showing up and saying, “Oh, I actually have a scale-out file server deployed. I’m actually doing it, I’m actually trying it, and I like it.”

There’s been a big shift over the last 12 months. In fact, that’s actually one of the biggest things: 12 months ago when we shipped 2012, if you talked about the idea of running applications over SMB, most people would have laughed at you. If you compare the performance enhancements, SMB 2 was 46 percent of line speed, now we’re at 98 percent of line speed. That doesn’t even talk about multichannel, RDMA, or all the other cool stuff. That’s just flat-out SMB 2, compared to SMB 3.
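[Ed: To put those percentages in perspective, here is a quick back-of-the-envelope calculation. The 10 Gb/s link and the 100 GB transfer size are assumptions chosen for illustration; the interview does not name either. Only the 46 and 98 percent figures come from the conversation above.]

```python
LINK_GBPS = 10  # assumed 10 GbE link; the interview does not name a link speed


def effective_gbps(link_gbps, efficiency):
    """Throughput achieved when a protocol reaches a given fraction of line speed."""
    return link_gbps * efficiency


def transfer_seconds(size_gb, link_gbps, efficiency):
    """Seconds needed to move size_gb (decimal gigabytes) at the effective rate."""
    return size_gb * 8 / effective_gbps(link_gbps, efficiency)


smb2 = effective_gbps(LINK_GBPS, 0.46)
smb3 = effective_gbps(LINK_GBPS, 0.98)
print(f"SMB 2: {smb2:.1f} Gb/s, SMB 3: {smb3:.1f} Gb/s, ratio: {smb3 / smb2:.2f}x")
print(f"100 GB copy: {transfer_seconds(100, LINK_GBPS, 0.46):.0f} s "
      f"vs {transfer_seconds(100, LINK_GBPS, 0.98):.0f} s")
```

[Whatever the link speed, going from 46 to 98 percent of line speed is a bit more than a doubling of usable throughput on the same hardware.]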

It’s the whole idea of embracing this file-based storage strategy or direction we’re moving towards. People 12 months ago thought we were crazy, and now all of a sudden, people are embracing it. It’s a totally different conversation we’re having with customers on the floor.

We’d like to thank Elden Christensen for taking the time for this interview. For more on the new storage features in Windows Server 2012 R2, we’ve posted some articles describing the new features in storage spaces in Windows Server 2012 R2, new failover clustering features, and more information on SMB 3.0 and scale-out file servers.