Over the past few weeks, I’ve discussed converged networks and I’ve shown you how to design a converged network. In this article I will set out some scenarios that will lead you to the design of some alternative converged network implementations in Hyper-V hosts.

Converged Network Designs: Non-Clustered Host With SMB 3.0 Storage

In this example, a hosting company is deploying a new public cloud based on Windows Server 2012 (WS2012) with an eye on eventually upgrading to Windows Server 2012 R2 (WS2012 R2). The following table translates customer requirements into design features.

| Requirement | Design |
| --- | --- |
| Scale-Out File Server storage | Two storage networks will be required because SMB Multichannel needs two networks in order to use two NICs on a cluster node. |
| SMB Direct | RDMA-capable NICs (rNICs) are required for the storage networks. |
| Rapid mobility | Live migration must happen quickly. The hosts will be densely populated, so VMs must drain quickly during maintenance. Merge the live migration network with the storage network to take advantage of the 10 Gbps NICs. |
| Tenant isolation | The tenants of this public cloud will be physically isolated. This means that infrastructure networks cannot be converged with the VM network. |
| Maximized convergence | The management, cluster, and backup networks can be converged with live migration and storage. |
| Densely populated hosts | This is a good indication that dVMQ should be used on the VM network. |
| RSS/dVMQ | RSS will be used to maximize the effectiveness of SMB Multichannel on each storage network. RSS is incompatible with dVMQ, which will be used on the VM network. |
| WS2012 R2 | This design can be carried forward to WS2012 R2 and allows both storage networks to be used for hardware-offloaded SMB (Direct) live migration running at up to 20 Gbps. |
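To put the "up to 20 Gbps" figure in context, here is a rough back-of-the-envelope sketch of how long it might take to drain a host over one or both storage networks. The function name, the `efficiency` factor, and the 256 GB memory figure are illustrative assumptions, not values from this design. The estimate ignores protocol overhead, memory that changes during the copy, and any QoS limits.

```python
def drain_time_seconds(vm_memory_gb: float, link_gbps: float,
                       efficiency: float = 1.0) -> float:
    """Estimate the seconds needed to copy vm_memory_gb of VM memory.

    vm_memory_gb: total memory to move, in decimal gigabytes (assumption).
    link_gbps:    usable live migration bandwidth in gigabits per second.
    efficiency:   hypothetical fraction of the link actually achieved.
    """
    if link_gbps <= 0:
        raise ValueError("link_gbps must be positive")
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in the range (0, 1]")
    gigabits = vm_memory_gb * 8  # 1 gigabyte = 8 gigabits
    return gigabits / (link_gbps * efficiency)


# Compare one 10 Gbps rNIC with both rNICs used together (hypothetical 256 GB).
for gbps in (10, 20):
    seconds = drain_time_seconds(256, gbps)
    print(f"{gbps} Gbps: about {seconds:.0f} seconds")
```

Doubling the usable bandwidth halves the estimate, which is the practical benefit of using both storage networks for live migration.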

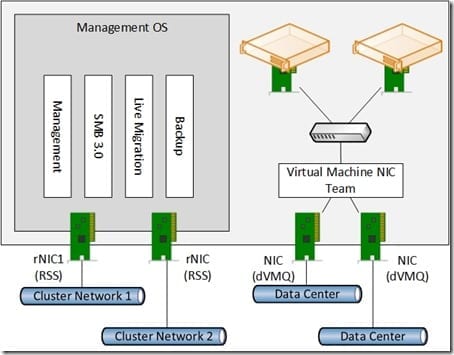

The resulting design will be as depicted below:

A non-clustered host with fault tolerant virtual machine and SMB 3.0 networking.

Data Center Bridging (DCB) will be used to enforce QoS rules on the two rNICs for each protocol. This is a hosting company, so maximum bandwidth QoS rules might be deployed to the virtual NICs of the tenant virtual machines.
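As a rough illustration of how per-protocol DCB rules divide an rNIC, the sketch below turns bandwidth percentages into guaranteed minimums on a 10 Gbps link. The class names and percentages are hypothetical examples and are not values recommended by this design. The sketch assumes that any bandwidth not reserved is left to unclassified traffic.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class TrafficClass:
    name: str
    percent: int  # minimum share of the link reserved for this class


def guaranteed_gbps(classes: List[TrafficClass],
                    link_gbps: float = 10.0) -> Dict[str, float]:
    """Return the minimum bandwidth (Gbps) each class is guaranteed."""
    total = sum(c.percent for c in classes)
    if any(c.percent <= 0 for c in classes):
        raise ValueError("each class needs a positive percentage")
    if total > 100:
        raise ValueError(f"classes reserve {total}%, more than the link has")
    shares = {c.name: link_gbps * c.percent / 100 for c in classes}
    # Whatever is not reserved remains for unclassified traffic.
    shares["(unclassified)"] = link_gbps * (100 - total) / 100
    return shares


# Hypothetical split for one rNIC; adjust to the real workload.
example = [
    TrafficClass("SMB storage", 40),
    TrafficClass("Live migration", 30),
    TrafficClass("Cluster", 10),
]
for name, gbps in guaranteed_gbps(example).items():
    print(f"{name}: at least {gbps:.1f} Gbps")
```

These are minimum guarantees under contention. A class can normally use more when the link is otherwise idle.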

Clustered Host with Minimized Fault Tolerance

A public cloud service provider is deploying a WS2012 R2 Hyper-V cluster where fault tolerance is provided only by having highly available hosts. This will minimize per-server capital and power costs, and stress the need to build high availability at the application layer.

| Requirement | Design |
| --- | --- |
| SMB 3.0 Storage | The design should benefit from SMB 3.0 storage and allow storage networking to be converged with other networks. |
| Maximized convergence | All host networks will be merged onto a single rNIC that is being used for storage. |
| 10 Gbps converged networking | 10 Gbps networks will be used to converge networking of the host. |
| Minimized infrastructure spend | Fault tolerance will be done using failover clustering at the host level, and application design at the guest level. No NIC teaming will be used. |
| Maximize host performance | RSS will be enabled on the host NIC and dVMQ will be enabled on the VM NIC. |
| Constrain virtual machine networking | This is a rare circumstance in which each virtual machine NIC will have a maximum-bandwidth-allowed QoS rule. |

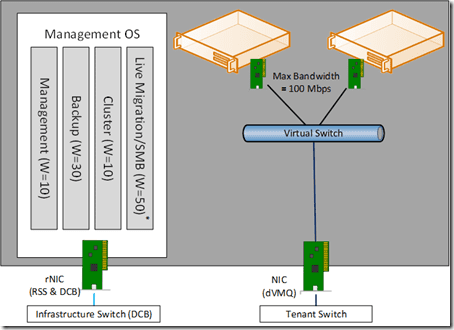

The resulting design will be as depicted below:

A clustered host with no built-in hardware fault tolerance.

DCB will be used to enforce QoS on the host NIC because of the use of RDMA for SMB Direct storage and live migration.
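The maximum-bandwidth rule on each virtual machine NIC, listed in the table above, can be sanity-checked against the single 10 Gbps VM NIC. The sketch below is a minimal example. The function name, the VM count, and the per-VM cap are hypothetical, because the design does not state them.

```python
from typing import Dict, Union


def cap_summary(vm_count: int, cap_mbps: float,
                nic_gbps: float = 10.0) -> Dict[str, Union[float, bool]]:
    """Summarize the worst case if every VM transmits at its cap at once."""
    if vm_count < 0 or cap_mbps < 0 or nic_gbps <= 0:
        raise ValueError("counts and bandwidths must be non-negative")
    peak_gbps = vm_count * cap_mbps / 1000  # convert Mbps to Gbps
    return {
        "peak_vm_demand_gbps": peak_gbps,
        "oversubscription": peak_gbps / nic_gbps,  # above 1.0: caps exceed NIC
        "fits": peak_gbps <= nic_gbps,
    }


# Hypothetical host: 40 VMs, each capped at 500 Mbps, on one 10 Gbps VM NIC.
print(cap_summary(40, 500))
```

An oversubscription ratio above 1.0 is not necessarily a problem, because tenants rarely peak together, but it shows how far the caps alone are from protecting the NIC.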

Upgrading a 1 Gbps Network Host to WS2012 R2

A client wants to upgrade their existing hosts to WS2012 R2. The hosts have the classic four on-board NICs with an added 4-port expansion card.

| Requirement | Design |
| --- | --- |
| Re-use iSCSI SAN | Two iSCSI networks with MPIO will be required. |
| iSCSI support requirements | The SAN manufacturers require two VLANs on two dedicated switches for iSCSI. This rules out converging the iSCSI networks. |
| Add network path fault tolerance | NIC teaming will be used where required. |
| Maximize convergence | The remaining networks on the clustered host will be implemented as management OS virtual NICs and share a single NIC team with the VM network. |

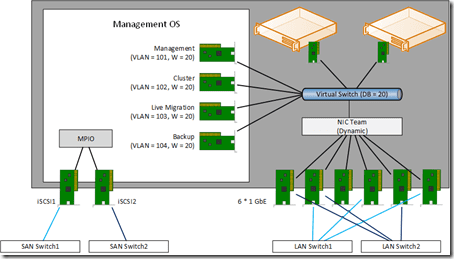

The resulting design is as shown below:

A clustered host with eight * 1 GbE NICs.

There is no need for QoS on the iSCSI NICs. QoS will be enforced by the virtual switch to guarantee minimum levels of service for the remaining host networks.
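To illustrate how weight-based minimums on the virtual switch might work out, the sketch below splits the capacity of the NIC team in proportion to relative weights. The network names, the weights, and the 4 Gbps team capacity are hypothetical, because the design does not state how many NICs are in the team. A single stream may also not be able to use the full aggregate of a team of 1 GbE NICs.

```python
from typing import Dict


def minimum_shares(weights: Dict[str, int],
                   team_gbps: float) -> Dict[str, float]:
    """Split team_gbps between networks in proportion to their weights."""
    if any(w < 0 for w in weights.values()):
        raise ValueError("weights must not be negative")
    total = sum(weights.values())
    if total <= 0:
        raise ValueError("at least one weight must be positive")
    return {name: team_gbps * w / total for name, w in weights.items()}


# Hypothetical weights for the networks that share the team.
example_weights = {
    "Management": 5,
    "Cluster": 10,
    "Live migration": 30,
    "Backup": 15,
    "Virtual machines": 40,
}
for name, gbps in minimum_shares(example_weights, team_gbps=4.0).items():
    print(f"{name}: at least {gbps:.2f} Gbps under contention")
```

Because the weights are relative, only their ratios matter. Keeping the total at 100 simply makes each weight read as a percentage.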